Meet Kimi K2-Instruct

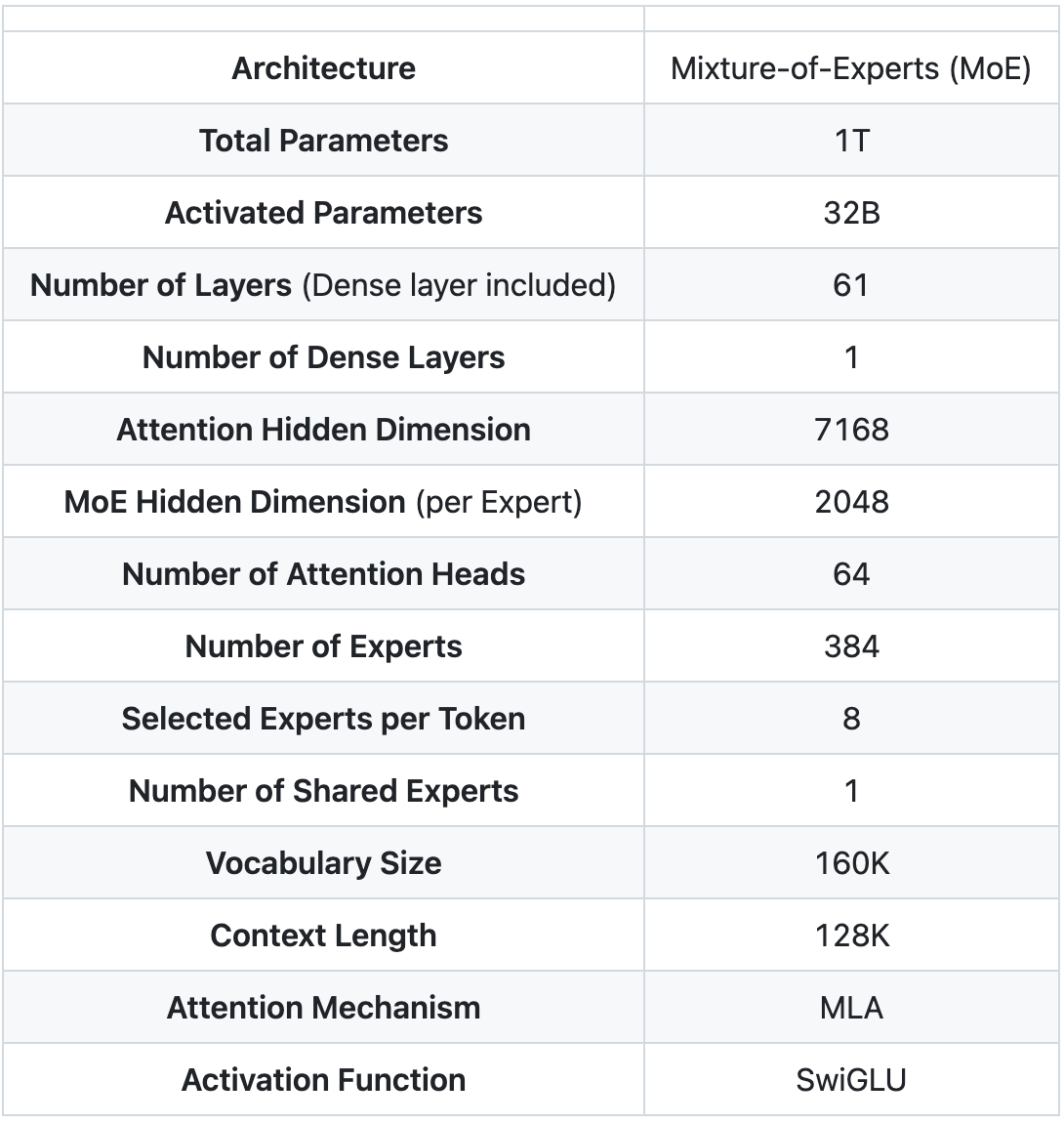

Kimi K2 is a 1-trillion-parameter Mixture-of-Experts (MoE) model from Moonshot AI, and its Instruct variant is now fully integrated into the GMI Cloud inference engine. Only 32 billion of its 1 trillion parameters are active for any given token, and it supports a 128K-token context window. Trained with the MuonClip optimizer, Kimi K2 performs strongly across frontier knowledge, reasoning, and coding tasks and is specifically optimized for agentic capabilities. See Moonshot AI's GitHub repository for more details.
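To make the "32 billion active out of 1 trillion total" distinction concrete, here is a toy sketch of top-k expert routing. It uses a generic softmax router with 8 experts and top-2 selection; both the sizes and the gating function are illustrative assumptions, not Kimi K2's actual configuration. The router scores each expert for a token, and only the selected experts' weights take part in that token's forward pass.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_logits, top_k):
    """Pick the top_k experts for one token and renormalize their gate weights.

    Returns (expert_index, weight) pairs, highest-scoring expert first,
    whose weights sum to 1. Experts not chosen do no work for this token.
    """
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:top_k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))


def active_fraction(active_params, total_params):
    """Share of the model's parameters used in one token's forward pass."""
    return active_params / total_params


if __name__ == "__main__":
    random.seed(0)
    num_experts, top_k = 8, 2  # toy sizes, NOT Kimi K2's real configuration
    logits = [random.gauss(0.0, 1.0) for _ in range(num_experts)]
    for expert, weight in route_token(logits, top_k):
        print(f"expert {expert}: gate weight {weight:.3f}")
    # Headline figures from the post: 32B active out of ~1T total parameters.
    print(f"active fraction: {active_fraction(32e9, 1e12):.1%}")
```

With the post's headline numbers, roughly 3.2% of the weights are exercised per token, which is why an MoE model of this size can serve requests far more cheaply than a dense 1-trillion-parameter model.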

Why Kimi K2?

Kimi K2 was developed by Moonshot AI, a frontier AI research lab based in China. Moonshot is focused on building competitive open models with practical applications, particularly in long-context memory and multi-modal learning. Kimi K2 is their most advanced offering to date and reflects their broader mission of making cutting-edge AI research openly accessible to the world.

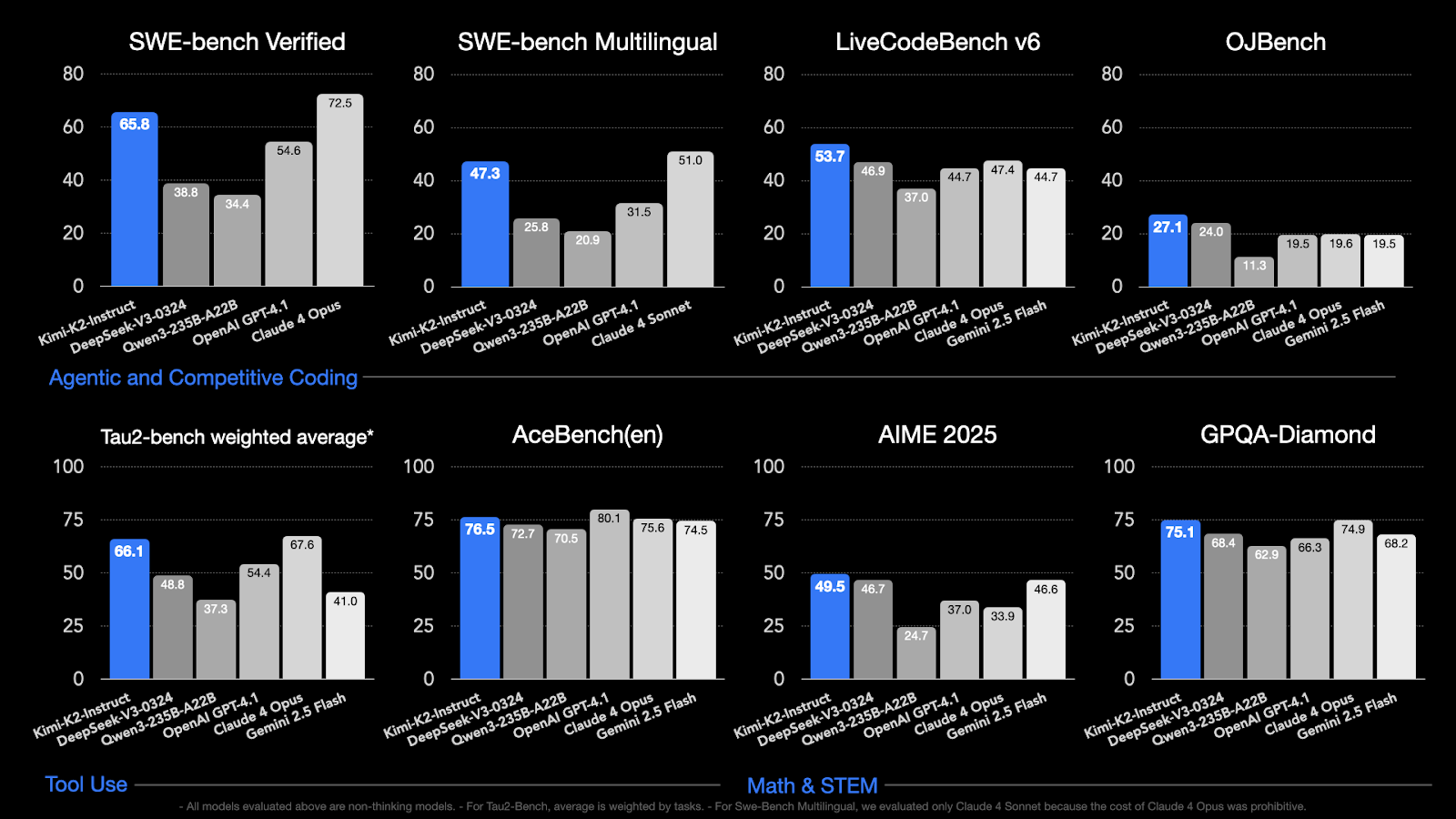

Here's the benchmarks for how it compares with other models in the wild:

Run Kimi K2 on GMI Cloud

You can deploy Kimi K2 immediately through our inference engine by following the instructions here.
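As a rough sketch of what a call to a hosted endpoint can look like, the snippet below builds an OpenAI-style `/chat/completions` request using only the Python standard library. It assumes the endpoint is OpenAI-compatible; the base URL, the model ID `moonshotai/Kimi-K2-Instruct` (the Hugging Face repository name), the sampling defaults, and the `GMI_*` environment variable names are hypothetical placeholders, so take the real values from GMI Cloud's instructions.

```python
import json
import os
import urllib.request

# Hypothetical placeholders: substitute the endpoint and model ID from GMI Cloud's docs.
BASE_URL = os.environ.get("GMI_BASE_URL", "https://YOUR-GMI-ENDPOINT/v1")
MODEL_ID = os.environ.get("GMI_MODEL_ID", "moonshotai/Kimi-K2-Instruct")


def build_chat_request(prompt, api_key, temperature=0.6, max_tokens=512, stream=False):
    """Build (but do not send) an OpenAI-style chat completions POST request.

    The sampling values are illustrative defaults, not documented GMI settings.
    """
    payload = {
        "model": MODEL_ID,
        "messages": [
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": prompt},
        ],
        "temperature": temperature,
        "max_tokens": max_tokens,
        "stream": stream,
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send(request, timeout=60):
    """Send a non-streaming request and return the first choice's text."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    api_key = os.environ.get("GMI_API_KEY")  # hypothetical variable name
    if api_key:
        req = build_chat_request("Summarize MuonClip in two sentences.", api_key=api_key)
        print(send(req))
    else:
        print("Set GMI_API_KEY (and GMI_BASE_URL / GMI_MODEL_ID) to send a real request.")
```

Separating request construction from sending keeps the payload easy to inspect and test before any network call is made.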

GMI Cloud provides the infrastructure, tooling, and support needed to deploy Kimi K2 at scale. Our inference engine is optimized for high token throughput and ease of use, enabling rapid integration into production environments.

Teams using GMI Cloud can:

- Serve Kimi K2 via an optimized, high-throughput inference backend

- Configure models for batch, streaming, or interactive inference

- Integrate with prompt management, RAG pipelines, and eval tooling

- Connect via simple APIs without additional DevOps effort

- Scale with usage-based pricing and full visibility into performance
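For the streaming mode listed above, many OpenAI-compatible servers emit server-sent events: one `data: {json}` line per chunk, terminated by `data: [DONE]`. Assuming GMI Cloud's streaming output follows that convention (an assumption, not something stated here), a minimal parser for the text deltas might look like this:

```python
import json


def iter_stream_text(lines):
    """Yield text deltas from OpenAI-style server-sent-event lines.

    Accepts str or bytes lines. Event lines look like 'data: {...}' and the
    stream ends with 'data: [DONE]'; blank keep-alive and comment lines are skipped.
    """
    for raw in lines:
        line = raw.decode("utf-8") if isinstance(raw, bytes) else raw
        line = line.strip()
        if not line.startswith("data:"):
            continue  # blank lines, ': comment' lines, other SSE fields
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            break
        chunk = json.loads(data)
        for choice in chunk.get("choices", []):
            content = choice.get("delta", {}).get("content")
            if content:  # role-only or empty deltas carry no text
                yield content


if __name__ == "__main__":
    sample = [
        'data: {"choices": [{"delta": {"role": "assistant"}}]}',
        'data: {"choices": [{"delta": {"content": "Hello"}}]}',
        "",
        'data: {"choices": [{"delta": {"content": ", world"}}]}',
        "data: [DONE]",
    ]
    print("".join(iter_stream_text(sample)))  # prints "Hello, world"
```

Against a live streaming response opened with `urllib.request.urlopen`, the response object can be passed in directly, since iterating it yields raw byte lines.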

At GMI Cloud, we’re excited to offer access to Kimi K2 because it unlocks a new level of long-context reasoning for teams building research assistants, legal AI, financial analysis tools, and other high-memory applications. We see Kimi K2 as a core model for anyone looking to build intelligent systems that need to reason over vast, interrelated information.


Get Started

Kimi K2 is now available on GMI Cloud for research and production use. Whether you're building AI agents, enterprise workflows, or RAG applications, GMI Cloud makes it easy to deploy and scale long-context models like Kimi K2.

Explore Kimi K2 on GMI Cloud Playground

About GMI Cloud

GMI Cloud is a high-performance AI cloud platform purpose-built for running modern inference and training workloads. With the GMI Cloud Inference Engine, users can access, evaluate, and deploy top open-source models with production-ready performance.

Explore more hosted models → GMI Model Library

Frequently Asked Questions

1. What is Kimi K2-Instruct and what makes it unique?

Kimi K2-Instruct is a 1-trillion-parameter Mixture-of-Experts model developed by Moonshot AI, with 32 billion active parameters and a 128K-token context window. It is optimized for agentic use cases and delivers strong performance across reasoning, coding, and frontier knowledge tasks while remaining efficient at inference time.

2. Why is Kimi K2 particularly well-suited for agentic and long-context applications?

Kimi K2 is trained with the MuonClip Optimizer and designed to reason over large amounts of interconnected information. Its long context window and strong reasoning benchmarks make it well suited for AI agents, research assistants, legal analysis tools, financial modeling, and other applications that require deep context retention and multi-step reasoning.
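When feeding many documents into a long context window, it helps to budget tokens before sending the request. The sketch below greedily packs relevance-ranked documents under an assumed 128,000-token window while reserving room for the answer. The 4-characters-per-token estimate and the `RESERVED_FOR_OUTPUT` value are hypothetical heuristics; exact counts require Kimi K2's own tokenizer, and the served limit should be checked against the deployment.

```python
import math

CONTEXT_TOKENS = 128_000      # assumed window size; confirm the deployed model's limit
RESERVED_FOR_OUTPUT = 4_000   # hypothetical budget left for the model's answer


def approx_tokens(text, chars_per_token=4.0):
    """Rough token estimate from character count (English-text heuristic)."""
    return math.ceil(len(text) / chars_per_token)


def pack_documents(question, docs, context_tokens=CONTEXT_TOKENS,
                   reserve=RESERVED_FOR_OUTPUT):
    """Greedily select documents (assumed pre-sorted by relevance) that fit the budget.

    Returns (selected_docs, tokens_used). A document that does not fit is
    skipped rather than ending the loop, so smaller later documents can still fit.
    """
    budget = context_tokens - reserve - approx_tokens(question)
    selected, used = [], 0
    for doc in docs:
        cost = approx_tokens(doc)
        if used + cost <= budget:
            selected.append(doc)
            used += cost
    return selected, used


if __name__ == "__main__":
    contracts = ["clause " * 20_000, "appendix " * 50_000, "memo " * 2_000]
    chosen, used = pack_documents("Which clauses conflict?", contracts)
    print(f"packed {len(chosen)} of {len(contracts)} documents, ~{used} tokens")
```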

3. How does Kimi K2 perform on key benchmarks?

According to Moonshot AI's published results, Kimi K2 scores 65.8% pass@1 on SWE-bench Verified and 47.3% on SWE-bench Multilingual. These results place it in the same performance band as leading proprietary models such as Claude 4 and GPT-4.1 on agentic coding and reasoning tasks.
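pass@1 is simply the fraction of tasks solved when the model gets one attempt per task. When several samples are drawn per task, the unbiased pass@k estimator popularized by OpenAI's Codex paper generalizes it, and with k = 1 it reduces to c / n. The sketch below implements that formula; it is illustrative and does not describe Moonshot AI's evaluation harness.

```python
from math import comb


def pass_at_k(n, c, k):
    """Unbiased pass@k for one task: n samples drawn, c of them correct.

    pass@k = 1 - C(n - c, k) / C(n, k), the probability that a random
    size-k subset of the samples contains at least one correct one.
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        return 1.0  # every size-k subset must include a correct sample
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_score(results, k=1):
    """Average pass@k over tasks given as (n_samples, n_correct) pairs."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


if __name__ == "__main__":
    # One attempt per task: pass@1 is just the share of tasks solved.
    single_attempt = [(1, 1), (1, 0), (1, 1), (1, 1)]
    print(benchmark_score(single_attempt))  # 0.75
```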

4. What does “open weights” mean for teams using Kimi K2?

Kimi K2 is released under a modified MIT license that allows commercial use, fine-tuning, and self-hosting. This gives teams the flexibility to adapt the model to their own workflows, deploy it in production, and build proprietary applications with relatively few licensing constraints.

5. How does GMI Cloud support deploying Kimi K2 at scale?

GMI Cloud’s inference engine is optimized for high token throughput and ease of integration. Teams can deploy Kimi K2 immediately, configure it for batch, streaming, or interactive inference, integrate it with RAG pipelines and evaluation tools, and scale usage with transparent, usage-based pricing and full performance visibility.

