Model as a Service (MaaS): Building AI products without rebuilding your stack

March 24, 2026

Model as a Service (MaaS) transforms how teams build with AI by turning models into modular, interchangeable components that can be integrated, scaled and optimized without infrastructure complexity.

Key things to know:

- Why model integration – not model quality – is the real bottleneck in production AI systems

- How traditional setups create friction when swapping, scaling or upgrading models

- What MaaS is and how it abstracts models behind stable APIs for easier access and orchestration

- How MaaS enables faster iteration by removing deployment, provisioning and integration overhead

- Why model flexibility improves output quality by allowing teams to mix, match and upgrade models continuously

- The role of intelligent routing in reducing costs by using the right model for each task

- How MaaS supports multimodal workflows across image, video, audio and text generation

- Why decoupling models from application logic enables long-term system stability and evolution

- How MaaS turns AI from isolated experiments into scalable, maintainable production systems

- Why combining MaaS with workflow-based creation unlocks speed, quality and cost efficiency at scale

As generative AI becomes part of real products, teams are discovering a new bottleneck that has nothing to do with model quality. The challenge isn’t finding powerful models – it’s integrating them, swapping them, scaling them and maintaining them over time without turning every experiment into a DevOps project.

This is where Model as a Service (MaaS) changes the equation. Instead of treating models as tightly coupled infrastructure components, MaaS turns them into modular building blocks that can be accessed, replaced and orchestrated through a consistent interface. For teams building with AI – especially across image, video, audio and multimodal workflows – this shift unlocks speed, flexibility and cost efficiency at once.

Why traditional model integration slows teams down

Most AI teams start with a single model. They wire it into an application, tune prompts, optimize inference and ship. But momentum stalls as soon as requirements evolve.

A better model appears. A different modality is needed. Performance changes under load. Suddenly, swapping models means reconfiguring infrastructure, rewriting integrations, retraining teams and revalidating deployments. What should be a creative or product decision becomes a systems problem.

This friction compounds quickly in generative workflows, where multiple models often interact. Image generation feeds video pipelines. Audio synthesis synchronizes with visuals. Embeddings support retrieval and personalization. When every model change ripples through the stack, iteration slows.

What Model as a Service actually provides

Model as a Service abstracts model access behind a stable API. Teams don’t manage deployments, environments or dependencies for each model. Instead, they pull models on demand, route workloads dynamically, and swap implementations without touching the surrounding application logic.

From a workflow perspective, this means creators and builders can focus on what they want to generate, not how the model is hosted. The same pipeline can run with different models depending on cost, quality, latency or availability – without refactoring the system.

This modularity is the foundation for efficient AI creation.
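To make the idea concrete, here is a minimal sketch of what "a stable API with swappable implementations" can look like. It is an illustration, not GMI Cloud's actual API: `ModelGateway`, `render_banner` and the two stand-in models are hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Assumption: a "backend" is any callable that turns a prompt into an output.
# In a real system this would wrap a network call to a hosted model.
Backend = Callable[[str], str]


@dataclass
class ModelGateway:
    """Hypothetical stable entry point: callers only ever use generate()."""
    backends: Dict[str, Backend]
    active: str

    def generate(self, prompt: str) -> str:
        # Dispatch to whichever backend is currently active.
        return self.backends[self.active](prompt)

    def swap(self, name: str) -> None:
        # Change the implementation without touching any caller.
        if name not in self.backends:
            raise KeyError(f"unknown model: {name}")
        self.active = name


# Stand-ins for two hosted models (hypothetical).
def model_a(prompt: str) -> str:
    return f"[model-a] {prompt}"


def model_b(prompt: str) -> str:
    return f"[model-b] {prompt}"


def render_banner(gateway: ModelGateway, text: str) -> str:
    # Application logic: knows about the gateway, not about any model.
    return gateway.generate(f"banner: {text}")


if __name__ == "__main__":
    gw = ModelGateway({"model-a": model_a, "model-b": model_b}, active="model-a")
    print(render_banner(gw, "spring sale"))
    gw.swap("model-b")  # the only line that changes when the model changes
    print(render_banner(gw, "spring sale"))
```

The point of the sketch is that `render_banner` never changes. Swapping a model is a configuration change on the gateway, not a refactor of the application.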

Faster iteration without technical drag

One of the most immediate benefits of MaaS is speed. Teams can test new models the moment they become available. There’s no provisioning phase, no redeployment and no environment drift.

This directly impacts creative velocity. Designers can compare outputs across models. Product teams can benchmark quality and cost tradeoffs. Engineers can route traffic based on performance metrics rather than assumptions.

The result is faster iteration with less overhead – quick without being reckless.
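A side-by-side comparison like the one described above could be sketched roughly as follows. The `compare` helper and the stand-in models are hypothetical; a real harness would call hosted models and would likely record cost and quality scores as well as latency.

```python
import time
from typing import Callable, Dict

# Assumption: each model is exposed as a callable from prompt to output.
Model = Callable[[str], str]


def compare(models: Dict[str, Model], prompt: str) -> Dict[str, dict]:
    """Run the same prompt through every model and record output and latency."""
    results: Dict[str, dict] = {}
    for name, call in models.items():
        start = time.perf_counter()
        output = call(prompt)
        elapsed = time.perf_counter() - start
        results[name] = {"output": output, "latency_s": elapsed}
    return results


# Hypothetical stand-ins for two candidate models.
CANDIDATES: Dict[str, Model] = {
    "stylized": lambda p: f"stylized render of {p}",
    "realistic": lambda p: f"realistic render of {p}",
}

if __name__ == "__main__":
    for name, row in compare(CANDIDATES, "a lighthouse at dusk").items():
        print(f"{name}: {row['output']} ({row['latency_s']:.6f}s)")
```

Because every model sits behind the same call shape, adding a new candidate to the comparison is one more dictionary entry.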

Better outcomes through model flexibility

No single model is optimal for every task. Some excel at realism, others at style. Some are optimized for speed, others for fidelity. In traditional setups, teams are often locked into early choices because switching is expensive.

MaaS breaks that lock-in. Models become interchangeable components within a workflow. Teams can combine them, chain them or replace them as needs evolve.

This flexibility improves quality over time. Instead of compromising early, teams can continuously upgrade outputs without rewriting systems – good without fragility.

Lower costs through reuse and routing

Cost optimization in AI isn’t just about cheaper GPUs. It’s about using the right model for the right job.

With MaaS, teams can route workloads intelligently. High-fidelity models handle hero assets. Faster, lower-cost models handle drafts, previews or background tasks. Multimodal workflows can mix and match models based on stage, not guesswork.

Because infrastructure and orchestration are handled centrally, teams avoid overprovisioning and stop paying for idle resources. That’s how MaaS makes AI creation cheap in practice, not just in theory.
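One simple way to express "the right model for the right job" is a rule that picks the cheapest model meeting a stage's quality bar. The sketch below assumes a small catalog with made-up model names, costs and quality scores. None of these figures come from a real price list.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ModelOption:
    name: str
    cost_per_call: float  # illustrative units, not real pricing
    quality: int          # 1 (rough) to 5 (hero quality), made-up scale


# Hypothetical catalog.
CATALOG = [
    ModelOption("fast-draft", 0.002, 2),
    ModelOption("balanced", 0.010, 3),
    ModelOption("high-fidelity", 0.080, 5),
]

# Assumed minimum quality required at each workflow stage.
MIN_QUALITY = {"preview": 1, "draft": 2, "review": 3, "hero": 5}


def route(stage: str) -> ModelOption:
    """Return the cheapest model whose quality meets the stage's minimum."""
    required = MIN_QUALITY[stage]
    eligible = [m for m in CATALOG if m.quality >= required]
    if not eligible:
        raise LookupError(f"no model satisfies stage {stage!r}")
    return min(eligible, key=lambda m: m.cost_per_call)


def estimated_cost(stages: List[str]) -> float:
    """Total cost of a batch when each job is routed by its stage."""
    return sum(route(stage).cost_per_call for stage in stages)


if __name__ == "__main__":
    batch = ["draft"] * 20 + ["hero"]
    print(route("draft").name, route("hero").name)
    print(round(estimated_cost(batch), 3))
```

With these illustrative numbers, twenty drafts plus one hero asset cost about 0.12 units when routed by stage, against about 1.68 if every job went to the high-fidelity model. The exact ratio depends entirely on the assumed prices. The mechanism is what matters.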

MaaS as the backbone of AI workflows

Model as a Service becomes even more powerful when paired with workflow-based creation. Instead of embedding model logic directly into applications, workflows reference models abstractly.

This means a single workflow can outlive any individual model. As better models emerge, the workflow stays intact. Only the underlying components change.

For creators, this feels like upgrading tools without relearning the process. For enterprises, it means long-term stability without stagnation.
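As a rough sketch of a workflow that "references models abstractly": the steps below name aliases rather than concrete models, and a separate alias table decides which model each alias points to. All names here (`image.default`, `image-model-v2`, `run_workflow`) are hypothetical.

```python
from typing import Callable, Dict, List

# Alias -> concrete model name. This table is the only thing that changes
# when a better model arrives (assumed layout, for illustration only).
ALIASES: Dict[str, str] = {
    "image.default": "image-model-v1",
    "video.default": "video-model-v1",
}

# Concrete model name -> callable. Stand-ins for hosted models.
MODELS: Dict[str, Callable[[str], str]] = {
    "image-model-v1": lambda x: f"image-v1({x})",
    "image-model-v2": lambda x: f"image-v2({x})",
    "video-model-v1": lambda x: f"video-v1({x})",
}

# The workflow itself: an ordered list of aliases, never concrete models.
WORKFLOW: List[str] = ["image.default", "video.default"]


def run_workflow(
    steps: List[str],
    payload: str,
    aliases: Dict[str, str] = ALIASES,
    models: Dict[str, Callable[[str], str]] = MODELS,
) -> str:
    """Resolve each alias at run time and pipe the payload through the steps."""
    for alias in steps:
        payload = models[aliases[alias]](payload)
    return payload


if __name__ == "__main__":
    print(run_workflow(WORKFLOW, "sunset"))
    upgraded = {**ALIASES, "image.default": "image-model-v2"}
    print(run_workflow(WORKFLOW, "sunset", aliases=upgraded))
```

`WORKFLOW` is identical in both runs. Only the alias table differs, which is what lets a workflow outlive the models it started with.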

MaaS enables continuous evolution, not one-time integration

One of the most underappreciated advantages of Model as a Service is how it changes long-term system evolution. In traditional deployments, model upgrades are disruptive events. They require coordination across engineering, infrastructure and product teams, often delaying adoption of better models even when they are clearly superior. MaaS removes this friction by decoupling model selection from application logic.

As new models emerge, teams can introduce them gradually, test them side by side, and shift traffic dynamically without destabilizing production systems. This enables continuous improvement rather than periodic rewrites – a critical capability as generative AI models continue to evolve faster than most software stacks.
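Gradual traffic shifting can be sketched with a deterministic percentage split. This is one common technique, not necessarily how any particular MaaS platform implements it; `bucket`, `choose_model` and the model names are assumptions made for the example.

```python
import hashlib


def bucket(request_id: str) -> int:
    """Map a request (or user) ID to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def choose_model(
    request_id: str,
    candidate_percent: int,
    stable: str = "model-stable",        # hypothetical current model
    candidate: str = "model-candidate",  # hypothetical new model
) -> str:
    """Send roughly candidate_percent of traffic to the candidate model."""
    if not 0 <= candidate_percent <= 100:
        raise ValueError("candidate_percent must be between 0 and 100")
    return candidate if bucket(request_id) < candidate_percent else stable


if __name__ == "__main__":
    ids = [f"req-{i}" for i in range(1000)]
    for percent in (0, 10, 50, 100):
        share = sum(choose_model(i, percent) == "model-candidate" for i in ids)
        print(f"{percent}% target -> {share} of {len(ids)} requests")
```

Hashing the ID, rather than picking at random, keeps each request's assignment stable: anything routed to the candidate at 10% stays there at 50%. That makes side-by-side comparisons cleaner, and rolling back is just a matter of setting the percentage to zero.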

How GMI Cloud approaches Model as a Service

GMI Cloud offers MaaS as part of a broader creation-first platform. Instead of forcing teams to wire models manually or manage deployments, GMI Cloud provides API-based access to a growing library of models that can be dropped into workflows instantly.

Models can be swapped in and out without rewriting application logic. Workflows remain stable even as underlying capabilities evolve. This approach eliminates the “wonky DevOps” phase that often slows AI projects to a crawl.

For teams using GMI Studio, MaaS becomes the connective tissue between creative intent and production execution.

Creation velocity as a business advantage

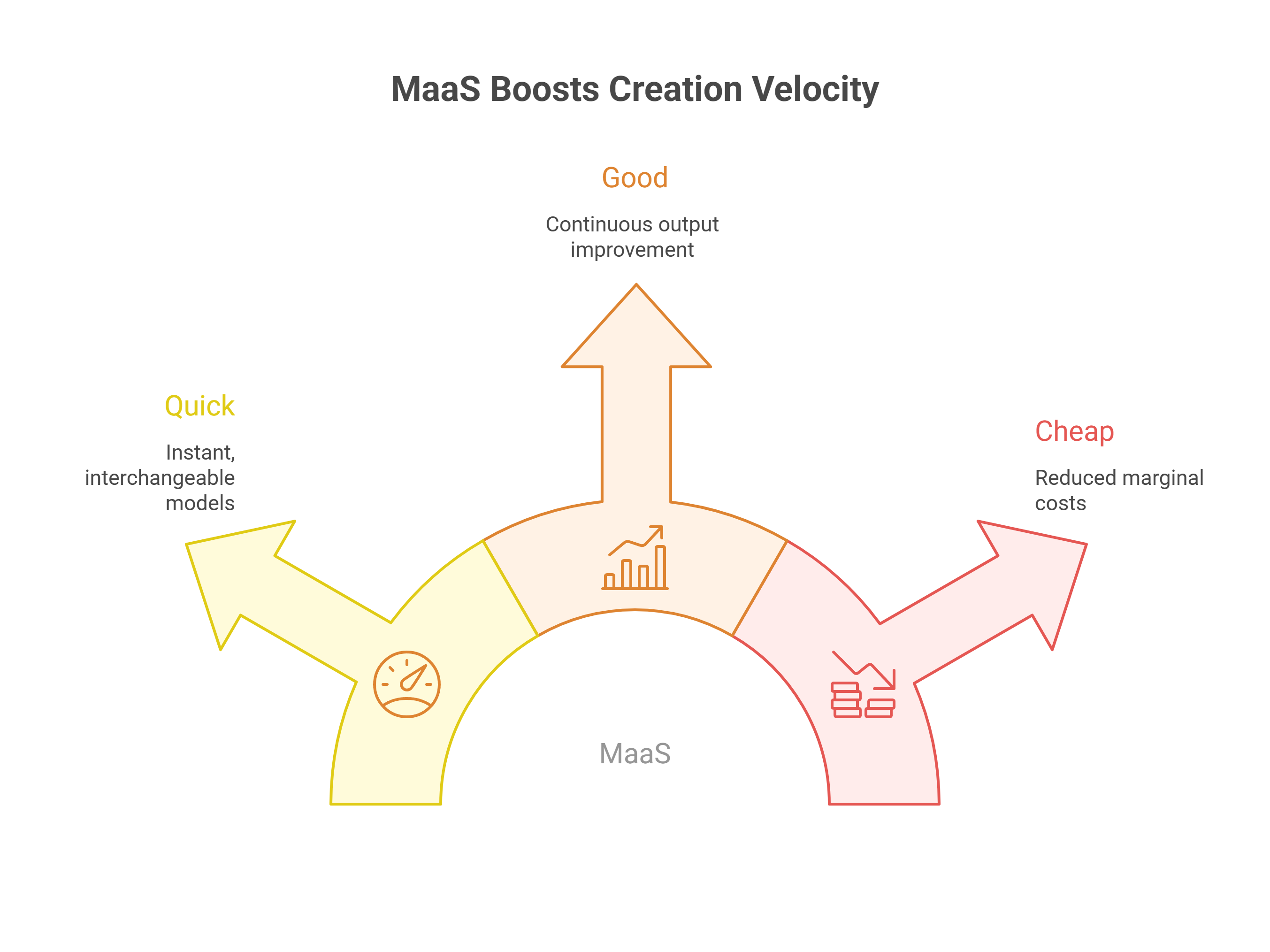

The old triangle of “quick, good, cheap – pick two” is breaking down in generative AI. Structured workflows combined with MaaS make it possible to approach all three simultaneously.

- Quick, because models are available instantly and interchangeable

- Good, because teams can continuously improve outputs without rework

- Cheap, because reuse and routing reduce marginal costs

This is the real business impact of MaaS. It’s not just a technical convenience – it’s a multiplier for creative and operational efficiency.

From experiments to systems

Many teams treat AI as a series of experiments. MaaS enables a different mindset: systems that evolve.

Instead of rebuilding pipelines every time a model changes, teams build durable workflows that absorb change gracefully. That’s how AI creation scales – not by freezing decisions, but by making change inexpensive.

Why MaaS matters now

As image, video and audio generation converge, the number of models involved in production workflows will only increase. Managing them manually is not sustainable.

Model as a Service provides the missing layer between raw model innovation and real-world creation. It allows teams to move faster, produce better results, and control costs – without becoming infrastructure experts.

For creators and enterprises alike, MaaS isn’t just about accessing models. It’s about unlocking velocity at scale.

