AI Developer Events

Join engineers and founders building production AI systems on NVIDIA GPU infrastructure.

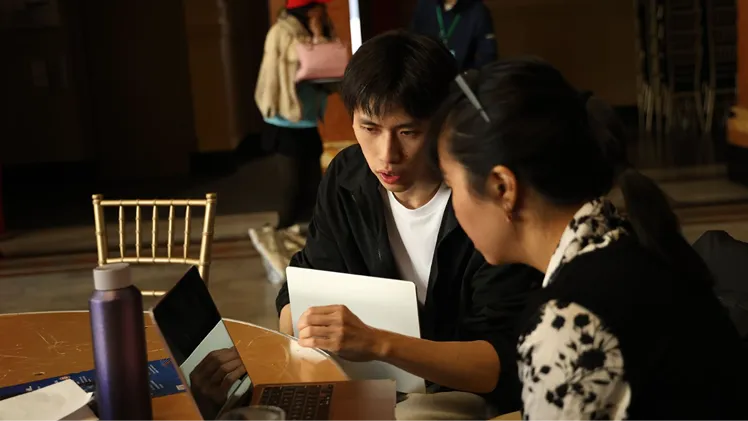

A Collaborative Hub for Production AI Developers

The GMI Developer Community is where engineers and founders running AI in production compare notes. Join technical sessions, hands-on workshops, and discussions on AI inference, GPU optimization, and real-world deployment.

Technical Support

Get your questions answered directly by the GMI team and experienced AI builders who have faced similar infrastructure challenges.

Exclusive Access

Be the first to know about new model integrations, infrastructure updates, and limited-access programs.

Knowledge Sharing

Participate in technical discussions on topics ranging from GPU selection for fine-tuning to deploying multi-modal models at scale.

Join the Community

AI Events

SCALE at GMI – Accelerator Kickoff Day

GMI @ GTC – Exhibition Booth & Live Demos

Client Stories x Builder Salon – GTC After-Hours Event

Next Generation Unicorns – AI Infrastructure & Cultural Power Panel

Signal '26 – GMI Studio Launch Summit

Hackathon at Signal '26 – Build. Ship. Show.

Accelerate Your Skills

Participate in expert-led sessions covering AI inference, GPU performance optimization, and production deployment strategies.

Hackathons

Hands-on AI development challenges focused on real-world inference use cases.

Masterclasses

Deep technical sessions on GPU optimization and production AI deployment.

Product Launches

Explore new GPU platforms and AI infrastructure capabilities.

Built for Production AI

Reliable NVIDIA GPU infrastructure designed for scalable AI inference and deployment.

Production-Ready Infrastructure

99.99% uptime SLA on core services and access to enterprise-grade GPUs.

Deploy with confidence, knowing your application is built on a reliable foundation.
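To put the 99.99% figure in concrete terms, the short sketch below converts an uptime percentage into the maximum downtime it allows. The 30-day and 365-day windows and the function name `allowed_downtime_minutes` are illustrative assumptions; the actual measurement window, and which services count as core, are defined by the SLA terms themselves.

```python
def allowed_downtime_minutes(uptime_percent: float, period_days: float) -> float:
    """Return the maximum downtime, in minutes, that an uptime percentage permits."""
    period_minutes = period_days * 24 * 60
    return period_minutes * (1 - uptime_percent / 100)


# Assumed measurement windows (not taken from the SLA): a 30-day month and a 365-day year.
monthly = allowed_downtime_minutes(99.99, 30)
yearly = allowed_downtime_minutes(99.99, 365)

print(f"30-day month: {monthly:.2f} minutes")   # 4.32 minutes
print(f"365-day year: {yearly:.2f} minutes")    # 52.56 minutes
```

Under those assumptions, 99.99% uptime leaves room for roughly four minutes of downtime per month, or a little under an hour per year.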

Scalability & Performance

Scale from a single GPU to thousands in minutes with our auto-scaling inference endpoints.

Handle viral traffic spikes and large batch processing without manual intervention.

Cost-Efficiency by Design

Our pricing models can reduce inference costs by up to 70% compared to major cloud providers.

Allocate more of your budget to R&D and growth, not exorbitant infrastructure bills.

Developer-Centric Tools

A clean API, intuitive console, and integrations with tools like Terraform and Kubernetes.

Spend less time on infrastructure management and more time building your product.

Ready to Build on GMI?

Whether you're exploring a new AI concept, building your first product, or scaling a production system to millions of users, there's a place for you in the GMI ecosystem. Get started today with free credits and see why the next generation of AI companies is building on GMI.