DeepSeek V4 launched today with 1.6 trillion parameters, a 1 million token context window, and the top score on LiveCodeBench.

A year ago, DeepSeek R1 shook the entire AI industry. It matched OpenAI o1's reasoning performance at a fraction of the cost, went viral overnight, and sparked a conversation about whether expensive, closed-source models were even worth it anymore.

Today, DeepSeek did it again.

DeepSeek V4 launched on April 24, 2026, and it is not an incremental update. It is a generational leap, the kind of release that makes you rethink what you expect from an open-source model.

Here is everything you need to know, plus what we actually built with it in our testing so far.

What DeepSeek V4 Actually Is

DeepSeek shipped two models today: V4-Pro and V4-Flash. Both are open-source under the MIT license. Both have a 1 million token context window by default, not as a premium add-on, not with a surcharge, just included.

V4-Pro is the flagship. It runs 1.6 trillion total parameters with 49 billion active per token, trained on 33 trillion tokens. V4-Flash is the leaner sibling at 284 billion total parameters, priced at $0.14 per million input tokens.

| | V4-Pro | V4-Flash |
|---|---|---|
| Total Parameters | 1.6 trillion | 284 billion |
| Active Parameters per Token | 49 billion | 13 billion |
| Context Window | 1 million tokens | 1 million tokens |
| Input Pricing (cache miss) | $1.74 per 1M tokens | $0.14 per 1M tokens |
| Output Pricing | $3.48 per 1M tokens | $0.28 per 1M tokens |
| Best For | Deep reasoning, repo-level analysis, security audits | Fast generation, CI pipelines, high-volume APIs |
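To make the pricing concrete, here is a minimal sketch that turns the per-million-token rates from the table into a per-request cost. The function name and the example workload (a full 1M-token prompt with a 10K-token answer) are our own illustrative assumptions, and the calculation ignores any cache-hit discount.

```python
# Prices in USD per 1M tokens, copied from the table above (cache-miss input rates).
PRICING = {
    "V4-Pro": {"input": 1.74, "output": 3.48},
    "V4-Flash": {"input": 0.14, "output": 0.28},
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost of one request, ignoring cache hits."""
    rates = PRICING[model]
    total = input_tokens * rates["input"] + output_tokens * rates["output"]
    return total / 1_000_000


# Hypothetical workload: a full 1M-token prompt with a 10K-token answer.
for name in PRICING:
    print(f"{name}: ${estimate_cost(name, 1_000_000, 10_000):.4f}")
```

At these rates, input tokens dominate the bill for long-context calls, so the choice between Pro and Flash matters most when you are filling the window.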

The Superpowers of This Model

This is where it gets interesting. V4 supports three reasoning modes: Non-think for speed, Think High for moderate reasoning, and Think Max for full deep reasoning effort. Think Max is where the model becomes something else entirely.

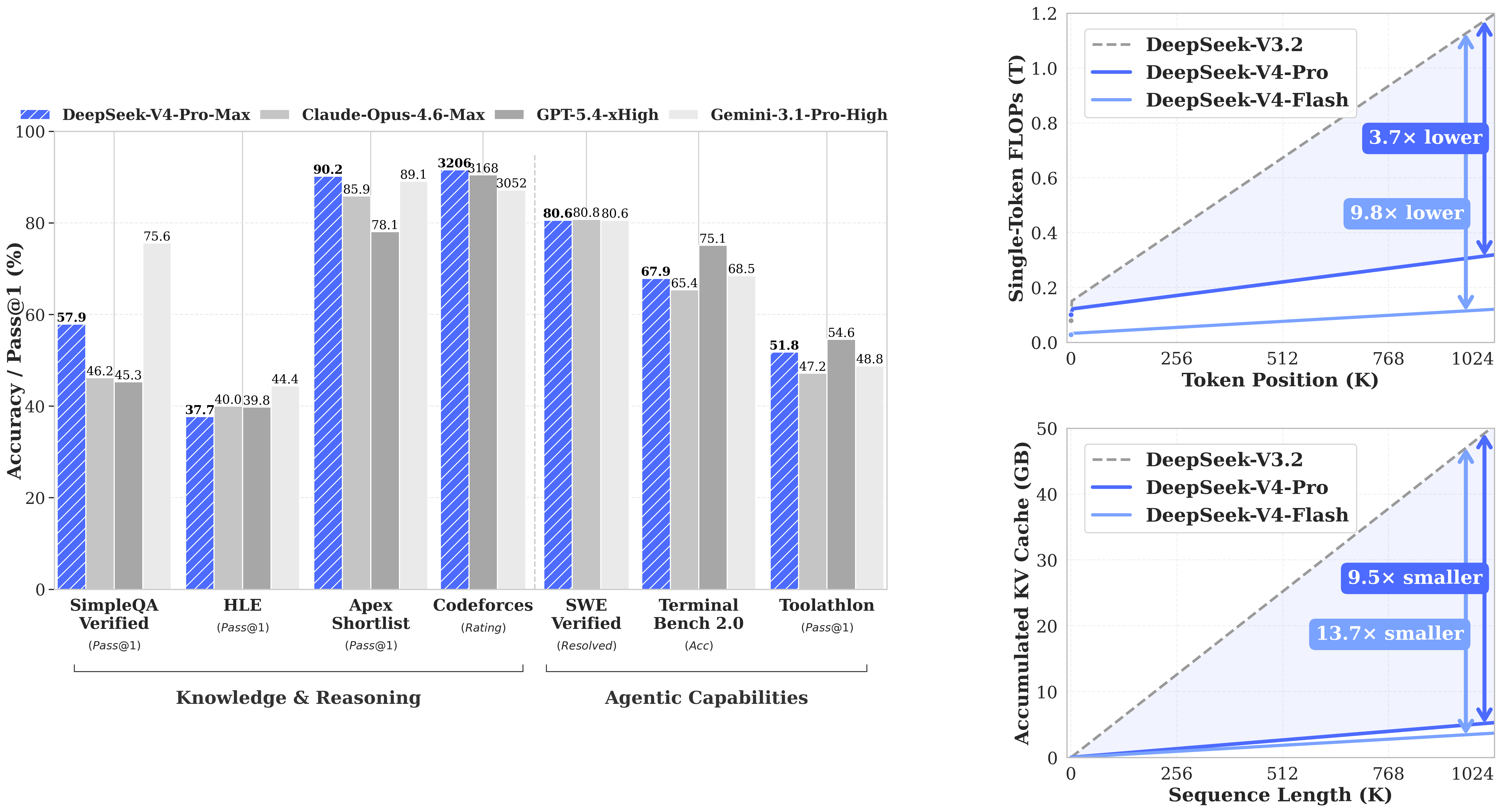

On LiveCodeBench, V4-Pro-Max scores 93.5 Pass@1, the highest coding score of any model evaluated, open-source or not. On SWE-bench Verified, which tests real software engineering tasks end-to-end, it scores 80.6, within a single point of Claude Opus 4.6. These are not toy benchmarks. These are tests of whether a model can actually read a codebase, understand a bug report, and ship a fix.

| Model | LiveCodeBench Pass@1 |
|---|---|
| Opus 4.6 Max | 88.8 |
| Gemini 3.1 Pro High | 91.7 |
| K2.6 Thinking | 89.6 |
| DeepSeek V4-Pro Max | 93.5 (highest) |

The 1 million token context window is the real superpower. It means you can paste an entire codebase into a single API call, not a summary, not chunks, the actual source files, and the model reasons across all of it at once. No retrieval pipeline needed. No stitching results together manually. One call, full picture.

The efficiency behind this is significant too. Despite being a much larger model than its predecessor, V4-Pro requires only 27% of the compute per token compared to V3.2, and a fraction of the memory. The architecture is specifically designed to hold massive context without the cost spiraling. That is what makes the 1 million token window practical, not just theoretical.

DeepSeek also made one agent-specific change that matters enormously. V4 now preserves its reasoning across the full conversation even when tools are being called between messages. In previous versions the model would lose its train of thought every time a new message arrived. For complex multi-step coding tasks, that was a real problem. V4 solves it.

What We Built (Three Demos)

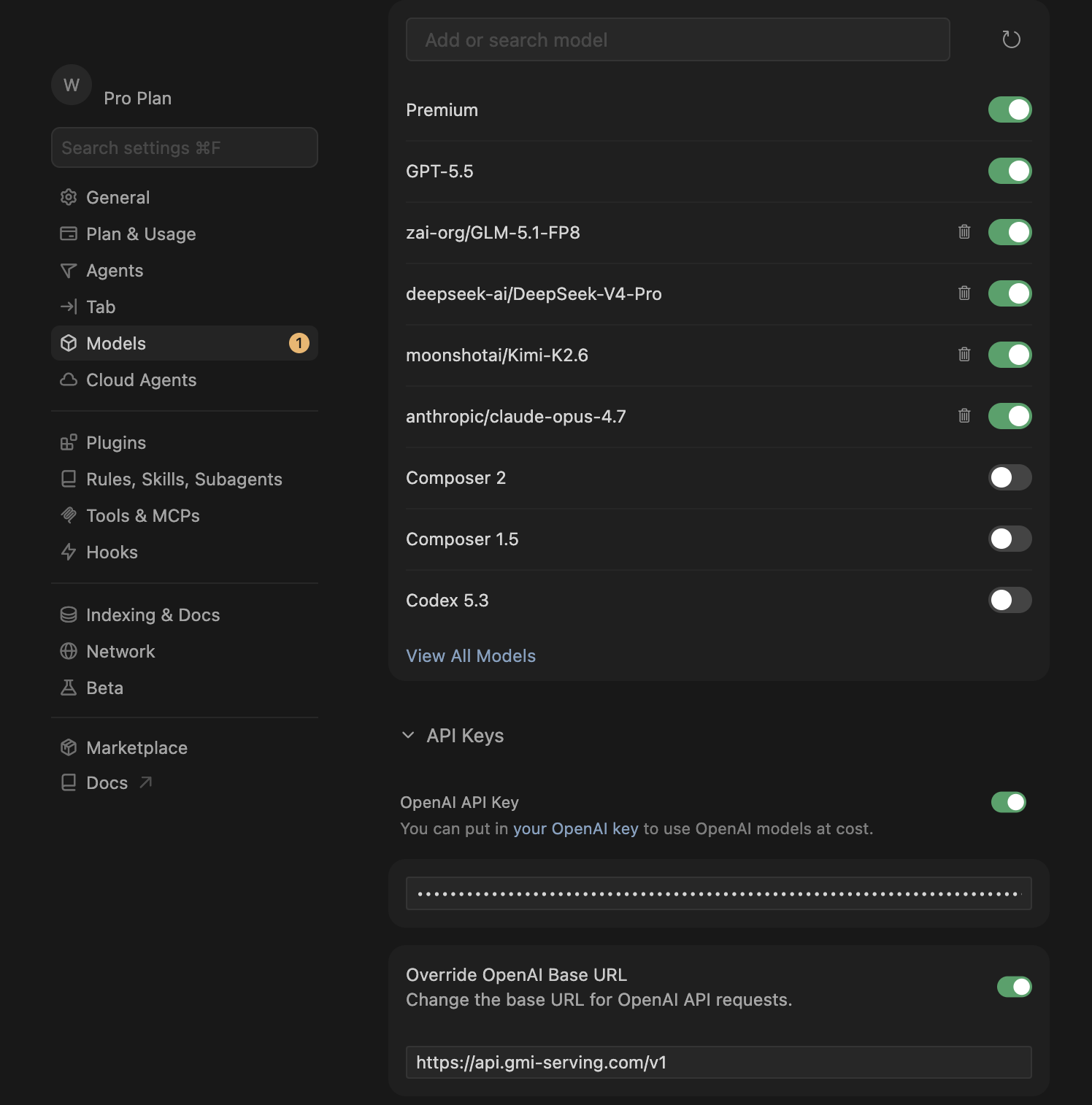

We set up DeepSeek V4 on GMI Cloud using the OpenAI-compatible endpoint at console.gmicloud.ai. Setup took under five minutes. We plugged the GMI API key into Cursor as a custom model using the model ID deepseek-ai/DeepSeek-V4-Pro and got to work.

Demo 1: A simple calculator. Clean, functional, done in seconds. It confirmed the setup worked and the model produced runnable code without hand-holding.

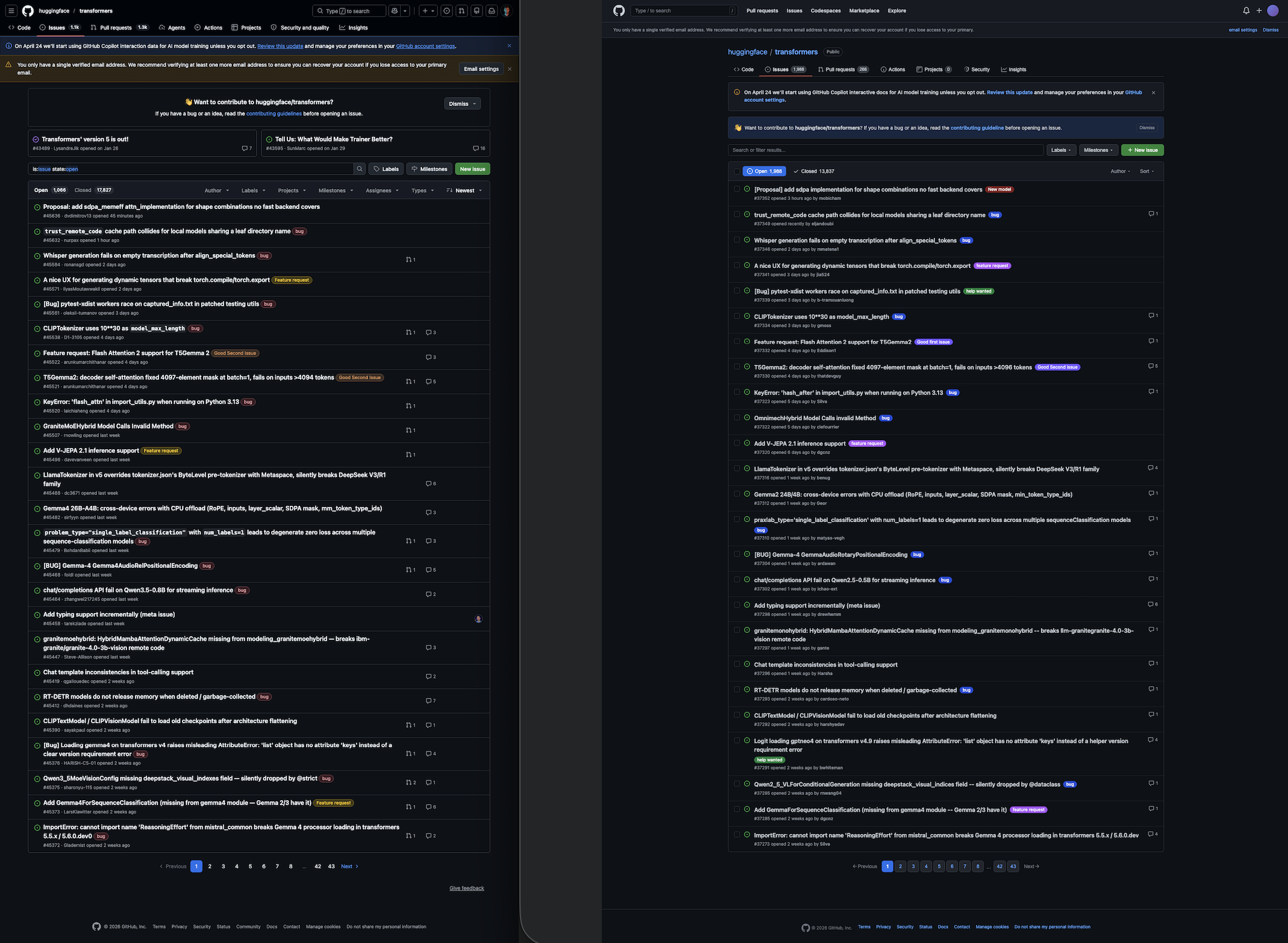

Demo 2: Clone a GitHub Issues page from a URL. We pasted a GitHub Issues board URL and typed one prompt: "Build a functional clone of this UI as a single HTML file with working interactions, matching the visual style exactly." No screenshot. No design file. No spec. V4 read the URL, understood the layout, and produced a working HTML clone that rendered correctly on the first run. Dark sidebar, issue list, status badges, filters, all there.

Demo 3: Reconstruct a Slack channel from a screenshot. We dropped a screenshot of a Slack channel view into the session. V4-Pro rebuilt the sidebar, message feed, member list, and input bar as a working frontend in one pass.

These are not cherry-picked edge cases. They are things any developer with a GMI API key can do right now, today.

The Use Case That Changes How Teams Work

Paste your entire legacy codebase into a single V4-Pro API call and ask it to produce full architecture documentation, identify cross-file bugs, and generate a modern scaffold.

Before V4 this was not possible with any open-source model. V3 had a 128K context window. Even V3.1, which GMI Cloud integrated in August 2025, topped out at 128K. V4's 1 million token window changes that completely. Load entire repositories, not summaries, not chunks, the actual source, and ask V4 to reason across all of it. Dependency impact analysis, cross-module bug detection, onboarding documentation, security audits. All in one call.
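As a rough sketch of what "load the entire repository" can look like in practice, the snippet below concatenates source files into one prompt and checks whether it plausibly fits the 1 million token window. The helper names, the file-suffix list, the `### FILE:` separator, and the 4-characters-per-token estimate are all our own assumptions; the model's real tokenizer will give different counts.

```python
from pathlib import Path

CONTEXT_LIMIT_TOKENS = 1_000_000  # context window stated in this article
CHARS_PER_TOKEN = 4  # rough heuristic (assumption); real tokenizers vary
SOURCE_SUFFIXES = {".py", ".js", ".ts", ".go", ".java", ".md"}  # illustrative


def pack_repo(root: str) -> str:
    """Concatenate source files under root, each prefixed with its relative path."""
    root_path = Path(root)
    parts = []
    for path in sorted(root_path.rglob("*")):
        if path.is_file() and path.suffix in SOURCE_SUFFIXES:
            text = path.read_text(encoding="utf-8", errors="replace")
            rel = path.relative_to(root_path).as_posix()
            parts.append(f"### FILE: {rel}\n{text}")
    return "\n\n".join(parts)


def estimate_tokens(text: str) -> int:
    """Approximate the token count from the character count."""
    return len(text) // CHARS_PER_TOKEN


def fits_in_context(text: str, reserve_for_output: int = 50_000) -> bool:
    """Check whether the packed prompt leaves room for the model's answer."""
    return estimate_tokens(text) + reserve_for_output <= CONTEXT_LIMIT_TOKENS
```

Keeping the relative path in front of each file gives the model something to cite when it reports a cross-file bug. If `fits_in_context` returns `False`, trimming generated files and vendored dependencies is usually the first thing to try.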

How to Get Started on GMI Cloud

GMI Cloud's inference engine runs on NVIDIA H100 and H200 infrastructure. The endpoint is OpenAI-compatible, so Cursor, Continue.dev, Cline, and direct Python API calls all work immediately.

- Sign in at console.gmicloud.ai

- Go to API Key Management, create a key with Inference scope

- In Cursor: Settings, add a custom model, set base URL to https://api.gmi-serving.com/v1, paste your GMI key, set model to deepseek-ai/DeepSeek-V4-Pro

- Start building
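For a direct Python call instead of Cursor, the same base URL and model ID can be used in a plain HTTP request. This is a minimal sketch using only the standard library. It assumes the endpoint follows the usual OpenAI convention of a `/chat/completions` path and response shape, and `GMI_API_KEY` is a hypothetical environment variable name we chose for the key.

```python
import json
import os
import urllib.request

BASE_URL = "https://api.gmi-serving.com/v1"  # base URL from the steps above
MODEL_ID = "deepseek-ai/DeepSeek-V4-Pro"  # model ID from the steps above


def build_chat_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request without sending it."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",  # assumed OpenAI-convention path
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the prompt and return the first reply (needs network and a valid key)."""
    api_key = os.environ["GMI_API_KEY"]  # hypothetical environment variable name
    with urllib.request.urlopen(build_chat_request(prompt, api_key)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Calling `ask("Write a simple calculator in Python")` would then return the model's reply as a string, provided the key is valid and the endpoint behaves as assumed. Any OpenAI-compatible client library should work the same way by pointing its base URL at the address above.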

V4 is available now on GMI! Join the Discord at discord.gg/mbYhCJSbF6 to get community support.

Try it now at console.gmicloud.ai

Roan Weigert
