We ran the same prompt through Hermes and OpenClaw, both powered by GMI Cloud using DeepSeek. The outputs were completely different, not in quality, but in what each agent left behind. One created a reusable skill. One told us to do it ourselves. Here is what that means for anyone building with agents in 2026.

The Experiment

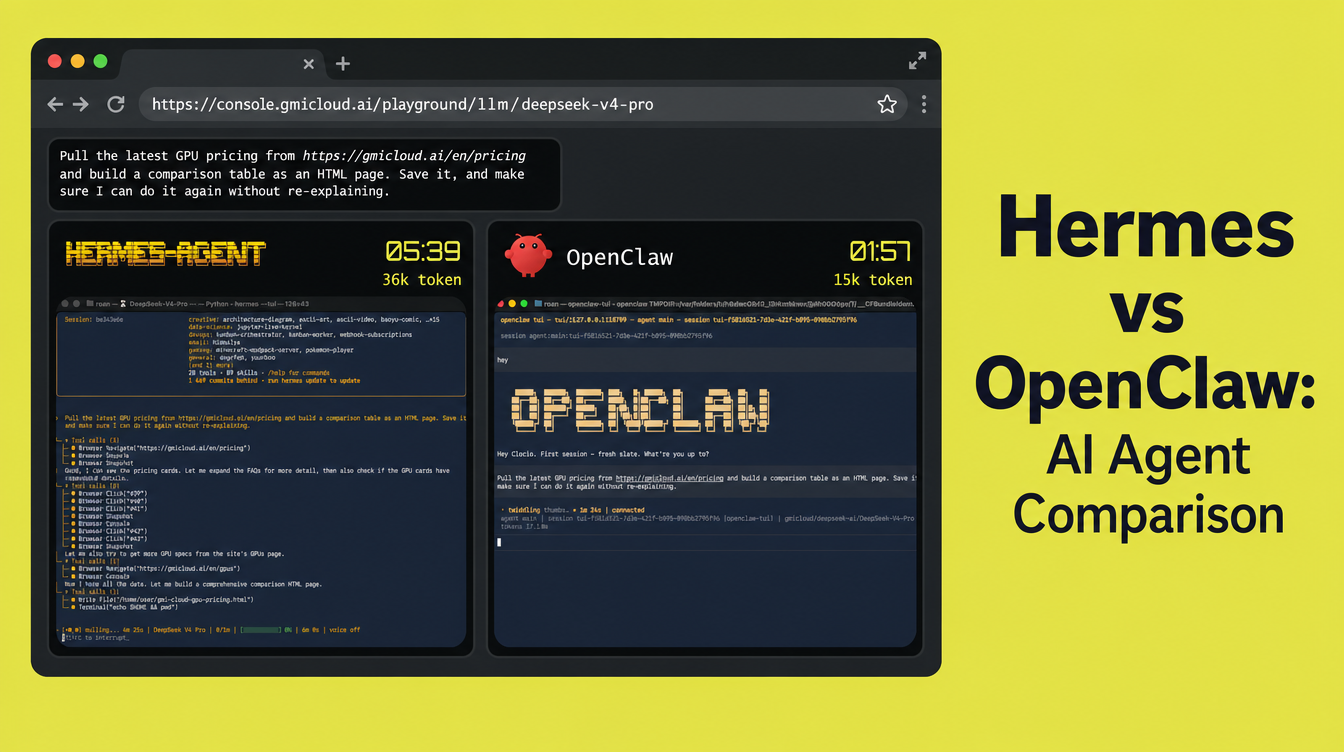

We gave both agents the same prompt:

"Pull the latest GPU pricing from gmicloud.ai/pricing and build a comparison table as an HTML page. Save it."

Both were connected to GMI Cloud using DeepSeek V4 Pro. Both ran in a split terminal, side by side. Neither had prior context.

The task was completed on both sides. But what each agent produced after the task is where the story actually starts.

GMI Cloud (@gmi_cloud), May 6, 2026:

"asked Hermes Agent and OpenClaw to scrape our site and send a report. using DeepSeek V4 from our API.

OpenClaw: 15k token, 2min 48 sec. wrote a bash script.

Hermes: 36 token, 8 min 06 sec. wrote a SKILL.md. runs itself next time."

What Hermes Left Behind

Hermes completed the task and then wrote a SKILL.md file to ~/.hermes/skills/gpu-cloud-pricing-comparison.md.

That file is a reusable instruction set the agent wrote for itself:

- When to trigger the skill (exact phrase patterns it listens for)

- How to navigate and scrape the pricing page, including handling Canvas/WebGL rendering

- How to expand collapsed FAQ sections using JavaScript

- The full HTML design spec: dark theme, CSS variables, responsive grid, print styles

- Four specific pitfalls it encountered and how to handle them on the next run

The skill is 4,390 characters of structured knowledge the agent extracted from a single run.

The next time you type anything like "pull GPU pricing from any provider," Hermes loads that skill before it starts. It skips the trial and error. It already knows the hard parts.
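To make the "loads that skill before it starts" step concrete, here is a minimal sketch of trigger-based skill lookup. It is not Hermes' actual implementation: the `trigger:` line convention, the function names, and the regex matching are all assumptions made for illustration, since the real SKILL.md format is not shown here.

```python
import re
import tempfile
from pathlib import Path

# Hypothetical convention: a skill file lists its triggers on lines that
# start with "trigger:". The real SKILL.md layout may differ.
TRIGGER_PREFIX = "trigger:"


def read_triggers(skill_text: str) -> list[str]:
    """Collect the regex patterns from lines that start with 'trigger:'."""
    patterns = []
    for line in skill_text.splitlines():
        stripped = line.strip()
        if stripped.lower().startswith(TRIGGER_PREFIX):
            pattern = stripped[len(TRIGGER_PREFIX):].strip()
            if pattern:
                patterns.append(pattern)
    return patterns


def match_skills(prompt: str, skills_dir: Path) -> list[Path]:
    """Return the skill files whose trigger patterns match the prompt."""
    matches = []
    for path in sorted(skills_dir.glob("*.md")):
        text = path.read_text(encoding="utf-8")
        if any(re.search(p, prompt, re.IGNORECASE) for p in read_triggers(text)):
            matches.append(path)
    return matches


if __name__ == "__main__":
    # Demo against a throwaway directory instead of a real ~/.hermes/skills.
    with tempfile.TemporaryDirectory() as tmp:
        skills = Path(tmp)
        (skills / "gpu-cloud-pricing-comparison.md").write_text(
            "trigger: pull .*gpu pricing\n\nSteps go here.\n", encoding="utf-8"
        )
        found = match_skills("Pull the latest GPU pricing from any provider", skills)
        print([p.name for p in found])  # ['gpu-cloud-pricing-comparison.md']
```

The point of the sketch is only that a skill stored as a plain file can be matched against a new prompt before any tool call is made, which is what lets a second run skip the trial and error.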

Agents with 20 or more self-generated skills complete similar repeat tasks 40% faster than a fresh instance, based on our internal benchmarks.

What OpenClaw Left Behind

OpenClaw completed the task and produced a gmi-cloud-pricing.sh script. The entire file is nine lines: it opens a browser tab and points you to the page.

OpenClaw executed the task using its base model knowledge and moved on. The approach, the edge cases, the scraping logic: none of it was carried forward. The next time you run the same prompt, OpenClaw begins fresh. (This holds for a default install; self-learning can be added via ClawHub plugins.)

Token Usage: Two Runs, Side by Side

We ran the same request twice on both agents. Here is what the numbers showed:

| | OpenClaw | Hermes |
|---|---|---|
| Run 1 tokens | 15,000 | 36,000 |
| Run 1 time (min:sec) | 2:50 | 6:45 |
| Run 2 tokens | 15,000 | 30,000 |
| Run 2 time (min:sec) | ~2:50 | Faster |

OpenClaw is consistent. Same tokens, same time, both runs. It executes reliably and predictably every time.

Hermes costs more on run 1: 36,000 tokens and 6 minutes 45 seconds. That is because it is doing more than completing the task. It is extracting patterns, identifying pitfalls, and writing a skill to disk for every future run.

By run 2, Hermes drops to 30,000 tokens. The skill is already loaded. The scraping logic, the edge cases, the design spec: Hermes already knows them. The gap closes, and it keeps closing with every subsequent run.

The 21,000 token delta on run 1 is the cost of building a skill. Everything after that is the return on it.
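The arithmetic behind these figures can be checked directly. The short sketch below uses only the token counts reported in the table above; the function names are ours, chosen for illustration.

```python
# Token counts per run, as reported in the table above.
OPENCLAW_TOKENS = [15_000, 15_000]
HERMES_TOKENS = [36_000, 30_000]


def per_run_gap(hermes: list[int], openclaw: list[int]) -> list[int]:
    """Extra tokens Hermes used compared with OpenClaw on each run."""
    return [h - o for h, o in zip(hermes, openclaw)]


def percent_drop(first: int, second: int) -> float:
    """Percentage reduction from the first value to the second."""
    return (first - second) / first * 100


print(per_run_gap(HERMES_TOKENS, OPENCLAW_TOKENS))      # [21000, 15000]
print(round(percent_drop(36_000, 30_000), 1))           # 16.7
```

On these two runs, the gap narrows from 21,000 to 15,000 tokens, and Hermes' own usage falls by about 16.7% between run 1 and run 2.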

The Same Prompt. Two Philosophies.

| | Hermes | OpenClaw |
|---|---|---|
| Output | SKILL.md: 4,390 chars of reusable logic | fetch.sh: 386 chars, opens a browser tab |
| Knowledge retained | Scraping logic, design spec, 4 pitfall warnings | Task complete, nothing carried forward |
| Run 1 tokens / time | 36,000 / 6:45 | 15,000 / 2:50 |
| Run 2 tokens | 30,000 (skill loaded) | 15,000 (starts fresh) |
| Over time | Gets faster, uses fewer tokens per run | Same cost every run |

This is a philosophy comparison. On a single run, both agents produce solid output. The difference is what compounds over time.

Hermes is designed around a learning loop called GEPA. Every roughly 15 tool calls, it evaluates what happened and decides if the pattern is worth saving. If yes, it writes a skill to disk: a plain file you can read, edit, and share.
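The cadence described here (review every N tool calls, save if worthwhile) can be sketched in a few lines. This is not GEPA and not Hermes' code: the `SkillReviewer` class, its callbacks, and the fixed interval of 15 are hypothetical stand-ins that show only the control flow.

```python
from dataclasses import dataclass, field
from typing import Callable

# "Roughly 15 tool calls" comes from the article; a fixed value is an assumption.
REVIEW_INTERVAL = 15


@dataclass
class SkillReviewer:
    """Hypothetical sketch: buffer tool calls and review them at a fixed interval."""

    is_worth_saving: Callable[[list[str]], bool]  # placeholder for the real evaluation
    save_skill: Callable[[list[str]], None]       # placeholder for writing a skill file
    interval: int = REVIEW_INTERVAL
    _buffer: list[str] = field(default_factory=list)

    def record(self, tool_call: str) -> bool:
        """Log one tool call; return True if this call led to a saved skill."""
        self._buffer.append(tool_call)
        if len(self._buffer) < self.interval:
            return False
        # Interval reached: hand the batch to the evaluator and start a new one.
        batch, self._buffer = self._buffer, []
        if self.is_worth_saving(batch):
            self.save_skill(batch)
            return True
        return False


if __name__ == "__main__":
    saved: list[list[str]] = []
    reviewer = SkillReviewer(
        is_worth_saving=lambda batch: len(set(batch)) > 1,  # toy heuristic
        save_skill=saved.append,
    )
    for i in range(30):
        reviewer.record(f"tool_call_{i % 3}")
    print(len(saved))  # 2
```

In a real agent the evaluation step would presumably be a model call and the save step would write a plain file to disk; both are reduced to callbacks here so the loop can be run and tested in isolation.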

OpenClaw is designed around a skill marketplace. Its power comes from 10,000+ pre-built, human-authored skills on ClawHub that you install before running a task. It is built to execute skills reliably at scale, across multiple channels simultaneously.

When to Reach for Each One

Reach for Hermes when:

- You repeat similar tasks frequently and want them to get faster over time

- You are a solo developer or small team running personal automation

- You want an agent that builds context about your workflows and compounds it

- You want skills you can inspect, version-control, and share with your team

Reach for OpenClaw when:

- You need to orchestrate multiple agents across multiple channels simultaneously

- You want a pre-built skill for a known task, installed in one command

- You are building team or production-facing automation with predictable, deterministic behavior

- You want full visibility and manual control over what your agent knows

How to Run Both on GMI Cloud

Both agents are officially supported on GMI Cloud. Setup takes under 15 minutes each.

Hermes: Full guide here >

OpenClaw: Go to demo >

Try It Yourself

The best way to understand the difference is to run the same prompt on both and look at what each agent leaves behind.

Then watch what appears after the task completes. What your agent does after the task is finished is the real product.


Roan Weigert

DevRel AI Engineer
