Run Your Own AI Agent with Hermes and GMI Cloud

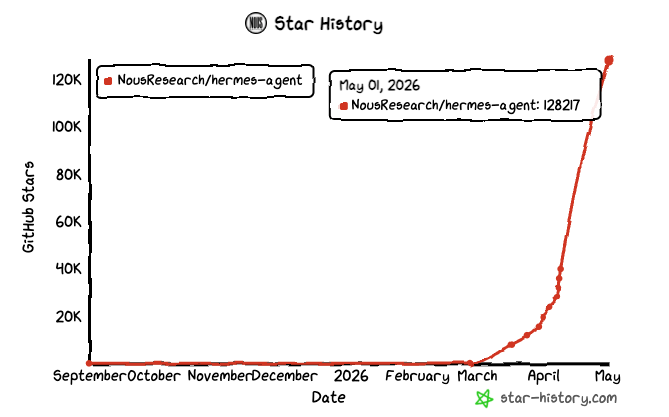

Hermes Agent went from zero to 128,000 GitHub stars in roughly ten weeks. That kind of growth does not happen by accident. It happens because the thing actually works in a way that previous agents did not.

May 01, 2026

Released on February 25, 2026, by Nous Research, Hermes is the first open-source agent with a built-in learning loop. It does not just complete tasks. It studies what it did, packages the knowledge as a reusable skill, and gets faster the next time around. According to benchmarks published alongside the ICLR 2026 paper that underpins the learning mechanism, agents with 20 or more self-generated skills complete repeated tasks 40 percent faster than a fresh instance.

GMI Cloud is now an officially supported provider inside Hermes, which means you can have your own AI agent, backed by production-grade inference, running in about ten minutes.

What Makes Hermes Different

Most AI agents are stateless. Every conversation starts from scratch, every task is treated as new, and every pattern you have shown the agent before is forgotten. Hermes breaks that.

The core mechanism is called GEPA, published as an ICLR 2026 Oral paper. Every time Hermes finishes a task, it evaluates what happened and extracts a reusable skill. Skills are stored as plain files on disk that you can read, edit, and share. The more you use the agent, the more skills it accumulates, and the faster and cheaper those repeat tasks become.

The latest release, v0.12.0 from April 30, 2026, ships with 118 bundled skills out of the box and was built by 213 community contributors across 550 merged pull requests in a single release window. That is the release velocity of a well-funded research lab.

Here is a quick overview of what the framework includes today:

| Feature | Details |
|---|---|
| Messaging platforms | Telegram, Discord, Slack, WhatsApp, Signal, Email, and more (18 total) |
| Inference providers | 20+, including GMI Cloud, Anthropic, OpenRouter, Gemini, and local models |
| Bundled skills | 118 as of v0.12.0 |
| Execution backends | Local, Docker, SSH, Daytona, Singularity, Modal |
| Memory layers | Three tiers: in-session, persistent, and long-term user modeling |
| License | MIT |
| Minimum hosting cost | Around $5 per month on a basic VPS |

What You Can Actually Do With It

Here are four real use cases that show the range of what Hermes handles well.

Daily briefings on autopilot. Tell Hermes to pull the top three AI news stories every morning at 9am, summarize them, and send the result to your Telegram. It sets up the cron job, runs it, and from then on your briefing arrives without you touching anything. The skill it learned gets reused the next time you schedule anything similar.

Background research while you work. Ask Hermes to monitor a GitHub repository and file an issue when the test suite starts flaking. Because the agent can run on a remote backend such as a VPS, it keeps watching even when your laptop is closed. This is the pattern that separates Hermes from tools like Cursor or Aider, which assume the agent and the person share a terminal.

Multi-channel coordination. Start a conversation in your terminal, continue it on Telegram from your phone, then ask Hermes to send the result to your team in Slack. One agent, one memory, multiple channels.

Scheduled reports and data pulls. Ask Hermes to pull weekly metrics from an API, format them into a summary, and send it to a channel every Monday morning. Once it learns the pattern, it handles the report automatically.
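The daily briefing and the Monday report both come down to a recurring schedule. In cron syntax those would be `0 9 * * *` (every day at 09:00) and, assuming "Monday morning" means 09:00, `0 9 * * 1`. The sketch below is a hypothetical helper, not part of Hermes, that computes the next firing time for such a schedule. It is a handy way to sanity-check what you are asking the agent to set up.

```python
from datetime import datetime, timedelta
from typing import Optional


def next_run(now: datetime, hour: int, minute: int = 0,
             weekday: Optional[int] = None) -> datetime:
    """Return the first time strictly after `now` that matches the schedule.

    Hypothetical helper for illustration only (not Hermes code).
    weekday uses datetime.weekday() numbering: Monday == 0 ... Sunday == 6.
    weekday=None means "every day", like cron `M H * * *`.
    """
    candidate = now.replace(hour=hour, minute=minute, second=0, microsecond=0)
    if candidate <= now:
        # Today's slot has already passed (or is right now), so start from tomorrow.
        candidate += timedelta(days=1)
    if weekday is not None:
        # Step forward one day at a time until we land on the requested weekday.
        while candidate.weekday() != weekday:
            candidate += timedelta(days=1)
    return candidate


if __name__ == "__main__":
    now = datetime(2026, 5, 1, 10, 30)  # Friday, May 1, 2026, 10:30
    print(next_run(now, hour=9))             # daily briefing, cron "0 9 * * *"
    print(next_run(now, hour=9, weekday=0))  # Monday report, cron "0 9 * * 1"
```

Run as written, this prints `2026-05-02 09:00:00` and `2026-05-04 09:00:00`. The helper works on naive local times and ignores daylight-saving shifts, which is fine for a quick check but not for a real scheduler.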

Why Run It on GMI Cloud

Hermes is model-agnostic. You can point it at any provider. The reason to use GMI Cloud comes down to a few things.

Setup is immediate: get an API key, paste it into the Hermes setup wizard, and you are running. GMI Cloud gives you access to a wide range of production models, including top-tier LLMs and multimodal options, all through a single API endpoint. And because Hermes adds no markup, you pay exactly what GMI Cloud charges for the underlying model.
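Because everything goes through one endpoint, a quick way to confirm your key works before handing it to Hermes is a single chat completion request. The sketch below assumes GMI Cloud exposes an OpenAI-compatible `/chat/completions` route. The base URL, the model ID, and the `GMI_API_KEY` environment variable name are all assumptions for illustration, so check the GMI Cloud documentation for the actual values.

```python
import json
import os
import urllib.request

# ASSUMPTIONS: base URL and model ID are placeholders; confirm both in the GMI Cloud docs.
BASE_URL = "https://api.gmi-serving.com/v1"
MODEL = "deepseek-ai/DeepSeek-V3"


def build_chat_request(api_key: str, prompt: str,
                       model: str = MODEL) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 64,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    key = os.environ.get("GMI_API_KEY")  # hypothetical variable name
    if not key:
        print("Set GMI_API_KEY to your GMI Cloud API key, then rerun.")
    else:
        req = build_chat_request(key, "Reply with the single word: ready")
        with urllib.request.urlopen(req, timeout=30) as resp:
            reply = json.load(resp)
        # OpenAI-compatible responses put the text at choices[0].message.content.
        print(reply["choices"][0]["message"]["content"])
```

Building the request separately from sending it keeps the example dependency-free and makes it easy to inspect the URL and headers before any network call is made.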

For personal use with budget models such as DeepSeek, a complex task typically costs around $0.30.
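To turn that per-task figure into a monthly budget, multiply by the number of complex tasks you expect and add the hosting cost from the table above. Only the $0.30 per task and the roughly $5 per month VPS figures come from this article. The five-tasks-a-day usage level is a hypothetical input, and real costs will vary with the model and the size of each task.

```python
def monthly_cost(tasks_per_day: float, cost_per_task: float = 0.30,
                 vps_per_month: float = 5.0, days: int = 30) -> float:
    """Rough monthly spend: complex-task inference plus a basic VPS."""
    inference = tasks_per_day * days * cost_per_task
    return inference + vps_per_month


if __name__ == "__main__":
    # Hypothetical usage level: five complex tasks a day for a 30-day month.
    print(f"${monthly_cost(5):.2f} per month")
```

At that hypothetical rate, 150 tasks cost about $45 in inference, so the estimate comes to $50.00 per month including the VPS.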

How the Learning Loop Works

Roughly every 15 tool calls, Hermes pauses and evaluates the session. It asks itself what patterns emerged, what steps it took, and whether those steps are worth saving. If they are, it writes a skill file to disk. That file records the context, the approach, and the outcome.
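The checkpoint cycle can be pictured as a counter that triggers a review every N tool calls. The sketch below is a toy model, not Hermes's implementation. The `SkillRecorder` class, the `worth_saving` heuristic, and the Markdown file layout are all hypothetical, chosen only to show the shape of the loop: count calls, review, and persist context, approach, and outcome to a plain file.

```python
import re
import tempfile
from dataclasses import dataclass, field
from pathlib import Path
from typing import List, Optional

REVIEW_EVERY = 15  # the article says "roughly every 15 tool calls"


def slugify(text: str) -> str:
    """Turn a task description into a safe file name stem."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


@dataclass
class SkillRecorder:
    """Toy review-and-save loop. Hypothetical: not Hermes's real code or skill format."""

    skills_dir: Path
    task: str
    steps: List[str] = field(default_factory=list)

    def record_tool_call(self, step: str, status: str) -> Optional[Path]:
        """Log one tool call; every REVIEW_EVERY calls, run a review."""
        self.steps.append(step)
        if len(self.steps) % REVIEW_EVERY == 0:
            return self.review(status)
        return None

    def worth_saving(self, status: str) -> bool:
        # Hypothetical heuristic: only keep approaches that have worked so far.
        return status == "success"

    def review(self, status: str) -> Optional[Path]:
        """Write context, approach, and outcome to a plain Markdown file."""
        if not self.worth_saving(status):
            return None
        self.skills_dir.mkdir(parents=True, exist_ok=True)
        path = self.skills_dir / f"{slugify(self.task)}.md"
        approach = "\n".join(f"{i}. {s}" for i, s in enumerate(self.steps, start=1))
        path.write_text(
            f"# {self.task}\n\n"
            f"## Context\n{self.task}\n\n"
            f"## Approach\n{approach}\n\n"
            f"## Outcome\n{status}\n",
            encoding="utf-8",
        )
        return path


if __name__ == "__main__":
    rec = SkillRecorder(Path(tempfile.mkdtemp()), task="Schedule daily AI news briefing")
    for i in range(REVIEW_EVERY):
        saved = rec.record_tool_call(f"tool call {i + 1}", status="success")
    print(saved.read_text(encoding="utf-8"))
```

Keeping the skill as a plain text file, rather than an opaque database row, is what makes it readable and editable by hand, which matches how the article describes Hermes skills.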

The next time you ask for something similar, Hermes finds the relevant skill before it starts. Instead of figuring out how to schedule a cron job from scratch, it already knows. The task runs faster and uses fewer tokens.
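Finding "the relevant skill" is a retrieval problem. This post does not describe how Hermes actually matches requests to skills, so the sketch below swaps in the simplest technique available: keyword overlap (Jaccard similarity) between the new request and the text of each skill file. The `find_skill` function and its threshold are hypothetical and serve only to illustrate checking stored skills before starting work.

```python
import re
import tempfile
from pathlib import Path
from typing import Optional, Set


def tokens(text: str) -> Set[str]:
    """Lowercase alphanumeric words, as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Share of words the two sets have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def find_skill(request: str, skills_dir: Path, threshold: float = 0.2) -> Optional[Path]:
    """Return the best-matching skill file, or None if nothing beats the threshold."""
    query = tokens(request)
    best, best_score = None, threshold
    for path in sorted(skills_dir.glob("*.md")):
        score = jaccard(query, tokens(path.read_text(encoding="utf-8")))
        if score > best_score:
            best, best_score = path, score
    return best


if __name__ == "__main__":
    skills = Path(tempfile.mkdtemp())
    (skills / "daily-briefing.md").write_text(
        "schedule a cron job for a daily news briefing", encoding="utf-8")
    (skills / "weekly-metrics.md").write_text(
        "pull weekly metrics from an API and post a summary", encoding="utf-8")
    print(find_skill("schedule a daily briefing cron job", skills))
    print(find_skill("translate this poem", skills))
```

The first lookup returns the `daily-briefing.md` path and the second returns `None`. Word overlap is crude; a production agent would more plausibly match against short skill descriptions or use embeddings, but the control flow (look up first, fall back to working from scratch) is the same.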

Over time, this compounds. The agent you have after six months of daily use is measurably faster than the one you started with, and you can verify that by reading the skill files sitting in your Hermes directory.

Watch the Full Setup

We recorded the complete setup live, from installation through sending the first real task on Telegram.

The written step-by-step guide is in our documentation: https://docs.gmicloud.ai/set-up-hermes-agent-with-gmi-cloud

Get Started

- Hermes Agent on GitHub: https://github.com/NousResearch/hermes-agent

- Get your GMI Cloud API key: https://console.gmicloud.ai/user-setting/api-keys

- Hermes provider reference: https://hermes-agent.nousresearch.com/docs/integrations/providers

Roan Weigert

DevRel AI Engineer
