The marketplace for production-ready Agents

Publish, access, and operate workflow-specific Agents, backed by GMI's model access, computing resources, and deployment tooling

170+

Models Available

Global

Data Center Coverage

99.9%

Uptime target

Trusted by teams launching production Agents

+ More coming soon

Not an agent catalog

A launchpad for Agents

Most teams do not want another directory of demos. They want an AI agent that works, and a clear path to deploy, list, and operate it in production.

GMI Agent Marketplace is where workflow-specific Agents get packaged, distributed, and run at scale, with unified model access and computing resources behind them

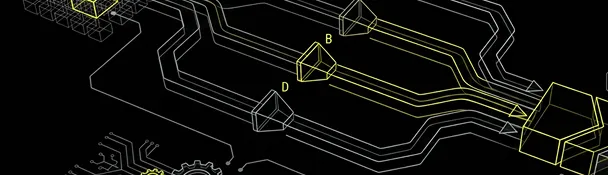

Access or deploy, in one platform

Access

Explore production-ready Agents, compare capabilities and runtime resources, and access the right workflow faster

Deploy

Deploy privately, validate the runtime, then publish to the Marketplace when you're ready

Use GMI your way

Some teams need computing resources. Some need model access through a hosted API. Others need both. GMI Agent Marketplace supports all three adoption paths, whether you're deploying an enterprise AI agent, building a customer-facing agent product, or scaling an internal workflow

Option 01

Compute only

GMI handles deployment, hosting, and runtime operations

You bring your own model layer

Option 02

Models only

GMI provides model access with 170+ supported models

You manage your own runtime environment

Option 03

Compute + Models

GMI handles model access, compute, and operations

One unified system end-to-end

Coming soon

Whether you are packaging a workflow into a customer-facing Agent or scaling an internal deployment, GMI supports modular adoption, not a one-size-fits-all stack

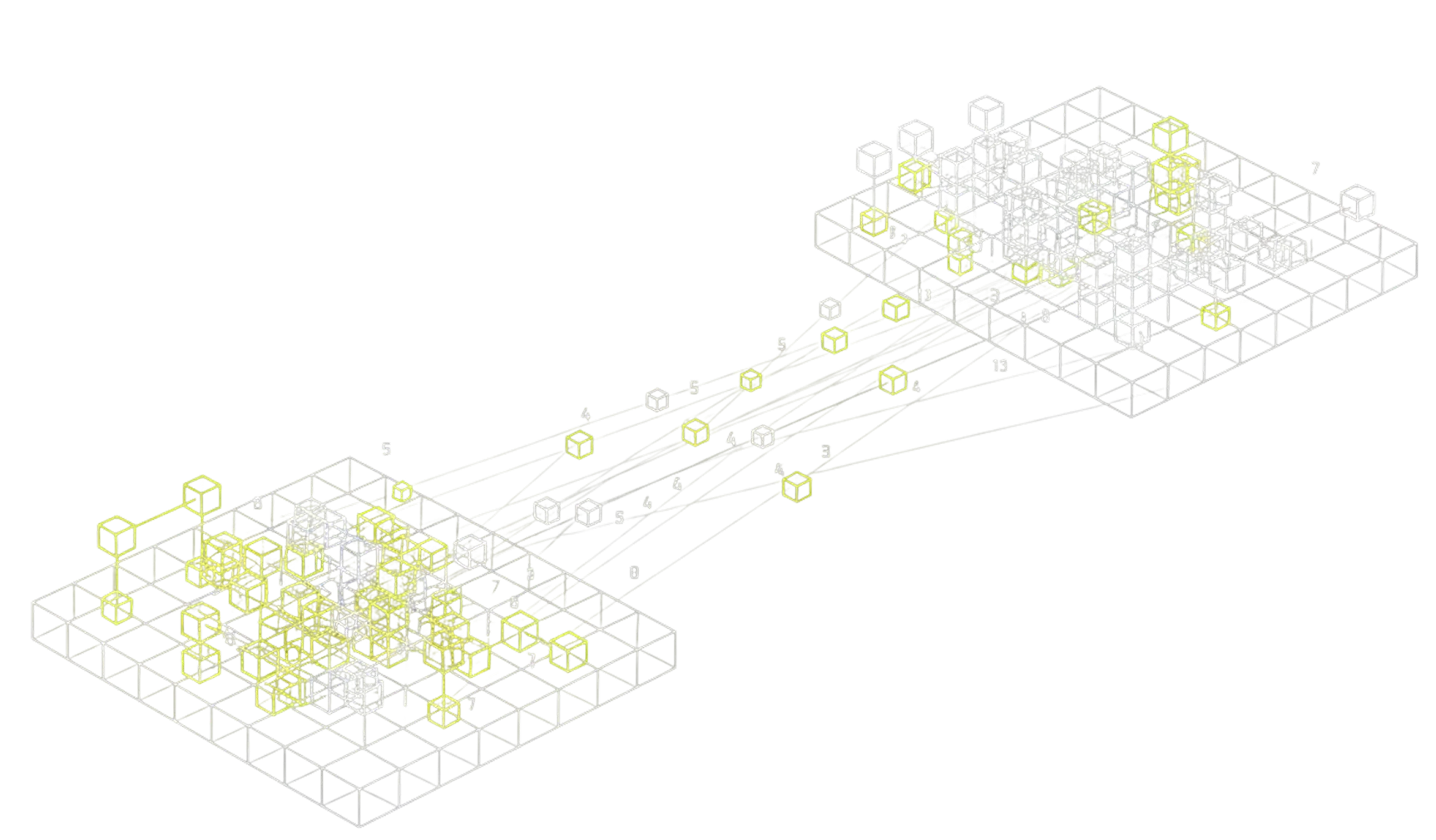

Deploy first. Launch when ready

Deploy your Agent

Start with a private deployment on GMI infrastructure

Connect models & compute

Use GMI Models, GMI Compute, or both together

Validate and publish

Test performance, then create a Marketplace listing linked to your live deployment

Operate after launch

Track usage, logs, spend, and operational metrics once you're live

For builders, GMI shortens the path from working prototype to launchable product, without a separate hosting, model setup, or distribution stack

Everything you need to go from workflow to product

Building an AI agent is the easy part. Getting it deployed, listed, and operating reliably at scale, across models, GPUs, and users, is where most teams slow down. GMI closes that gap with one unified platform instead of five stitched-together tools

Launch faster

From validation to packaging to listing, one path, not three separate projects

Model + infra, unified

Model access and runtime compute in one place. No stack assembly required

Transparent by default

Buyers see pricing, availability, and runtime specs before they commit

Production-grade, not prototype

Deploy, publish, and operate, not just demo. Designed for teams that need to ship

Full visibility after launch

Usage, logs, spend, and performance, all in one dashboard once you're live

One platform. Built for any Agent, any workflow, any team

Client cases

From external service Agents to internal enterprise automation, teams across industries are launching on GMI

Topify

Enterprise AI · External Agent

Topify used GMI's MaaS and container infrastructure to launch an enterprise-ready Agent deployment platform. With access to 100+ models through an OpenAI-compatible API, container hosting, and deployment support from GMI, Topify delivers pre-configured AI assistants to enterprise teams with custom personalities, tool policies, and usage metering

- 2-day launch from setup to deployed control plane, proxy, and admin dashboard

- Significant reduction in setup and configuration time per client

- Dramatically faster deployment vs. custom integrations per customer

NemoClaw

Open Source · Early Access

An early-access, open ecosystem Agent launched on GMI with verified marketplace presence, better discoverability, and clearer trust signals for community and enterprise users

- Verified marketplace presence for early-access launch

- Improved discoverability and trust for open ecosystem Agents

- Better packaging for hybrid and community-driven entry points

GMI Cloud Sales Ops Agent

Revenue Ops · Internal Agent

Triages leads, drafts responses, routes opportunities, and syncs activity to CRM, packaged as a monitored, reusable production Agent instead of a one-off internal script

- 3× faster lead response handling

- 40% higher qualified-meeting conversion

- Centralized post-launch visibility across usage and performance

Knowledge Ops Agent

Knowledge Ops · Enterprise

Answers process questions, summarizes tool updates, and supports follow-up, deployed as a trackable, team-wide production Agent across 800+ internal users

- 82% reduction in manual knowledge lookup time

- ~14 hours saved per team each week on repetitive Q&A

- 1,200+ daily queries served with sub-2s average response

- 3× faster onboarding ramp for new hires

One platform

Not a patchwork of tools

Most teams can build an Agent. Fewer can ship it. GMI connects deployment, model access, discoverability, and visibility in one product

| Capability | Self-Hosted / Stitched Stack | GMI Agent Marketplace |
|---|---|---|
| Deployment + launch path | Manual | Included |
| Model + compute support | Separate setup | Included |
| Resource transparency | Manual | Included |
| Marketplace access layer | Separate system | Included |
| Usage and logs | Separate tools | Included |
| Go-live visibility | Limited | Included |
| Commercialization path | Custom build | Included |

FAQ

Get quick answers to common queries in our FAQs

Your Agent is ready

Now make it launchable

Deploy, list, and operate, with the compute, model access, and visibility to go from workflow to product