Multimodal AI workflows enable creative teams to combine text, images, video and audio generation into structured pipelines that support faster iteration, consistent output and scalable creative production.

Key things to know:

- What multimodal AI workflows are and how they connect text, image, video and audio generation

- Why modern creative work requires systems that coordinate multiple AI models and steps

- How workflows replace isolated tools with connected pipelines that preserve creative intent

- The role of parallel processing and iteration loops in accelerating creative exploration

- Why multimodal workflows improve consistency and reproducibility across creative assets

- How structured pipelines help teams maintain brand alignment and quality standards

- The importance of shared workflows for collaboration between writers, designers and producers

- How cloud-based GPU infrastructure allows creative pipelines to scale from prototypes to large campaigns

Modern creative work rarely happens in a single medium. A campaign might begin as text, evolve into visuals, expand into video, and finish with audio or interactive elements. Creative teams move fluidly between formats, refining ideas as they go. Multimodal AI workflows mirror this reality, enabling creators to combine text, image, video and audio generation into cohesive systems rather than disconnected tools.

Instead of treating generative models as isolated endpoints, creative teams are increasingly building workflows that coordinate multiple models and steps. These workflows don’t just produce outputs; they shape how ideas are explored, refined and delivered at scale. More importantly, they reshape the economics of creative production by easing the long-standing tradeoff between quick, good and cheap, in which a project could traditionally have only two of the three.

This is the design philosophy behind GMI Studio – a visual, workflow-native platform built to orchestrate multimodal generation into production-ready pipelines rather than isolated experiments.

From isolated tools to connected pipelines

Early generative AI tools were designed for single tasks. One tool generated text. Another generated images. A third handled video or audio. While powerful on their own, these tools often forced creators to manually move outputs between systems, introducing friction and inconsistency.

Multimodal workflows change that by connecting these capabilities into a single pipeline. Text generation can feed directly into image creation. Visual outputs can inform video scenes. Audio narration can be synchronized automatically. The workflow defines how modalities interact, ensuring that creative intent carries through every step.
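The hand-off between modalities can be sketched as a chain of stages that share one context object. This is a minimal illustration under stated assumptions, not GMI Studio's API: the `Brief` class, the stage functions and their string outputs are hypothetical stand-ins for real model calls.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Brief:
    """Shared creative context carried through every stage (hypothetical structure)."""
    concept: str
    style: str
    artifacts: dict = field(default_factory=dict)


# Each stage stands in for a real model call; here they only build strings.
def write_script(brief: Brief) -> Brief:
    brief.artifacts["script"] = f"Script about {brief.concept}"
    return brief


def render_image(brief: Brief) -> Brief:
    script = brief.artifacts["script"]
    brief.artifacts["image"] = f"image[{brief.style}] from '{script}'"
    return brief


def render_video(brief: Brief) -> Brief:
    brief.artifacts["video"] = f"video from {brief.artifacts['image']}"
    return brief


def add_narration(brief: Brief) -> Brief:
    brief.artifacts["audio"] = f"narration of '{brief.artifacts['script']}'"
    return brief


def run_pipeline(brief: Brief, stages: list[Callable[[Brief], Brief]]) -> Brief:
    # The stage list defines how modalities interact; the brief carries intent forward.
    for stage in stages:
        brief = stage(brief)
    return brief


STAGES = [write_script, render_image, render_video, add_narration]
result = run_pipeline(Brief("a spring product launch", "watercolor"), STAGES)
```

Because every stage reads from and writes to the same `Brief`, the style chosen at the start is still available when the image is rendered, which is the sense in which intent carries through the pipeline.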

For creative teams, this integration reduces context switching and accelerates iteration. Instead of managing files and prompts manually, they operate inside a system that understands the flow of their work. That system-level orchestration is what makes the added speed sustainable: production gets faster without degrading quality and without compounding labor costs.

Why multimodality demands workflows

Multimodal AI is inherently more complex than single-modal generation. Each modality has different performance characteristics, data formats and latency requirements. Coordinating them requires more than a simple API call.

Consider a typical creative pipeline. A team generates a concept description, uses it to produce images, selects promising visuals, generates short video sequences, and adds audio overlays. Each step depends on the previous one. Running this sequentially on a single system quickly becomes slow and brittle.

Workflows allow these steps to run in parallel where possible, branch into variations, and converge on final results. They make it possible to explore creative space efficiently instead of waiting on long, linear queues.
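One way to picture branching and converging is a fan-out, fan-in pattern with Python's `asyncio`. The sketch below assumes hypothetical `generate_image` and `generate_video` coroutines that only simulate latency; a real workflow would call actual model endpoints and use a real selection step.

```python
import asyncio


async def generate_image(prompt: str, variant: int) -> str:
    # Placeholder for a real image-model call; the sleep simulates latency.
    await asyncio.sleep(0.01)
    return f"{prompt} (variant {variant})"


async def generate_video(image: str) -> str:
    # Placeholder for a slower video-model call.
    await asyncio.sleep(0.01)
    return f"video<{image}>"


async def branch_and_converge(prompt: str, n_variants: int, keep: int) -> list[str]:
    # Branch: all image variants are requested concurrently.
    images = await asyncio.gather(
        *(generate_image(prompt, i) for i in range(n_variants))
    )
    # Converge: a stand-in selection step keeps the first `keep` images.
    selected = images[:keep]
    # Only the selected images proceed to the video stage, again concurrently.
    return list(await asyncio.gather(*(generate_video(img) for img in selected)))


videos = asyncio.run(branch_and_converge("city skyline at dusk", n_variants=6, keep=2))
```

The six image requests overlap instead of queueing, and the more expensive video stage runs only for the variants that survive selection.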

For image generation in particular, parallel and branching workflows can often deliver on all three corners of the quick, good and cheap triangle: quick turnaround, consistent quality, and low marginal cost through reuse and batching.

Empowering iteration at creative speed

Iteration is the heart of creative work. The best ideas rarely emerge fully formed; they evolve through exploration and refinement. Multimodal workflows support this by enabling rapid feedback loops.

Instead of generating one image at a time, teams can produce dozens of variations, evaluate them automatically or manually, and refine prompts or parameters based on results. Video and audio workflows benefit similarly, allowing creators to test multiple directions before committing. Video generation usually takes somewhat longer than image generation because of rendering, but the added latency is small next to traditional production timelines, and the output can still reach strong quality at significantly lower cost.
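The generate, evaluate and refine loop can be sketched in a few lines. Everything here is an assumption made for illustration: the `guidance` parameter, the `score` function (a stand-in for automatic or human review) and the seeded random nudges are not taken from any particular tool.

```python
import random


def score(candidate: dict) -> float:
    # Hypothetical evaluation: higher is better, peaking when guidance is 7.0.
    return -abs(candidate["guidance"] - 7.0)


def propose(base: dict, count: int, rng: random.Random) -> list[dict]:
    # Produce `count` variations by nudging one parameter around the current best.
    return [
        {**base, "guidance": base["guidance"] + rng.uniform(-1.0, 1.0)}
        for _ in range(count)
    ]


def refine(start: dict, rounds: int, per_round: int, seed: int = 0):
    rng = random.Random(seed)
    best, history = start, [score(start)]
    for _ in range(rounds):
        # Keep the current best in the pool so a round can never make things worse.
        candidates = propose(best, per_round, rng) + [best]
        best = max(candidates, key=score)
        history.append(score(best))
    return best, history


best, history = refine({"prompt": "retro poster", "guidance": 3.0}, rounds=5, per_round=12)
```

Each round evaluates a dozen variations at once rather than one at a time, and `history` records how the best score changes from round to round.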

Running variations in parallel dramatically reduces human wait time. When workflows are designed to execute across multiple GPUs, creative teams spend less time waiting and more time deciding. The bottleneck shifts from infrastructure to imagination, and this shift turns AI from a novelty into a strategic asset.

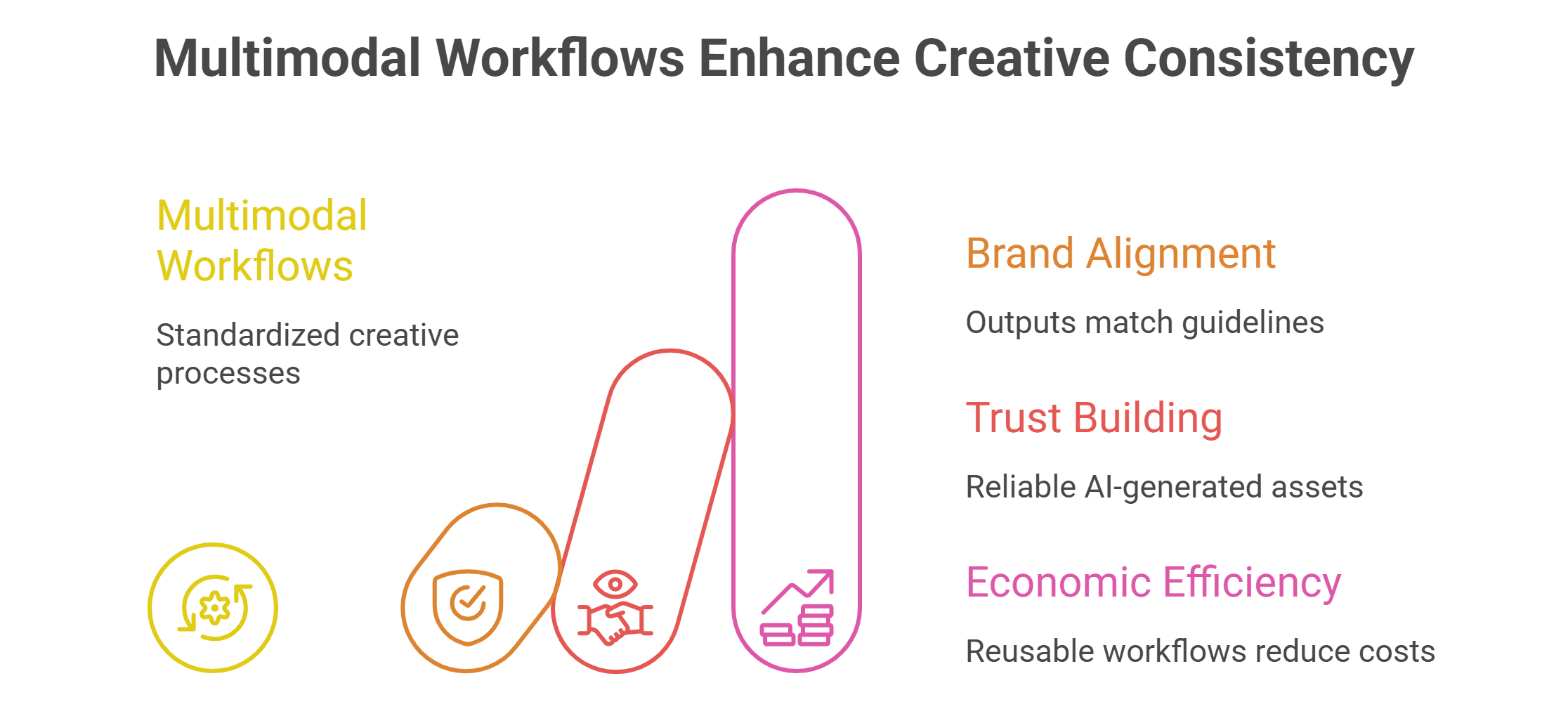

Consistency and reproducibility in creative output

One of the biggest challenges enterprises face with generative AI is consistency. Creative outputs must align with brand guidelines, tone and quality standards. Ad hoc prompting makes this difficult, especially when multiple team members are involved.

Multimodal workflows address this by encoding creative logic into the pipeline itself. Because models, parameters and processing steps are fixed in the workflow rather than chosen per request, the same workflow produces outputs of the same style and quality even when inputs change, and variance between team members drops.
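A simple way to standardize settings is to freeze them in one immutable object and tag outputs with a hash of that object. The sketch below is illustrative only: the field names, the model name `example-image-model` and the style suffix are hypothetical.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class WorkflowSpec:
    """Settings that should stay fixed between runs (all fields are illustrative)."""
    image_model: str
    steps: int
    guidance: float
    seed: int
    style_suffix: str

    def fingerprint(self) -> str:
        # Stable hash of the settings, usable as a tag on every asset the spec produces.
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]

    def build_prompt(self, subject: str) -> str:
        # Inputs vary, but the brand styling appended to them does not.
        return f"{subject}, {self.style_suffix}"


BRAND_SPEC = WorkflowSpec("example-image-model", 30, 7.0, 1234, "flat colors, brand palette")
a = BRAND_SPEC.build_prompt("running shoes")
b = BRAND_SPEC.build_prompt("water bottle")
```

Two team members who load the same spec get the same fingerprint, so any asset can be traced back to the exact settings that produced it.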

This reproducibility builds trust. Creative directors can rely on AI-generated assets knowing they will meet expectations. Teams can revisit and reuse workflows for future projects instead of reinventing processes each time. Reusability also makes AI generation economically compelling: once a workflow is defined, its cost is amortized across every execution, driving down the effective cost per asset over time.
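The amortization argument comes down to simple arithmetic: a one-time setup cost is spread over every asset, while the per-run cost stays constant. The figures below are hypothetical and chosen only to show the shape of the curve.

```python
def cost_per_asset(setup_cost: float, marginal_cost: float, assets: int) -> float:
    """Effective cost per asset: setup spread over all assets, plus the per-run cost."""
    if assets <= 0:
        raise ValueError("assets must be a positive integer")
    return setup_cost / assets + marginal_cost


# Hypothetical numbers: 500 units to design the workflow, 0.20 units per generated asset.
costs = {n: cost_per_asset(500.0, 0.20, n) for n in (10, 100, 1000)}
```

As the number of assets grows, the effective cost approaches the marginal cost, which is why reuse matters more than the price of any single run.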

Collaboration across creative teams

Modern creative teams are rarely isolated individuals. Designers, writers, editors and producers collaborate across disciplines. Multimodal workflows provide a shared structure that aligns their efforts.

Instead of passing files and instructions manually, teams share workflows. A writer adjusts text generation logic. A designer tweaks visual parameters. A producer refines video sequencing. Each change updates the system without breaking the overall flow.

This collaborative model mirrors how creative software evolved in other domains. Versioned workflows become living assets that teams improve over time. In this sense, the workflow itself becomes the product – a structured, reusable system that balances speed, quality and cost by design.

Scaling creative production

As creative demands grow, manual processes struggle to keep up. Campaigns expand across platforms. Content must be localized, personalized and refreshed continuously. Multimodal workflows make this scale manageable.

By running workflows on cloud-based GPU infrastructure, teams can scale execution dynamically. A workflow that generates a handful of assets during development can produce thousands during a campaign launch. The underlying system handles concurrency, scheduling and resource allocation.
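The idea that the same workflow serves both a prototype and a campaign can be sketched with a worker pool whose size is the only thing that changes. This is an assumption-laden illustration: `render_asset` is a hypothetical placeholder for a GPU-backed call, and a real platform would manage scheduling and resources itself.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product


def render_asset(job: dict) -> str:
    # Placeholder for a GPU-backed generation call.
    return f"{job['template']}-{job['locale']}"


def run_batch(jobs: list[dict], max_workers: int) -> list[str]:
    # The workflow logic is unchanged; only the pool size and job list grow.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(render_asset, jobs))


# Development: a single asset on a single worker.
prototype = run_batch([{"template": "hero", "locale": "en"}], max_workers=1)

# Launch: every template in every locale, processed concurrently.
campaign_jobs = [
    {"template": t, "locale": loc}
    for t, loc in product(("hero", "banner", "story"), ("en", "de", "ja", "pt"))
]
campaign = run_batch(campaign_jobs, max_workers=8)
```

`pool.map` returns results in the same order as the input jobs, so downstream steps can rely on a stable mapping between requests and assets.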

In platforms like GMI Studio, this orchestration layer is built directly into the workflow environment, allowing teams to scale multimodal pipelines without rebuilding infrastructure or rewriting logic.

This elasticity allows creative teams to respond quickly to demand without long lead times or infrastructure planning. Creativity stays fluid even as output scales.

This is where the quick–good–cheap triangle becomes most visible in business terms: automation preserves quality, parallelism preserves speed, and reproducibility preserves cost efficiency.

Bridging creativity and production

One of the most powerful aspects of multimodal workflows is how they bridge the gap between creative exploration and production deployment. In traditional pipelines, experimental outputs often need to be rebuilt for production environments.

Workflow-native systems eliminate this handoff. The same pipeline used for exploration can be hardened and reused in production. This continuity reduces errors and accelerates time to market.

For enterprises, this means AI-generated creative assets can move directly into operational use. For creators, it means fewer compromises between experimentation and delivery. Platforms like GMI Studio are built around this orchestration model – enabling multimodal pipelines that move seamlessly from ideation to scaled execution within a single visual environment.

Lowering the barrier to advanced creation

Historically, building complex AI workflows required deep engineering expertise. Local setups, GPU management and dependency issues created steep learning curves. This limited adoption among non-technical creators.

Visual, cloud-based workflow platforms lower this barrier. By abstracting infrastructure complexity and presenting workflows as intuitive graphs, they make advanced multimodal creation accessible to a broader audience.
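Behind a visual graph, a workflow is a set of steps with dependencies. The sketch below uses Python's standard `graphlib` module to show how such a graph yields an execution order and groups of steps that could run at the same time. The node names are illustrative and do not describe how any specific platform stores its graphs.

```python
from graphlib import TopologicalSorter

# Each node maps to the set of nodes it depends on (names are illustrative).
WORKFLOW = {
    "script": set(),
    "images": {"script"},
    "video": {"images"},
    "narration": {"script"},
    "final_cut": {"video", "narration"},
}


def parallel_batches(graph: dict) -> list[list[str]]:
    """Group nodes into batches whose members have no unmet dependencies."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only to make the output stable
        batches.append(ready)
        sorter.done(*ready)
    return batches


batches = parallel_batches(WORKFLOW)
```

In this example the image and narration steps land in the same batch because both depend only on the script, which is the kind of parallelism a visual editor can expose without the creator writing any scheduling logic.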

Creators can focus on designing how ideas flow rather than wrestling with systems. The result is more experimentation, more innovation and more expressive outputs.

The future of creative teams

As generative AI continues to evolve, creative teams will increasingly think in terms of systems rather than tools. Multimodal workflows – increasingly built inside platforms like GMI Studio – will become the foundation for how ideas are explored, refined and produced.

Teams that embrace this approach gain speed, consistency and scale without sacrificing creative control. Those that rely on isolated tools risk fragmentation and inefficiency.

Multimodal AI workflows are not just a technical upgrade; they are a new way of working. They align AI with the realities of creative production, empowering teams to move from inspiration to execution with unprecedented efficiency.

In this new landscape, creativity is no longer constrained by format or infrastructure. It flows through pipelines designed to support imagination at scale.

Frequently Asked Questions About How Multimodal AI Workflows Power Modern Creative Teams

What is a multimodal AI workflow in creative production?

A multimodal AI workflow is a structured pipeline that combines multiple types of generative models, such as text, image, video and audio. Instead of relying on separate tools, teams use a single workflow that connects these steps so the output of one stage automatically feeds into the next.

Why are multimodal workflows important for modern creative teams?

Creative work often moves between formats like text, visuals, video and sound. Multimodal workflows reflect this process by coordinating different AI models in one system, allowing teams to develop and refine ideas across multiple media without constantly switching tools.

How do multimodal AI workflows improve creative iteration?

They allow teams to generate multiple variations at once, test different directions and refine results quickly. Parallel execution means creators can explore ideas faster and spend more time choosing the best direction rather than waiting for results.

How do multimodal workflows help maintain consistency in AI-generated content?

The workflow defines the structure of how content is produced, including models, parameters and processing steps. Because the same system runs each time, outputs remain aligned with creative guidelines even when different inputs are used.

How do multimodal workflows support collaboration between creative roles?

A shared workflow lets writers, designers and producers contribute to the same pipeline. Each team member can adjust specific parts of the system, such as text generation, visual settings or video sequencing, while the overall process stays intact.
