This article explains how low-latency inference architectures enable real-time speech and audio applications, and why audio workloads require fundamentally different design, scheduling and scaling strategies than text-based AI systems.

What you’ll learn:

- why speech and audio inference is uniquely sensitive to latency

- how streaming inference differs from request-based execution

- how audio context and state management impact memory and concurrency

- how to balance latency and throughput in real-time audio workloads

- why parallel execution reduces end-to-end speech inference delay

- how scheduling priorities protect audio pipelines in mixed workloads

- the role of networking and data movement in real-time audio systems

- how elastic scaling prevents dropped streams during traffic spikes

Speech and audio AI systems operate under some of the tightest latency constraints in modern machine learning. Unlike text-based interfaces, where small delays may be tolerated, audio interactions are continuous and time-bound. Users expect spoken responses to arrive naturally, interruptions to be handled smoothly and audio streams to remain synchronized in real time. In this environment, inference latency directly determines usability.

As speech-driven applications scale – from voice assistants and call-center automation to real-time transcription, translation and audio analysis – low-latency inference becomes a system-wide challenge. Achieving it requires more than fast models. It depends on how inference pipelines are designed, scheduled and scaled across GPU infrastructure.

Why audio inference is uniquely latency-sensitive

Audio workloads differ fundamentally from batch-oriented AI tasks. Speech is temporal. Delays of even tens of milliseconds can disrupt conversational flow, cause overlapping speech or create unnatural pauses. For streaming transcription or real-time translation, latency can also accumulate: if processing a frame takes longer than the audio it contains, each later frame queues behind it and the lag keeps growing for as long as the stream runs.

Unlike text prompts, audio inputs arrive as a steady stream of small chunks rather than discrete requests. Inference systems must process these chunks incrementally while preserving context across time. This creates pressure to minimize queueing, avoid batching delays and maintain predictable execution paths.

Low-latency audio inference is therefore less forgiving of architectural inefficiencies than many other AI workloads.
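The accumulation effect can be shown with a minimal simulation. It assumes fixed-size chunks arriving at a steady rate and a single worker that processes them in arrival order; the function name `simulate_lag` and the 20 ms chunk size are illustrative choices, not figures from a real system. When per-chunk processing time exceeds the chunk duration (a real-time factor above 1), lag grows with every chunk instead of staying flat.

```python
def simulate_lag(chunk_ms: float, process_ms: float, num_chunks: int) -> list[float]:
    """Lag (ms) from each chunk's arrival until its processing finishes.

    Hypothetical model: chunk i arrives at i * chunk_ms, and one worker
    handles chunks strictly in order, one at a time.
    """
    lags = []
    worker_free_at = 0.0
    for i in range(num_chunks):
        arrival = i * chunk_ms
        # Work starts once the chunk has arrived and the worker is idle.
        start = max(arrival, worker_free_at)
        finish = start + process_ms
        worker_free_at = finish
        lags.append(finish - arrival)
    return lags


if __name__ == "__main__":
    # 20 ms chunks processed in 15 ms: the worker keeps up and lag stays flat.
    print(simulate_lag(20.0, 15.0, 5))  # [15.0, 15.0, 15.0, 15.0, 15.0]
    # 20 ms chunks processed in 25 ms: each chunk adds 5 ms of backlog.
    print(simulate_lag(20.0, 25.0, 5))  # [25.0, 30.0, 35.0, 40.0, 45.0]
```

This is one reason to track whether a pipeline sustains a real-time factor below 1 under load, rather than only its speed on a single request.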

Streaming inference as the default pattern

Most production speech systems rely on streaming inference rather than request-response execution. Audio frames are processed as they arrive, with partial outputs emitted continuously.

Streaming places specific demands on GPU inference systems. Requests are long-lived, often occupying execution slots for extended periods. Traditional batching strategies are harder to apply, as delaying frames to build batches can break real-time guarantees.

Effective architectures treat streaming workloads differently from batch inference. They isolate streaming pipelines, limit contention with background jobs and use micro-batching only where it does not compromise responsiveness.
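Deadline-bounded micro-batching can be sketched as follows, assuming frames from many concurrent streams land on one shared queue with arrival timestamps. `Frame`, `form_micro_batches`, `max_batch` and `max_wait_ms` are hypothetical names for illustration. To keep the sketch deterministic it groups an already-collected, time-sorted list; a live server would apply the same rule with a timer, dispatching each batch as soon as it is full or no later than `max_wait_ms` after its first frame arrived, so the delay batching adds to any frame is capped explicitly.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    stream_id: str
    arrival_ms: float


def form_micro_batches(
    frames: list[Frame], max_batch: int, max_wait_ms: float
) -> list[list[Frame]]:
    """Group time-sorted frames into micro-batches.

    Grouping rule: a batch is closed when it already holds max_batch frames,
    or when the next frame arrives more than max_wait_ms after the batch's
    first frame.
    """
    batches: list[list[Frame]] = []
    current: list[Frame] = []
    for frame in frames:
        full = len(current) >= max_batch
        expired = bool(current) and frame.arrival_ms - current[0].arrival_ms > max_wait_ms
        if current and (full or expired):
            batches.append(current)
            current = []
        current.append(frame)
    if current:
        batches.append(current)
    return batches


if __name__ == "__main__":
    frames = [Frame("a", 0.0), Frame("b", 2.0), Frame("c", 4.0),
              Frame("a", 20.0), Frame("b", 21.0)]
    batches = form_micro_batches(frames, max_batch=4, max_wait_ms=5.0)
    print([[f.stream_id for f in b] for b in batches])  # [['a', 'b', 'c'], ['a', 'b']]
```

Tuning `max_wait_ms` is the latency and throughput trade-off in miniature: larger values form bigger batches and raise GPU utilization, at the cost of up to that much added delay per frame.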

Managing context and state efficiently

Speech models rely heavily on temporal context. Acoustic features, phoneme history and language model state must be preserved across frames. This stateful behavior increases memory pressure and limits concurrency.

Inference systems must manage context carefully to avoid bloating memory usage. Techniques such as sliding windows, context compression and selective state retention help reduce memory footprint without degrading accuracy.

Poor context management leads to fewer concurrent streams per GPU, forcing teams to scale capacity aggressively and driving up cost.
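A sliding window over recent frames is the simplest of these techniques. The sketch below assumes one context object per stream and uses Python's `collections.deque` with `maxlen`, which discards the oldest entry on each append once full; `SlidingContext` and `window_frames` are hypothetical names. Because state is capped at `window_frames` entries, per-stream memory stays constant however long the session runs.

```python
from collections import deque


class SlidingContext:
    """Hypothetical per-stream context holding only the most recent frames."""

    def __init__(self, window_frames: int) -> None:
        # deque(maxlen=N) drops the oldest item automatically once N is reached.
        self.frames: deque = deque(maxlen=window_frames)

    def push(self, frame) -> None:
        self.frames.append(frame)

    def context(self) -> list:
        # Frames ordered oldest to newest, ready to feed the next model step.
        return list(self.frames)


if __name__ == "__main__":
    ctx = SlidingContext(window_frames=3)
    for t in range(10):
        ctx.push(f"frame{t}")
    print(ctx.context())  # ['frame7', 'frame8', 'frame9']
```

With a fixed cap, per-stream state is roughly `window_frames` times the size of one frame's state, so the number of concurrent streams a GPU can hold is approximately its free memory divided by that figure. Real models carry other state too, such as recurrent or attention caches, but the same bounding principle applies.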

Latency versus throughput in audio workloads

Audio inference architectures must strike a careful balance between latency and throughput. Over-optimizing for latency leads to underutilized GPUs and high cost per stream. Over-optimizing for throughput introduces jitter, buffering and unacceptable delays.

The optimal balance depends on application requirements. Live voice interfaces prioritize latency above all else. Transcription services may tolerate slightly higher latency to improve efficiency. Audio analytics pipelines may batch aggressively.

Inference systems must support different execution profiles within the same platform, routing workloads based on latency tolerance and real-time requirements.
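Routing by latency tolerance can be sketched as choosing, per workload, the most throughput-friendly execution profile whose batching delay still fits its latency budget. The three profiles loosely mirror the live voice, transcription and analytics cases above; their names, wait times and batch sizes, and the `route` function itself, are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExecutionProfile:
    name: str
    max_batch_wait_ms: float  # longest a request may wait to join a batch
    max_batch_size: int


# Hypothetical profiles, sorted by increasing max_batch_wait_ms.
PROFILES = [
    ExecutionProfile("realtime", max_batch_wait_ms=0.0, max_batch_size=1),
    ExecutionProfile("interactive", max_batch_wait_ms=20.0, max_batch_size=8),
    ExecutionProfile("bulk", max_batch_wait_ms=500.0, max_batch_size=64),
]


def route(latency_budget_ms: float, est_compute_ms: float) -> ExecutionProfile:
    """Pick the most relaxed profile whose batching wait plus compute fits the budget.

    Falls back to the strictest profile when nothing fits, since waiting longer
    would only make the miss worse.
    """
    chosen = PROFILES[0]
    for profile in PROFILES:
        if profile.max_batch_wait_ms + est_compute_ms <= latency_budget_ms:
            chosen = profile
    return chosen


if __name__ == "__main__":
    print(route(40.0, 30.0).name)    # realtime: live voice, tight budget
    print(route(300.0, 30.0).name)   # interactive: transcription tolerates a little delay
    print(route(5000.0, 30.0).name)  # bulk: offline audio analytics
```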

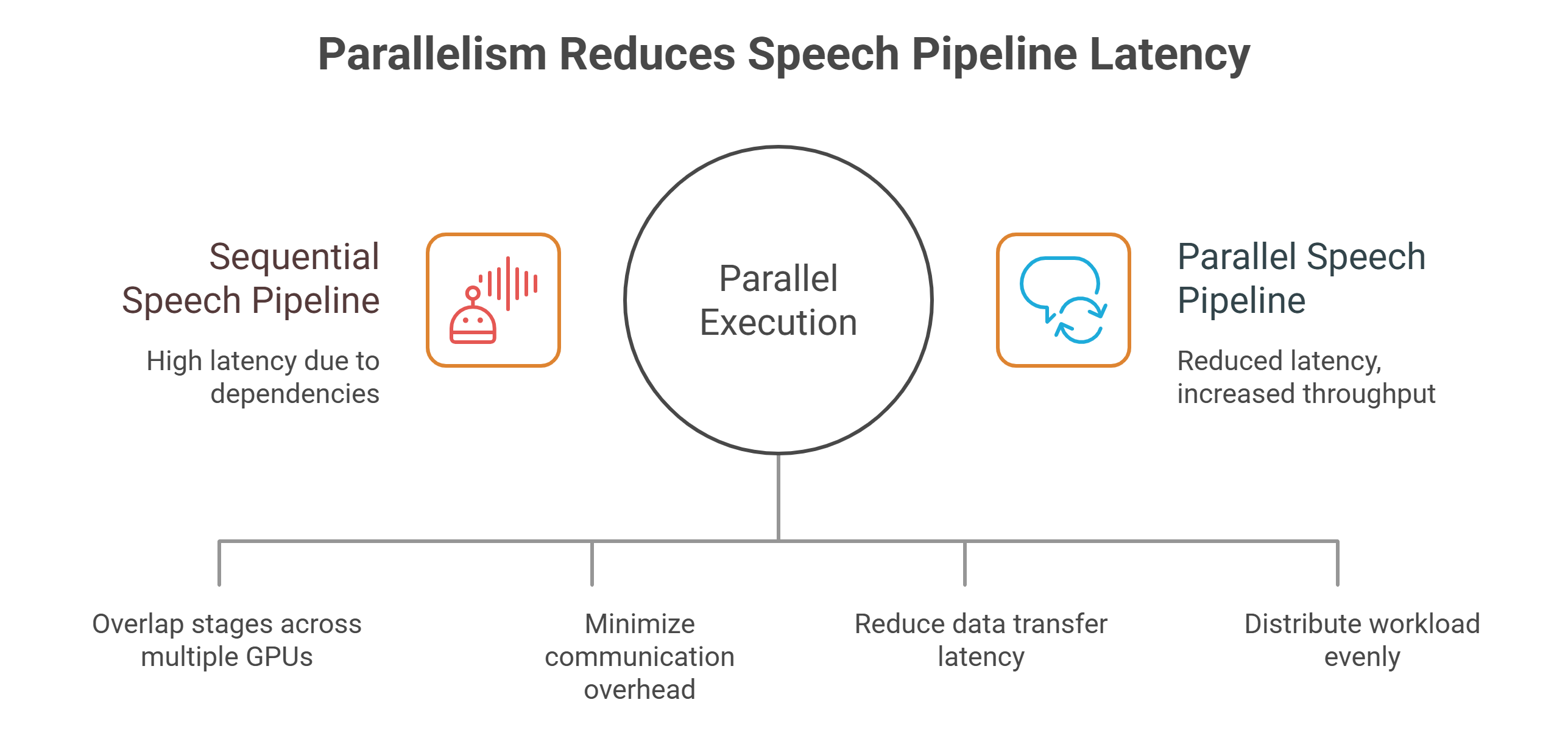

Parallelism in speech pipelines

Many speech systems involve multiple inference stages: acoustic modeling, language modeling, punctuation, diarization or emotion detection. When executed sequentially, these stages compound latency.

Parallel execution reduces end-to-end delay by overlapping stages across GPUs. For example, acoustic decoding can proceed while language model refinement runs in parallel, reducing the critical path.

Parallelism requires careful orchestration and fast interconnects. Without sufficient bandwidth, parallel stages introduce communication overhead that erodes gains.
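The latency effect of overlapping independent stages can be sketched with `asyncio`, where `asyncio.sleep` stands in for model calls running elsewhere, such as on separate GPUs or inference servers. The stage names and durations are illustrative assumptions, and the sketch assumes diarization and emotion detection consume the raw audio chunk rather than the transcript, so they need not wait for acoustic decoding. Sequential execution costs roughly the sum of the stage times; concurrent execution costs roughly the longest one.

```python
import asyncio
import time


async def stage(name: str, duration_s: float) -> str:
    # Stand-in for a model call running elsewhere (another GPU or inference server);
    # asyncio.sleep yields control the way awaiting a remote call would.
    await asyncio.sleep(duration_s)
    return name


async def run_sequential(stages: list[tuple[str, float]]) -> list[str]:
    # Each stage waits for the previous one: latency is roughly the sum of durations.
    results = []
    for name, duration in stages:
        results.append(await stage(name, duration))
    return results


async def run_parallel(stages: list[tuple[str, float]]) -> list[str]:
    # Independent stages start together: latency is roughly the longest duration.
    return list(await asyncio.gather(*(stage(n, d) for n, d in stages)))


def timed(runner, stages: list[tuple[str, float]]) -> float:
    start = time.perf_counter()
    asyncio.run(runner(stages))
    return time.perf_counter() - start


if __name__ == "__main__":
    stages = [("acoustic", 0.10), ("diarization", 0.06), ("emotion", 0.04)]
    print(f"sequential: {timed(run_sequential, stages):.2f}s")
    print(f"parallel:   {timed(run_parallel, stages):.2f}s")
```

Stages that depend on another stage's output, such as punctuation applied to a transcript, stay on the critical path; overlapping them requires pipelining across chunks instead, as in the acoustic decoding and language model refinement example. This also only helps when the awaited work really runs outside the Python process; CPU-bound code inside one event loop would not overlap.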

Scheduling challenges in mixed workloads

Speech inference rarely runs in isolation. In production environments, audio workloads often coexist with text generation, embeddings or background analytics.

Naive scheduling causes contention. Long-lived streaming sessions can block short text requests, while batch jobs can starve real-time audio pipelines.

Effective scheduling prioritizes audio inference explicitly. Streaming workloads receive reserved capacity or higher scheduling priority, ensuring predictable latency even under load. Background workloads are throttled or routed to separate GPU pools.
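A minimal sketch of explicit prioritization, assuming three workload classes and a single dispatch queue per GPU pool. It uses Python's `heapq` with a sequence counter as tie-breaker, a standard pattern for keeping FIFO order within equal priorities; `PriorityScheduler`, `PRIORITY` and the class names are hypothetical.

```python
import heapq
import itertools
from typing import Optional

# Lower number is served first. The classes and their order are illustrative assumptions.
PRIORITY = {"audio_stream": 0, "text": 1, "background": 2}


class PriorityScheduler:
    """Hypothetical dispatcher: strict priority across workload classes, FIFO within one."""

    def __init__(self) -> None:
        self._heap: list[tuple[int, int, str, str]] = []
        self._seq = itertools.count()  # tie-breaker preserves submission order per class

    def submit(self, kind: str, job_id: str) -> None:
        heapq.heappush(self._heap, (PRIORITY[kind], next(self._seq), kind, job_id))

    def next_job(self) -> Optional[tuple[str, str]]:
        if not self._heap:
            return None
        _, _, kind, job_id = heapq.heappop(self._heap)
        return kind, job_id


if __name__ == "__main__":
    sched = PriorityScheduler()
    for kind, job in [("background", "b1"), ("text", "t1"), ("audio_stream", "a1"),
                      ("audio_stream", "a2"), ("text", "t2")]:
        sched.submit(kind, job)
    order = []
    while (job := sched.next_job()) is not None:
        order.append(job[1])
    print(order)  # ['a1', 'a2', 't1', 't2', 'b1']
```

Strict priority like this can starve background work indefinitely under sustained audio load, which is why the approach above pairs it with throttling or separate GPU pools.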

Networking and data movement considerations

Audio inference pipelines are sensitive to networking delays. Audio frames, intermediate representations and partial outputs must move quickly between components.

High-bandwidth, low-latency networking is essential, particularly in distributed deployments where stages are split across nodes. Slow interconnects introduce jitter and variability that degrade the user experience.

Architectures that minimize unnecessary data movement and colocate tightly coupled stages perform best at scale.

Scaling speech inference elastically

Audio traffic is often bursty. Call volumes spike during peak hours. Live events generate sudden demand. Static capacity planning leads to either idle GPUs or dropped streams.

Elastic scaling allows inference capacity to track demand. However, scaling must be fast. Slow GPU provisioning introduces audible glitches and degraded service.

Low-latency audio systems benefit from predictive scaling based on historical patterns and real-time signals such as active stream count and frame backlog.
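A predictive sizing rule can be sketched as combining a demand forecast with the two live signals named above. `desired_replicas` and all its parameters are hypothetical; the forecast is assumed to come from historical traffic patterns, and per-GPU capacity figures would come from load testing your own models. Because provisioning is not instant, the forecast should look at least as far ahead as the time it takes a new GPU replica to start serving.

```python
import math


def desired_replicas(
    active_streams: int,
    forecast_streams: int,
    frame_backlog: int,
    streams_per_gpu: int,
    backlog_per_gpu: int,
    headroom: float = 0.2,
    min_replicas: int = 1,
) -> int:
    """Hypothetical GPU replica target for a streaming speech service.

    - Size for the larger of current and forecast streams, plus headroom, so
      capacity is in place before a spike instead of after it.
    - Add replicas to drain queued frames, since a backlog means the current
      GPUs are already falling behind real time.
    """
    planned_streams = max(active_streams, forecast_streams) * (1 + headroom)
    stream_gpus = math.ceil(planned_streams / streams_per_gpu)
    backlog_gpus = math.ceil(frame_backlog / backlog_per_gpu)
    return max(min_replicas, stream_gpus + backlog_gpus)


if __name__ == "__main__":
    # Forecast spike of 150 streams at 40 streams per GPU, no backlog yet.
    print(desired_replicas(90, 150, 0, streams_per_gpu=40, backlog_per_gpu=200))  # 5
    # Same forecast, but 250 frames already queued.
    print(desired_replicas(90, 150, 250, streams_per_gpu=40, backlog_per_gpu=200))  # 7
```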

Observability for real-time audio systems

Optimizing audio inference without observability is nearly impossible. Teams must track end-to-end latency, frame processing time, jitter, dropped frames and GPU utilization.

Traditional metrics such as average latency are insufficient. Tail latency and variance matter more. A system that is fast most of the time but occasionally stalls is unusable for speech.

Detailed telemetry enables teams to identify bottlenecks in decoding, scheduling or data movement and refine architectures iteratively.
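The gap between average and tail latency is easy to demonstrate. The sketch below summarizes per-frame latencies with Python's `statistics` module and a nearest-rank p99; the function name and the sample data (1.5% of frames stalling at 800 ms) are illustrative assumptions, not measurements.

```python
import math
import statistics


def latency_summary(samples_ms: list[float]) -> dict[str, float]:
    """Mean, median, nearest-rank p99 and max of a non-empty latency sample."""
    ordered = sorted(samples_ms)
    rank = math.ceil(0.99 * len(ordered))  # nearest-rank: smallest value covering 99%
    return {
        "mean": statistics.fmean(ordered),
        "p50": statistics.median(ordered),
        "p99": ordered[rank - 1],
        "max": ordered[-1],
    }


if __name__ == "__main__":
    # 985 frames at 20 ms and 15 stalled frames at 800 ms.
    samples = [20.0] * 985 + [800.0] * 15
    print(latency_summary(samples))
    # mean is about 31.7 ms and p50 is 20 ms, but p99 and max are both 800 ms.
```

A dashboard showing only the mean would report about 32 ms here and hide the stalls that users actually notice.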

Designing for reliability under load

Audio applications amplify failure modes. Dropped frames, stalled streams or delayed responses are immediately noticeable to users.

Inference systems must be resilient. Graceful degradation strategies, such as reducing model complexity under load or temporarily lowering output quality, help maintain service continuity.

These strategies require tight integration between inference logic and infrastructure control.
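Graceful degradation can be sketched as a tier selector that steps down to cheaper model variants as load rises, preferring reduced quality over dropped streams. The tier names, their relative costs, and `select_tier` are hypothetical; real relative costs would come from profiling your own models.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    relative_cost: float  # GPU time per stream relative to the full model


# Hypothetical tiers, from highest quality to cheapest.
TIERS = [
    ModelTier("full", 1.0),
    ModelTier("distilled", 0.5),
    ModelTier("small", 0.25),
]


def select_tier(active_streams: int, capacity_full_streams: int) -> ModelTier:
    """Return the highest-quality tier whose total cost fits current capacity.

    capacity_full_streams is how many streams the pool can serve with the full
    model. If even the cheapest tier overloads the pool, it is still returned:
    degraded output is preferred over dropping streams.
    """
    for tier in TIERS:
        if active_streams * tier.relative_cost <= capacity_full_streams:
            return tier
    return TIERS[-1]


if __name__ == "__main__":
    print(select_tier(80, 100).name)   # full
    print(select_tier(150, 100).name)  # distilled
    print(select_tier(300, 100).name)  # small
```

A production controller would likely add hysteresis, switching back up only after load has stayed below a threshold for a while, so sessions do not flap between tiers.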

Building speech inference systems that scale

Low-latency speech inference is a holistic problem. Model optimization alone cannot compensate for poor scheduling, insufficient networking or rigid scaling mechanisms.

Successful systems combine streaming execution, intelligent scheduling, adaptive scaling and detailed observability into a cohesive architecture. They treat audio inference as a first-class workload rather than adapting generic inference pipelines.

As speech and audio interfaces become more central to AI-driven products, low-latency inference infrastructure becomes a strategic asset.

At GMI Cloud, we provide inference-optimized GPU infrastructure with intelligent scheduling, high-bandwidth networking and elastic scaling designed to keep audio and speech workloads responsive at production scale.

Frequently Asked Questions About Low-Latency Inference for Speech and Audio Applications

1. Why is low-latency inference so critical for speech and audio applications?

Audio interactions are continuous and time-bound, so even small delays can break the natural flow. When latency creeps in, you get awkward pauses, overlapping speech, or audio streams that feel out of sync.

2. What makes audio inference more latency-sensitive than text-based AI?

Audio arrives as a steady stream of small chunks rather than a single request. Those frames have to be processed incrementally while keeping context over time, so queueing and batching delays show up immediately as jitter or lag.

3. Why is streaming inference the default approach for production speech systems?

Speech systems typically need partial outputs continuously as frames arrive, not a single response at the end. Streaming keeps the experience responsive, but it also creates long-lived requests that need careful scheduling so they don’t block other work.

4. How does context and state management affect speech inference performance?

Speech models depend on temporal context and state across frames, which increases memory pressure and limits how many streams can run at once. If context isn’t managed efficiently, concurrency drops and teams are forced to scale GPU capacity more aggressively.

5. How do speech systems balance latency and throughput without ruining the user experience?

If you push too hard for latency, GPUs can stay underutilized and cost per stream climbs. If you push too hard for throughput, you introduce buffering and delays that users notice right away. To balance the two, systems often route workloads based on how strict their real-time requirements are.
