This article explains how inference workloads can be scaled automatically on GMI Cloud, focusing on why inference requires a fundamentally different approach to scaling and how performance, cost and responsiveness can be balanced under volatile, real-world traffic.

From this article, you’ll learn:

- why inference workloads are harder to scale than training jobs

- which scaling signals actually reflect real inference pressure

- how separating latency-sensitive and throughput-oriented traffic improves stability

- how GMI Cloud avoids cold starts while keeping GPU capacity elastic

- why adaptive batching is a key lever for efficient scaling

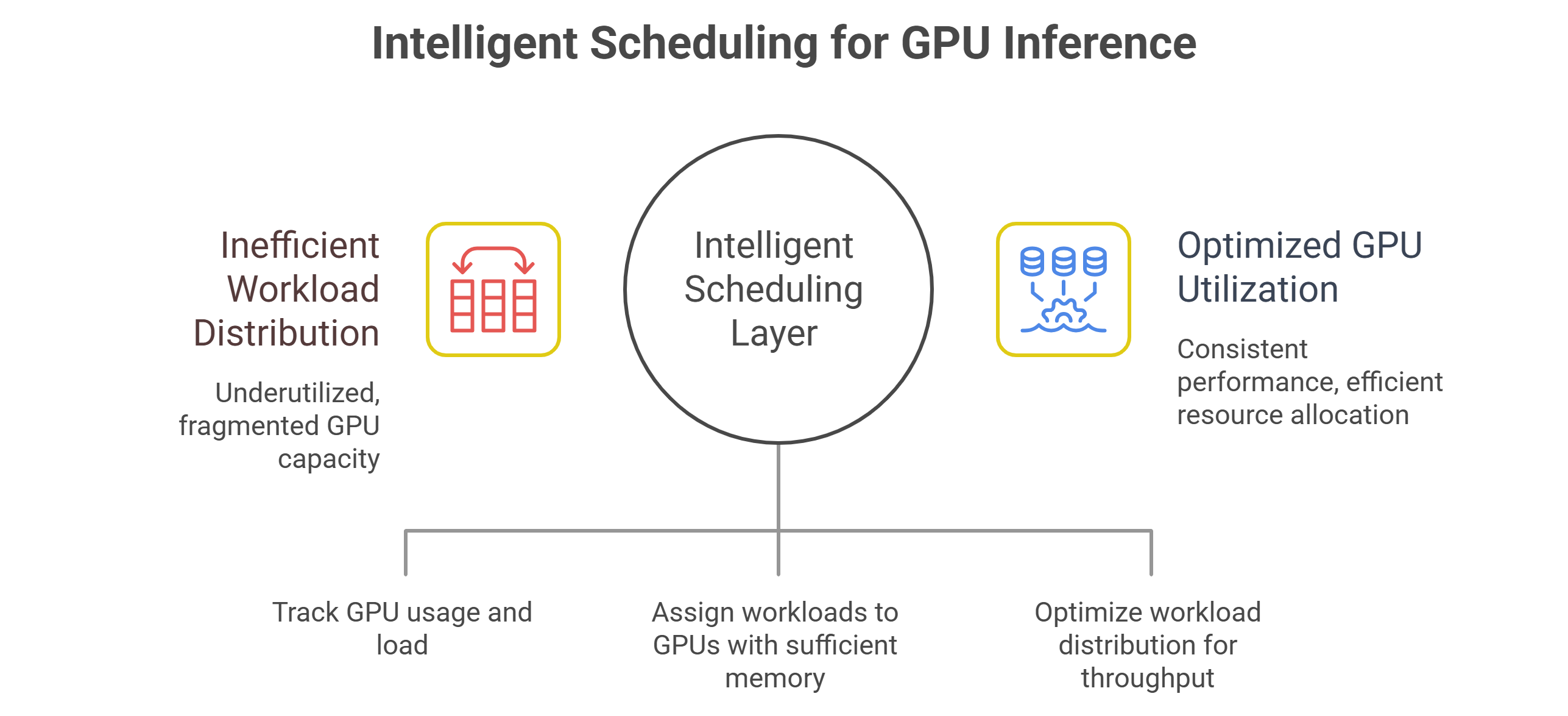

- how intelligent scheduling prevents fragmented and underutilized GPU capacity

- how cost-aware scaling balances reserved and on-demand resources

- why observability is essential for refining autoscaling behavior over time

- how multi-model and multi-tenant inference environments can scale safely

Inference workloads are inherently volatile. Traffic patterns shift by time of day, product launches create sudden spikes, and agentic or multimodal systems can multiply compute demand unpredictably.

Static provisioning struggles in this environment. Overprovisioning wastes budget, while underprovisioning degrades latency and reliability. Automatic scaling is no longer a convenience but a requirement for production AI systems.

Scaling inference effectively is not just about adding more GPUs when traffic increases. It requires coordination across scheduling, batching, routing and cost controls to ensure performance remains stable while utilization stays high.

GMI Cloud approaches inference scaling as a system-level problem rather than a single autoscaling rule.

Why inference scaling is fundamentally different from training

Training workloads are relatively predictable. Jobs are submitted, resources are allocated, and completion time can be estimated with reasonable accuracy. Inference behaves very differently. Requests arrive continuously, often in bursts, and their computational cost varies based on input size, model type and pipeline complexity.

Inference systems must respond in real time. Scaling decisions that are too slow lead to queue buildup and latency spikes. Decisions that are too aggressive inflate costs and create idle capacity. Automatic scaling for inference must therefore operate on tighter feedback loops than traditional compute scaling.

Scaling signals that actually matter

Many platforms attempt to scale inference based on high-level metrics such as CPU usage or instance count. These signals are often too coarse to reflect real inference pressure.

Effective inference scaling relies on signals such as queue depth, request arrival rate, batch saturation, GPU memory pressure and tail latency. These metrics indicate not just how busy the system is, but how close it is to violating performance targets.

On GMI Cloud, scaling decisions are driven by inference-specific telemetry rather than generic infrastructure metrics. This allows capacity to be added or removed in response to real workload behavior rather than coarse proxies that lag behind it.
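As an illustration of how the signals listed above might be combined, the following minimal sketch normalizes each one against a target and takes the worst. It is not GMI Cloud's implementation: the `InferenceTelemetry` fields, the `pressure` function and the thresholds `slo_ms` and `max_queue` are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass


@dataclass
class InferenceTelemetry:
    """Hypothetical snapshot of one inference pool (field names are assumptions)."""
    queue_depth: int          # requests waiting to be scheduled
    arrival_rate: float       # requests per second entering the queue
    service_rate: float       # requests per second the pool can complete
    batch_saturation: float   # mean batch size / max batch size, in 0..1
    gpu_mem_used_frac: float  # fraction of GPU memory in use, in 0..1
    p99_latency_ms: float     # observed tail latency


def pressure(t: InferenceTelemetry, slo_ms: float, max_queue: int) -> float:
    """Return the largest normalized signal; 1.0 or more means a target is at risk."""
    if t.service_rate > 0:
        load_ratio = t.arrival_rate / t.service_rate
    else:
        load_ratio = float("inf")  # nothing is being served at all
    signals = [
        t.queue_depth / max_queue,
        load_ratio,
        t.batch_saturation,
        t.gpu_mem_used_frac,
        t.p99_latency_ms / slo_ms,
    ]
    return max(signals)
```

Taking the maximum rather than an average means a single saturated signal, such as tail latency, is enough to flag pressure even when the others look healthy.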

Separating latency-sensitive and throughput-oriented workloads

One of the most important scaling strategies is workload separation. Not all inference requests have the same urgency. Some require immediate execution, while others can tolerate short delays in exchange for batching efficiency.

Automatic scaling works best when inference traffic is segmented into execution pools based on latency tolerance and resource requirements. Latency-critical requests scale independently from batch-friendly workloads, preventing one from starving the other.

This separation allows GMI Cloud to scale different parts of the inference pipeline independently, preserving responsiveness without sacrificing utilization.
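A rough sketch of the segmentation described above: each request declares how long it can wait, and a router places it in one of two pools that can then be scaled separately. The `Request`, `Pool` and `route` names and the 500 ms threshold are assumptions for illustration, not part of any GMI Cloud API.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Request:
    request_id: str
    max_wait_ms: int  # how long the caller can tolerate queueing


@dataclass
class Pool:
    name: str
    queue: deque = field(default_factory=deque)


def route(req: Request, interactive: Pool, batch: Pool, threshold_ms: int = 500) -> str:
    """Send latency-critical requests to one pool and delay-tolerant ones to another."""
    target = interactive if req.max_wait_ms <= threshold_ms else batch
    target.queue.append(req)
    return target.name
```

Because each pool keeps its own queue, the depth of the batch queue never influences scaling decisions for the interactive pool, and vice versa.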

Elastic GPU allocation without cold-start penalties

Cold starts are a major obstacle to inference scaling. Spinning up GPUs too slowly results in dropped requests or degraded latency during traffic spikes. Spinning them up too early wastes capacity.

GMI Cloud mitigates this by combining fast provisioning with intelligent pre-scaling. Historical traffic patterns, queue trends and pipeline behavior inform when and where GPU capacity should be added. This reduces the window where new workloads wait for resources to become available.

Elastic scaling is further supported by lightweight deployment mechanisms that allow inference workers to initialize quickly without lengthy setup or model loading delays.
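One simple way to reason about pre-scaling is to extrapolate the recent arrival rate over the time it takes new capacity to come online, then size the pool for that forecast. The sketch below uses a plain linear trend as a stand-in; the article does not say which forecasting method GMI Cloud uses, and `replicas_needed`, `per_replica_rps`, `lead_steps` and `headroom` are hypothetical.

```python
import math


def replicas_needed(rate_history, per_replica_rps, lead_steps, headroom=0.2):
    """Estimate replicas required `lead_steps` intervals from now.

    rate_history: recent arrival rates (requests/s), oldest first.
    lead_steps:   provisioning lead time, measured in sampling intervals.
    headroom:     extra fractional capacity kept as a safety margin.
    """
    if not rate_history:
        forecast = 0.0
    elif len(rate_history) < 2:
        forecast = rate_history[-1]
    else:
        # Average change per interval across the window.
        slope = (rate_history[-1] - rate_history[0]) / (len(rate_history) - 1)
        forecast = max(rate_history[-1] + slope * lead_steps, 0.0)
    return math.ceil(forecast * (1 + headroom) / per_replica_rps)
```

For example, with rates of 100, 120 and 140 requests/s, a two-interval lead time and 50 requests/s per replica, the forecast is 180 requests/s and the function asks for 5 replicas.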

Adaptive batching as a scaling lever

Batching is often treated as a static configuration, but it plays a central role in scaling inference efficiently. As traffic increases, batch sizes should grow to improve throughput. As traffic subsides, batch sizes should shrink to preserve latency.

Automatic scaling on GMI Cloud integrates batching behavior into scaling decisions. Instead of adding GPUs immediately, the system first increases batching efficiency where possible. Only when batching reaches its practical limits does it expand GPU capacity.

This approach reduces unnecessary scaling events and keeps cost-per-query stable under fluctuating load.
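The "batch first, GPUs second" ordering described above can be sketched as a small decision function. This is an illustrative assumption about how such a policy could look, not the platform's actual logic; `PoolState`, `adjust` and the water marks are invented for the example.

```python
from dataclasses import dataclass


@dataclass
class PoolState:
    replicas: int
    batch_size: int
    max_batch_size: int      # practical limit set by memory and latency budget
    min_batch_size: int = 1


def adjust(state: PoolState, queue_depth: int, high_water: int, low_water: int) -> str:
    """Grow the batch under load; add a replica only once batching is maxed out."""
    if queue_depth > high_water:
        if state.batch_size < state.max_batch_size:
            state.batch_size = min(state.batch_size * 2, state.max_batch_size)
            return "grow_batch"
        state.replicas += 1
        return "add_replica"
    if queue_depth < low_water and state.batch_size > state.min_batch_size:
        # Load has subsided: shrink batches to recover latency.
        state.batch_size = max(state.batch_size // 2, state.min_batch_size)
        return "shrink_batch"
    return "hold"
```

The gap between `low_water` and `high_water` acts as a dead band, so small fluctuations in queue depth do not cause the batch size to oscillate.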

Intelligent scheduling across GPU pools

Scaling inference is not just about adding GPUs – it is about assigning work to the right GPUs. Different models and request types place different demands on memory, compute and execution time.

GMI Cloud’s scheduling layer routes workloads based on real-time availability, memory headroom and execution characteristics. This prevents scenarios where new GPUs are added but remain underutilized because workloads are poorly distributed.

By scaling and scheduling together, the platform avoids fragmented capacity and uneven performance across the cluster.
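To make the fragmentation point concrete, here is a minimal best-fit placement sketch: a workload goes to the GPU that leaves the least memory stranded, which keeps large devices free for large models. Best-fit is a swapped-in textbook heuristic, not a description of GMI Cloud's scheduler, and `Gpu` and `pick_gpu` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Gpu:
    gpu_id: str
    free_mem_gb: float
    active_requests: int


def pick_gpu(gpus: List[Gpu], required_mem_gb: float) -> Optional[Gpu]:
    """Best-fit placement: smallest leftover memory, then least busy."""
    candidates = [g for g in gpus if g.free_mem_gb >= required_mem_gb]
    if not candidates:
        return None  # caller may treat this as a signal to add capacity
    return min(
        candidates,
        key=lambda g: (g.free_mem_gb - required_mem_gb, g.active_requests),
    )
```

Returning `None` when nothing fits is what ties scheduling back to scaling: capacity is added only when existing GPUs genuinely cannot host the work.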

Cost-aware scaling decisions

Automatic scaling must consider cost alongside performance. Scaling too aggressively can deliver excellent latency while silently inflating operating expenses. Scaling too conservatively protects budget but risks performance degradation.

GMI Cloud incorporates cost-awareness into scaling decisions by balancing reserved and on-demand GPU capacity. Baseline demand is served by reserved resources, while burst traffic is handled by elastic capacity that scales up and down as needed.

This hybrid approach allows teams to keep baseline costs predictable while still responding quickly to demand spikes.
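The reserved-plus-burst split can be expressed as simple arithmetic. In the sketch below the function names and the hourly rates are placeholders, not GMI Cloud pricing; the only assumption carried over from the text is that reserved capacity serves the baseline and on-demand capacity covers the overflow.

```python
def split_capacity(required_gpus: int, reserved_gpus: int):
    """Return (reserved GPUs in use, on-demand GPUs needed) for a given demand."""
    reserved_used = min(required_gpus, reserved_gpus)
    on_demand = max(required_gpus - reserved_gpus, 0)
    return reserved_used, on_demand


def hourly_cost(required_gpus, reserved_gpus, reserved_rate, on_demand_rate):
    """Reserved GPUs are billed whether used or not; on-demand only when needed."""
    _, on_demand = split_capacity(required_gpus, reserved_gpus)
    return reserved_gpus * reserved_rate + on_demand * on_demand_rate
```

With 8 reserved GPUs at a hypothetical rate of 2.0 per hour and on-demand at 3.0 per hour, a spike to 12 GPUs costs 8 × 2.0 + 4 × 3.0 = 28.0 per hour, while a quiet hour needing 5 GPUs still costs 16.0.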

Observability-driven refinement

No autoscaling system is perfect out of the box. Traffic patterns evolve, models change and pipelines grow more complex. Continuous refinement depends on visibility.

GMI Cloud provides detailed observability into scaling behavior, allowing teams to see how GPU utilization, queue depth and latency respond to scaling events. This feedback loop enables tuning of scaling thresholds, batching strategies and routing policies over time.

Automatic scaling becomes more accurate as the system learns from real usage rather than static assumptions.
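A small example of the kind of check this feedback loop relies on: comparing tail latency before and after a scaling event. The sketch uses the nearest-rank percentile definition, and `percentile` and `scaling_effect` are hypothetical helpers rather than part of any GMI Cloud tooling.

```python
import math


def percentile(samples, pct: int):
    """Nearest-rank percentile of a non-empty list of latency samples."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    # Integer arithmetic first avoids floating-point drift in the rank.
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def scaling_effect(latencies_before, latencies_after, pct: int = 99):
    """Change in tail latency across a scaling event; negative means improvement."""
    return percentile(latencies_after, pct) - percentile(latencies_before, pct)
```

If a scale-up repeatedly produces little or no improvement, that suggests the threshold that triggered it is responding to the wrong signal and is a candidate for tuning.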

Supporting multi-model and multi-tenant environments

Inference scaling becomes more challenging in environments where multiple models and teams share infrastructure. A single model surge should not starve other workloads or trigger unnecessary scaling across the entire cluster.

GMI Cloud isolates scaling domains by model and workload class, ensuring that scaling decisions are localized. This prevents cascading effects and preserves fairness across tenants while still allowing each workload to scale independently.
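The idea of localized scaling domains can be sketched as per-domain replica targets with per-domain bounds, so that a surge in one model is capped by its own ceiling instead of consuming the whole cluster. The domain names, limits and the `scale_domains` function are assumptions made for this example.

```python
import math


def scale_domains(load_rps, per_replica_rps, limits):
    """Compute a replica target for each scaling domain independently.

    load_rps:        {domain: current requests per second}
    per_replica_rps: {domain: requests per second one replica can serve}
    limits:          {domain: (min_replicas, max_replicas)}
    """
    desired = {}
    for domain, rps in load_rps.items():
        lo, hi = limits[domain]
        need = math.ceil(rps / per_replica_rps[domain])
        desired[domain] = max(lo, min(need, hi))  # clamp to the domain's own bounds
    return desired
```

In this sketch a "chat" domain that would need 10 replicas but is capped at 8 stays at 8, and an "embed" domain sharing the cluster keeps its own target regardless of the chat surge.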

Scaling as a continuous control loop

In mature inference systems, scaling is not a binary event. It is a continuous control loop that adjusts capacity, batching and scheduling in response to real-time conditions.

GMI Cloud treats scaling as an integrated part of the inference control plane rather than a bolt-on feature. By coordinating telemetry, scheduling and capacity management, it enables inference workloads to grow and shrink smoothly without operational intervention.
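One step of such a control loop can be sketched with a proportional rule: scale the replica count by the ratio of the observed metric to its target, and do nothing while the ratio stays inside a tolerance band. This mirrors the rule used by the Kubernetes Horizontal Pod Autoscaler and is offered only as a familiar reference point, not as GMI Cloud's algorithm; the function name, default tolerance and replica bounds are assumptions.

```python
import math


def desired_replicas(current, observed, target, tolerance=0.1, min_r=1, max_r=64):
    """One control-loop step: proportional scaling with a dead band.

    observed / target is the load ratio, for example p99 latency over its SLO
    or queue depth per replica over its target.
    """
    ratio = observed / target
    if abs(ratio - 1.0) <= tolerance:
        return current  # close enough to target: avoid churn
    return max(min_r, min(math.ceil(current * ratio), max_r))
```

Calling this on every telemetry tick, with the result fed back in as `current`, yields gradual adjustments in both directions rather than a single threshold-triggered jump.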

As inference becomes the dominant driver of AI infrastructure cost and performance, automatic scaling is no longer optional. Platforms that can scale intelligently, quickly and cost-effectively will define how production AI systems operate at scale.

GMI Cloud enables this by combining inference-aware telemetry, intelligent scheduling and elastic GPU infrastructure into a unified system designed specifically for production inference.

Frequently Asked Questions About Automatically Scaling Inference Workloads on GMI Cloud

1. Why do inference workloads need automatic scaling in production?

Inference demand can swing sharply with time of day, launches, or changes in how requests are processed, and agentic or multimodal flows can multiply compute needs without warning. Automatic scaling helps avoid the two common failure modes of static provisioning: paying for idle GPUs or letting queues build until latency and reliability suffer.

2. Why is scaling inference different from scaling training?

Training jobs are typically scheduled in batches with more predictable runtimes and resource needs, while inference arrives continuously and often in bursts with per-request cost that varies by input size, model type, and pipeline complexity. Because inference is user-facing and real time, scaling decisions have to react on much tighter feedback loops to prevent queue buildup and tail-latency spikes.

3. Which signals matter most for autoscaling inference on GMI Cloud?

High-level metrics like CPU usage can miss what’s actually happening in an inference pipeline. The signals that reflect real pressure are queue depth, request arrival rate, batch saturation, GPU memory pressure, and tail latency, because they show how close the system is to breaking performance targets.

4. How does separating latency-sensitive and throughput-oriented workloads improve scaling?

Not every request needs the same urgency, so putting everything in one pool can cause background work to block interactive traffic or force inefficient micro-batches. By segmenting requests into execution pools based on latency tolerance and resource needs, capacity can scale independently for fast-path traffic and batch-friendly workloads, keeping responsiveness without sacrificing utilization.

5. How does GMI Cloud reduce cold-start problems during traffic spikes?

Cold starts hurt when GPUs come online too slowly, creating delays or dropped requests, while provisioning capacity too early wastes money on idle GPUs. GMI Cloud combines fast provisioning with intelligent pre-scaling driven by historical patterns, queue trends, and pipeline behavior, and it relies on lightweight deployment so workers can initialize quickly without long setup or model-loading delays.
