AI generative workflows transform isolated prompts into structured systems that generate, refine and scale creative outputs across text, images, video and audio.

Key things to know:

- What AI generative workflows are and how they differ from single prompt interactions

- Why prompts alone struggle to scale in real creative and production environments

- How workflows structure generation, evaluation, transformation and routing into repeatable pipelines

- The role of automation, iteration loops and parallel processing in improving speed and efficiency

- Why workflows make generative AI economically viable for teams and businesses

- How multimodal workflows coordinate text, image, video and audio generation within one pipeline

- The importance of reproducibility, collaboration and versioning when deploying AI systems in production

- How visual workflow tools make complex AI pipelines accessible to creators and teams

Most people encounter generative AI through prompts – you type an instruction, press enter, and get an output. That interaction is powerful, but it only captures a small fraction of how generative AI is actually used once it moves beyond experimentation.

In real creative and production environments, a single prompt is rarely enough. Outputs need to be refined, combined, evaluated, routed and repeated at scale. This is where AI generative workflows come in. They transform isolated model calls into structured pipelines that can reliably produce images, video, audio and text across many iterations.

Understanding the shift from prompts to workflows is essential for anyone building with generative AI today. More importantly, it is essential for anyone thinking about business impact.

Creative teams and enterprises both need to move fast without wasting effort. Traditionally, work has been bound by a tradeoff triangle: it can be quick, good or cheap, but you only get to pick two. Let’s explore why AI generative workflows change that equation.

From single prompts to systems

A prompt is a request. A workflow is a system.

When creators first experiment with generative models, the focus is on finding the right wording to elicit a good response. That works for exploration, but it breaks down quickly in real use cases. The moment you need consistency, scale or collaboration, prompts alone become fragile.

Generative workflows address this by defining a sequence of steps rather than a single interaction. Instead of “generate once and hope”, workflows formalize how outputs are produced, refined and reused. They encode intent into structure.

A simple example might involve generating text, evaluating it against a set of criteria, and regenerating until the output meets a threshold. More complex workflows might branch into multiple variations, feed outputs into image or video generators, or combine several models into a single pipeline.
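The simple generate, evaluate and regenerate loop described above can be sketched in a few lines. This is a minimal illustration, not a real model integration: `generate` and `score` are hypothetical stand-ins for a model call and an evaluator, and the threshold and attempt limit are arbitrary assumptions.

```python
import random

def generate(prompt: str, rng: random.Random) -> str:
    """Hypothetical stand-in for a model call: returns a draft of random length."""
    return " ".join([prompt] * rng.randint(3, 12))

def score(text: str) -> float:
    """Hypothetical evaluator: rewards drafts that are close to 8 words long."""
    return max(0.0, 1.0 - abs(len(text.split()) - 8) / 8)

def refine_until(prompt: str, threshold: float, max_attempts: int, seed: int = 0):
    """Regenerate until a draft meets the threshold or attempts run out."""
    rng = random.Random(seed)
    best_text, best_score, attempt = "", -1.0, 0
    for attempt in range(1, max_attempts + 1):
        draft = generate(prompt, rng)
        current = score(draft)
        if current > best_score:
            best_text, best_score = draft, current
        if current >= threshold:
            break  # good enough, stop spending generations
    return best_text, best_score, attempt

text, best, attempts = refine_until("cat", threshold=0.75, max_attempts=50)
```

The stopping rule lives in `refine_until`, not in the prompt, which is the point: the threshold and the retry budget can be tuned without touching the wording sent to the model.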

The key idea is that the logic of creation lives outside the prompt. And once logic lives outside the prompt, velocity becomes measurable and optimizable. Instead of relying on human trial and error, workflows automate refinement loops, parallel exploration and validation. This is where “quick” becomes systematically accelerated, not rushed.

Why prompts stop scaling

Prompts are inherently stateless. Each request starts from scratch, with no awareness of prior context unless it is manually injected. As projects grow more complex, this leads to repetition, inconsistency and wasted effort.

Creators often find themselves copying and pasting outputs between tools, manually adjusting parameters, or rerunning generations because one step failed. Teams struggle to reproduce results because the “process” exists only in someone’s prompt history.

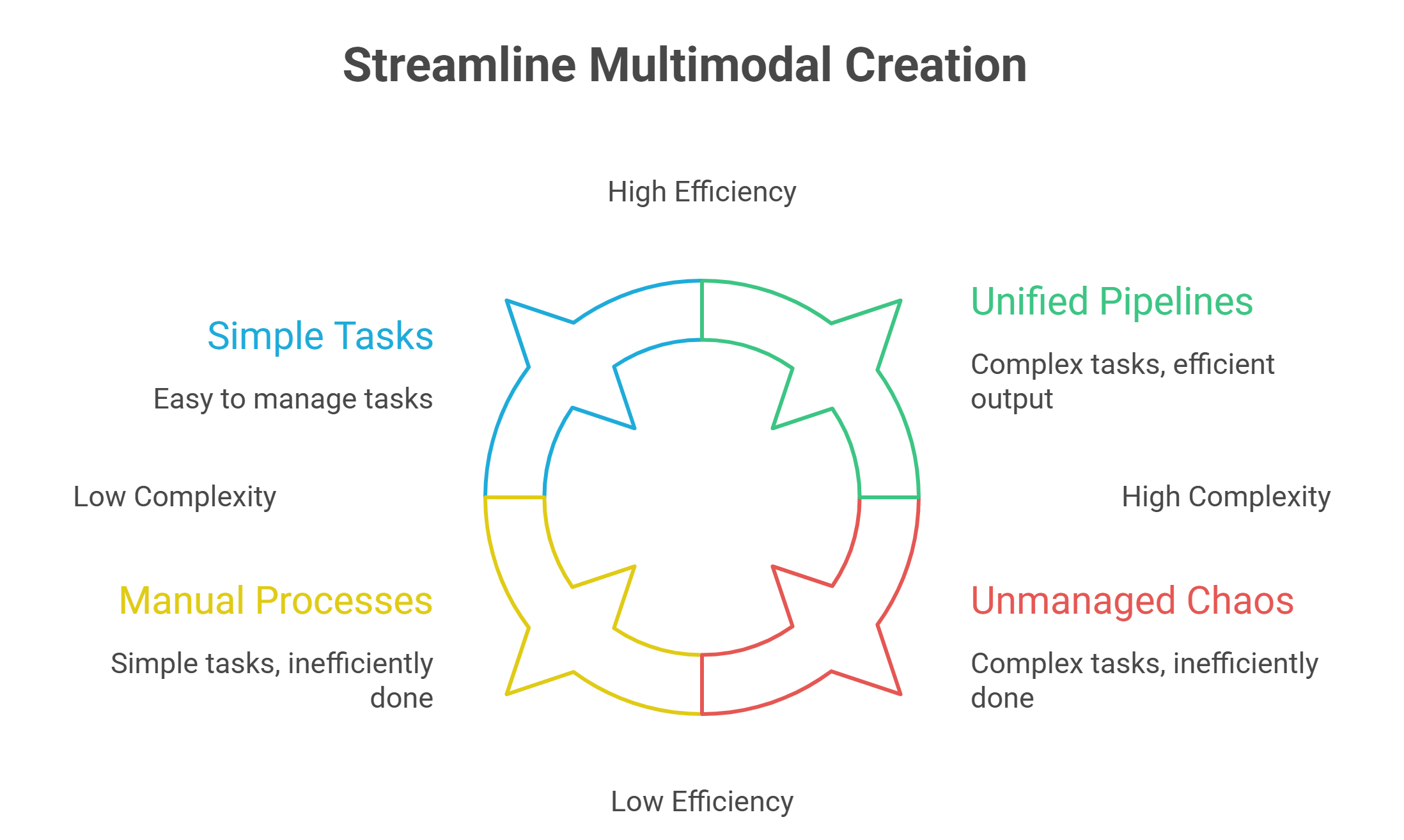

This fragility becomes especially visible in multimodal creation. Image, video and audio generation rarely happen in isolation. They depend on text descriptions, metadata, timing and post-processing. Coordinating all of that through prompts alone quickly becomes unmanageable.

Generative workflows solve this by externalizing state, control flow and orchestration.
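One way to picture externalized state is a small record that travels with the run and can be saved and resumed. The sketch below is an assumption-laden illustration: `WorkflowState`, the step names and the placeholder outputs are all hypothetical, and a real system would call models where the placeholder string is built.

```python
import json
from dataclasses import dataclass, field, asdict

@dataclass
class WorkflowState:
    """Hypothetical record of what a run needs in order to resume or be audited."""
    brief: str
    step: int = 0
    outputs: dict = field(default_factory=dict)

STEPS = ["outline", "draft", "caption"]  # hypothetical step names

def run_next(state: WorkflowState) -> WorkflowState:
    name = STEPS[state.step]
    # Placeholder for a model call; each step can read earlier outputs.
    state.outputs[name] = f"{name} for '{state.brief}' (sees {sorted(state.outputs)})"
    state.step += 1
    return state

# Run one step, persist the state, then resume from the saved copy.
state = run_next(WorkflowState(brief="spring campaign"))
saved = json.dumps(asdict(state))
resumed = WorkflowState(**json.loads(saved))
while resumed.step < len(STEPS):
    resumed = run_next(resumed)
```

Because the state is plain data, a failed run can pick up at the step where it stopped instead of starting from scratch.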

Without workflows, the “cheap” part of the triangle collapses. Manual handoffs, repeated generations and inconsistent outputs inflate hidden labor costs. Workflows compress those costs by making processes reusable and reproducible. The same structure can run thousands of times without reinventing the logic. That is what makes AI generation economically viable at scale.

What defines an AI generative workflow

At a high level, an AI generative workflow is a structured pipeline that coordinates multiple model invocations and processing steps to produce an outcome. It defines not just what to generate, but how generation happens.

Most workflows include some combination of generation, evaluation, transformation and routing. Outputs from one step become inputs to the next. Conditions determine whether to branch, retry or stop.
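As a rough sketch of how those four step types fit together, the example below chains generation, evaluation, routing and transformation. Every function here is a hypothetical placeholder, and the 40-character limit is an arbitrary assumption chosen only to give the router something to decide on.

```python
def generate(brief: str) -> dict:
    # Placeholder for a model call.
    return {"text": f"Headline about {brief}", "attempts": 1}

def evaluate(item: dict) -> dict:
    # Hypothetical check: accept text of at most 40 characters.
    item["ok"] = len(item["text"]) <= 40
    return item

def shorten(item: dict) -> dict:
    # Retry path: trim the text and count the extra attempt.
    item["text"] = item["text"][:40].rstrip()
    item["attempts"] += 1
    return item

def transform(item: dict) -> dict:
    # Final formatting step applied only to accepted outputs.
    item["text"] = item["text"].upper()
    return item

def route(item: dict) -> str:
    # The condition that decides whether to continue or retry.
    return "transform" if item["ok"] else "shorten"

def run(brief: str, max_retries: int = 3) -> dict:
    item = evaluate(generate(brief))
    while route(item) == "shorten" and item["attempts"] <= max_retries:
        item = evaluate(shorten(item))
    return transform(item) if item["ok"] else item

short = run("tea")
long = run("a very long product name that goes on and on")
```

The output of each step is the input to the next, and the only branching logic sits in `route`, so the retry policy can change without rewriting any individual step.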

Importantly, workflows are reusable. Once defined, they can be run repeatedly with different inputs while preserving the same structure. This is what enables reproducibility.

Workflows also make parallelism possible. Instead of waiting for one generation to finish before starting the next, multiple paths can execute simultaneously. This dramatically reduces iteration time, especially for creative exploration.
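A minimal sketch of parallel exploration, using Python's standard `concurrent.futures` module. The `render_variation` function is a hypothetical stand-in for a model call (the sleep simulates latency and the score formula is arbitrary); only the thread pool is real library code.

```python
import time
from concurrent.futures import ThreadPoolExecutor

def render_variation(seed: int) -> dict:
    """Hypothetical model call: sleeps to simulate latency, returns a fake score."""
    time.sleep(0.05)
    return {"seed": seed, "score": (seed * 37) % 100 / 100}

def explore(seeds: list[int], workers: int = 4) -> dict:
    """Render all variations concurrently and keep the highest-scoring one."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(render_variation, seeds))
    return max(results, key=lambda r: r["score"])

best = explore(list(range(8)))
```

Threads suit this case because the work is waiting on a remote model rather than computing locally; with four workers, eight 50 ms calls finish in roughly two rounds instead of eight.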

For image generation, this often means achieving quick, good and cheap simultaneously: multiple variations rendered in parallel, evaluated automatically, and refined without manual intervention. For video generation, workflows typically optimize for good and cheap: execution takes somewhat longer because of polish and post-processing, but the added time is small compared to traditional production cycles. The result is consistent, efficient velocity aligned with real business goals.

From experimentation to production pipelines

The difference between a workflow and a production pipeline is not complexity – it is reliability.

Production pipelines must behave predictably. They need to produce consistent outputs, handle failures gracefully, and scale under load. They also need observability, so teams can understand what happened when something goes wrong.
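Graceful failure handling and observability can be as simple as wrapping each step in a retry loop that logs what happened. The sketch below uses only the standard library; `run_step`, the retry count and the backoff delays are illustrative assumptions, and `flaky_render` simulates a step that fails twice before succeeding.

```python
import logging
import time

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("workflow")

def run_step(name, fn, retries=3, base_delay=0.01):
    """Run one step, retrying with exponential backoff and logging each attempt."""
    for attempt in range(1, retries + 1):
        start = time.perf_counter()
        try:
            result = fn()
            elapsed = time.perf_counter() - start
            log.info("step=%s attempt=%d status=ok seconds=%.3f", name, attempt, elapsed)
            return result
        except Exception as exc:
            log.warning("step=%s attempt=%d status=error error=%s", name, attempt, exc)
            if attempt == retries:
                raise  # surface the failure once the retry budget is spent
            time.sleep(base_delay * 2 ** (attempt - 1))

calls = {"n": 0}

def flaky_render():
    # Simulated step that times out on its first two calls.
    calls["n"] += 1
    if calls["n"] < 3:
        raise TimeoutError("simulated GPU timeout")
    return "frame.png"

result = run_step("render", flaky_render)
```

The log lines give a per-step record of attempts and timings, which is the raw material a team needs when something goes wrong.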

This is where infrastructure matters. Local setups and ad hoc scripts often work during experimentation but fail under real-world conditions. GPU limits, environment inconsistencies, and manual orchestration introduce friction just when speed matters most.

Production-grade AI workflows require execution environments designed for concurrency, parallelism and scale. Without that foundation, workflows remain theoretical.

This is precisely where platforms like GMI Studio become critical. GMI Studio is built around orchestrated, visual generative workflows designed for production, not isolated prompts. It combines model access, orchestration logic and scalable GPU execution so that teams can actually achieve the quick–good–cheap balance in practice, not just in theory.

Multimodal creation makes workflows essential

Multimodal generative AI – combining text, image, video and audio – makes workflows unavoidable.

A single image generation often depends on structured text prompts. Video generation may require scene descriptions, timing metadata and post-processing. Audio workflows involve transcription, synthesis and alignment. When these modalities interact, coordination becomes the central challenge.

Workflows provide a way to manage this complexity. They allow creators to define how modalities interact, how data flows between them, and how outputs are validated before moving forward.
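To make the idea concrete, here is a hedged sketch of a text to image to video pipeline with a parallel audio track and a validation gate between stages. The `Asset` type and all four generator functions are hypothetical placeholders that return strings instead of real media; the 0.1 second sync tolerance is an arbitrary assumption.

```python
from dataclasses import dataclass

@dataclass
class Asset:
    kind: str          # "text", "image", "video" or "audio"
    payload: str       # placeholder for real media data
    seconds: float = 0.0

def write_script(brief: str) -> Asset:
    return Asset("text", f"Scene: {brief}")

def render_image(script: Asset) -> Asset:
    return Asset("image", f"image<{script.payload}>")

def animate(image: Asset, seconds: float) -> Asset:
    return Asset("video", f"video<{image.payload}>", seconds)

def narrate(script: Asset, seconds: float) -> Asset:
    return Asset("audio", f"audio<{script.payload}>", seconds)

def validate(asset: Asset, expected_kind: str) -> Asset:
    """Gate between stages: reject empty or mistyped outputs before moving on."""
    if asset.kind != expected_kind or not asset.payload:
        raise ValueError(f"expected non-empty {expected_kind}, got {asset.kind}")
    return asset

def produce(brief: str, seconds: float = 6.0) -> dict:
    script = validate(write_script(brief), "text")
    image = validate(render_image(script), "image")
    video = validate(animate(image, seconds), "video")
    audio = validate(narrate(script, seconds), "audio")
    if abs(video.seconds - audio.seconds) > 0.1:
        raise ValueError("audio and video are out of sync")
    return {"video": video, "audio": audio}

out = produce("sunrise over harbor")
```

The script feeds both the image and the narration, so the data flow between modalities is explicit in `produce` rather than implied by copy and paste between tools.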

Instead of juggling separate tools, creators work within a unified pipeline that reflects the logic of their creative process.

This orchestration layer is what turns generative AI from a novelty into a production engine. When text feeds image, image feeds video, and video integrates audio in a structured loop, the workflow ensures quality consistency while keeping production fast and cost-controlled. That is the business leverage of multimodal AI.

Visual workflows and creator accessibility

One of the biggest barriers to adopting workflows has historically been accessibility. Traditional workflow systems require code, configuration and orchestration knowledge. That puts them out of reach for many creators.

Visual, node-based workflows change this dynamic. By representing pipelines as graphs, they make structure explicit and editable. Creators can see how steps connect, where data flows, and how changes propagate.
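Behind a node-based canvas, a pipeline is typically a dependency graph executed in order. The sketch below shows one plausible way to do that with Python's standard `graphlib` module (Python 3.9+). The node names and `run_node` are hypothetical; nothing here describes how any particular product implements its graphs.

```python
from graphlib import TopologicalSorter

# Each node maps to the set of nodes it depends on (hypothetical names).
graph = {
    "script": set(),
    "storyboard": {"script"},
    "voiceover": {"script"},
    "video": {"storyboard"},
    "final_cut": {"video", "voiceover"},
}

def run_node(name: str, inputs: dict) -> str:
    # Placeholder for real work: records which upstream results were consumed.
    return f"{name}({', '.join(inputs[dep] for dep in sorted(inputs))})"

def execute(graph: dict) -> dict:
    """Run every node after all of its dependencies have produced a result."""
    results = {}
    for node in TopologicalSorter(graph).static_order():
        inputs = {dep: results[dep] for dep in graph[node]}
        results[node] = run_node(node, inputs)
    return results

results = execute(graph)
```

Editing the pipeline means editing the `graph` dictionary: adding an edge changes what a node receives, and the execution order follows automatically.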

This approach mirrors the evolution of other creative tools. Video editing moved from linear timelines to non-linear editors. Audio production adopted visual mixers and signal chains. Generative AI is following the same path.

Visual workflows are not just easier to use – they encourage better system design. They also directly support velocity: when creators can visually adjust pipelines instead of rewriting scripts, iteration accelerates. Fewer bottlenecks mean faster turnaround, which means competitive advantage. In business terms, that is “quick” without sacrificing “good”.

Reproducibility and trust

One of the biggest challenges enterprises face with generative AI is trust. If outputs vary unpredictably, it becomes difficult to use AI in production contexts like marketing, media or customer-facing applications.

Workflows address this by making generation repeatable at the system level. Even when models introduce randomness, the surrounding pipeline ensures that the same process, with the same steps, parameters and checks, is followed every time.
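One common way to tame randomness is to record the seed for every sampled step so a run can be replayed. The sketch below illustrates the idea with Python's `random` module; `sample_variation` is a hypothetical stand-in for a model call, and real models may not be fully reproducible even with a fixed seed, depending on hardware and provider.

```python
import random

def sample_variation(prompt: str, seed: int) -> str:
    """Hypothetical sampling step driven by a local, seeded generator."""
    rng = random.Random(seed)  # local generator, no shared global state
    palette = rng.choice(["warm", "cool", "mono"])
    return f"{prompt} [{palette}, strength={rng.random():.2f}]"

def run(prompt: str, seeds: list[int]) -> list[dict]:
    # Storing the seed next to each output makes the run replayable later.
    return [{"seed": s, "output": sample_variation(prompt, s)} for s in seeds]

first = run("poster", [1, 2, 3])
replay = run("poster", [1, 2, 3])
```

Replaying with the same seeds gives the same outputs in this sketch, while changing the seed list is how the workflow deliberately asks for new variations.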

This reproducibility is critical for collaboration, and it’s also what makes AI generation “cheap” in the long term. Teams can share workflows instead of prompt snippets. Changes can be reviewed, versioned and tested. The workflow becomes a shared asset rather than tribal knowledge.
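Versioning a workflow can start with something as small as a content hash of its definition. The following is a minimal sketch under the assumption that a workflow is stored as a JSON-compatible dictionary; the field names and model identifier are hypothetical.

```python
import hashlib
import json

def workflow_version(definition: dict) -> str:
    """Short, stable fingerprint of a workflow definition."""
    # Canonical JSON: sorted keys and fixed separators, so key order is irrelevant.
    canonical = json.dumps(definition, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]

v1 = workflow_version({"steps": ["generate", "evaluate"], "model": "example-model", "threshold": 0.8})
same = workflow_version({"threshold": 0.8, "model": "example-model", "steps": ["generate", "evaluate"]})
v2 = workflow_version({"steps": ["generate", "evaluate"], "model": "example-model", "threshold": 0.9})
```

Attaching this fingerprint to every output lets a team trace any asset back to the exact workflow that produced it, and any reviewed change to a step or parameter yields a new version.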

Why infrastructure matters for workflows

Workflows are only as effective as the infrastructure that runs them. Sequential execution on a single GPU quickly becomes a bottleneck. Parallel workflows require scheduling, resource isolation and high-throughput execution.

Inference-heavy pipelines, agentic loops and multimodal generation all benefit from distributed execution. Without it, iteration slows and costs rise.

This is why workflow-native platforms are emerging. They combine visual orchestration with GPU infrastructure designed to execute pipelines efficiently. Creators do not need to manage GPUs directly, but they benefit from concurrency, batching and intelligent scheduling behind the scenes.
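Batching is one of the simpler scheduling ideas to illustrate: group requests so that each model invocation handles several at once. In this sketch `fake_infer_batch` is a hypothetical batch-capable model call and the batch size of 3 is arbitrary; real platforms choose batch sizes based on model and GPU memory.

```python
from typing import Callable, Iterator

def batches(items: list[str], size: int) -> Iterator[list[str]]:
    """Yield consecutive groups of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]

def run_batched(prompts: list[str], batch_size: int,
                infer_batch: Callable[[list[str]], list[str]]):
    """Send prompts to the model in groups and count the invocations."""
    outputs, calls = [], 0
    for group in batches(prompts, batch_size):
        outputs.extend(infer_batch(group))
        calls += 1
    return outputs, calls

def fake_infer_batch(group: list[str]) -> list[str]:
    # Hypothetical model call that processes a whole batch at once.
    return [f"image for: {p}" for p in group]

prompts = [f"variation {i}" for i in range(7)]
outputs, calls = run_batched(prompts, batch_size=3, infer_batch=fake_infer_batch)
```

Seven prompts are served by three invocations instead of seven, which is where much of the cost saving comes from when per-call overhead dominates.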

The goal is to make infrastructure invisible while preserving performance. In practice, this invisible infrastructure is what enables the quick–good–cheap triangle to hold. Speed comes from parallelism and orchestration. Quality comes from structured refinement and model specialization. Cost efficiency comes from reuse, batching and reproducibility. Remove infrastructure, and the triangle collapses again.

From prompts to products

The most important shift enabled by generative workflows is psychological. AI stops being a novelty and starts being a system.

Instead of asking “What prompt should I try next?” creators ask “What pipeline produces the result I want?” That mindset unlocks scale. It enables teams to build products, not just outputs.

Whether the goal is generating marketing assets, producing video content, synthesizing audio, or powering interactive experiences, workflows provide the structure needed to move from experimentation to production.

Platforms like GMI Studio exist precisely to support this transition – giving creators and enterprises a visual, orchestrated environment where AI generation becomes systematic, scalable and economically aligned with business objectives.

The future of generative AI creation

As generative models continue to improve, the differentiator will not be access – it will be execution. Everyone will have models. Not everyone will have workflows that turn those models into reliable creative systems.

AI generative workflows represent the bridge between raw capability and real-world impact. They allow creators and enterprises to harness generative AI without being constrained by prompts, manual processes or fragile setups.

The future of generative AI belongs to those who treat creation as a pipeline, not a prompt.


Frequently Asked Questions About AI Generative Workflows: From Prompts to Production Pipelines

What is the difference between a prompt and an AI generative workflow?

A prompt is a single request made to a model, while a workflow is a structured system made up of multiple steps. A workflow can generate, evaluate, refine, route, and reuse outputs in a repeatable way.

Why do prompts stop working well at scale?

Prompts become difficult to manage when teams need consistency, collaboration, and volume. They are fragile because each request starts fresh, which leads to repeated work, inconsistent results, and manual handoffs between tools.

What defines an AI generative workflow in practice?

An AI generative workflow is a reusable pipeline that coordinates multiple model calls and processing steps. It usually includes some mix of generation, transformation, evaluation, and decision-making so outputs can move through a defined process instead of relying on trial and error.

Why are workflows so important for multimodal AI creation?

When text, image, video, and audio all interact, the process becomes too complex to manage through prompts alone. Workflows make it possible to coordinate how each modality connects, how data moves between steps, and how outputs are checked before continuing.

How do visual workflows help creators and teams?

Visual workflows make the structure of a pipeline easier to understand and edit. Instead of relying only on code or scattered prompt history, creators can see how steps connect, adjust the process more easily, and speed up iteration.
