Why Model-as-a-Service is the smartest way to build for AI in 2026

April 07, 2026

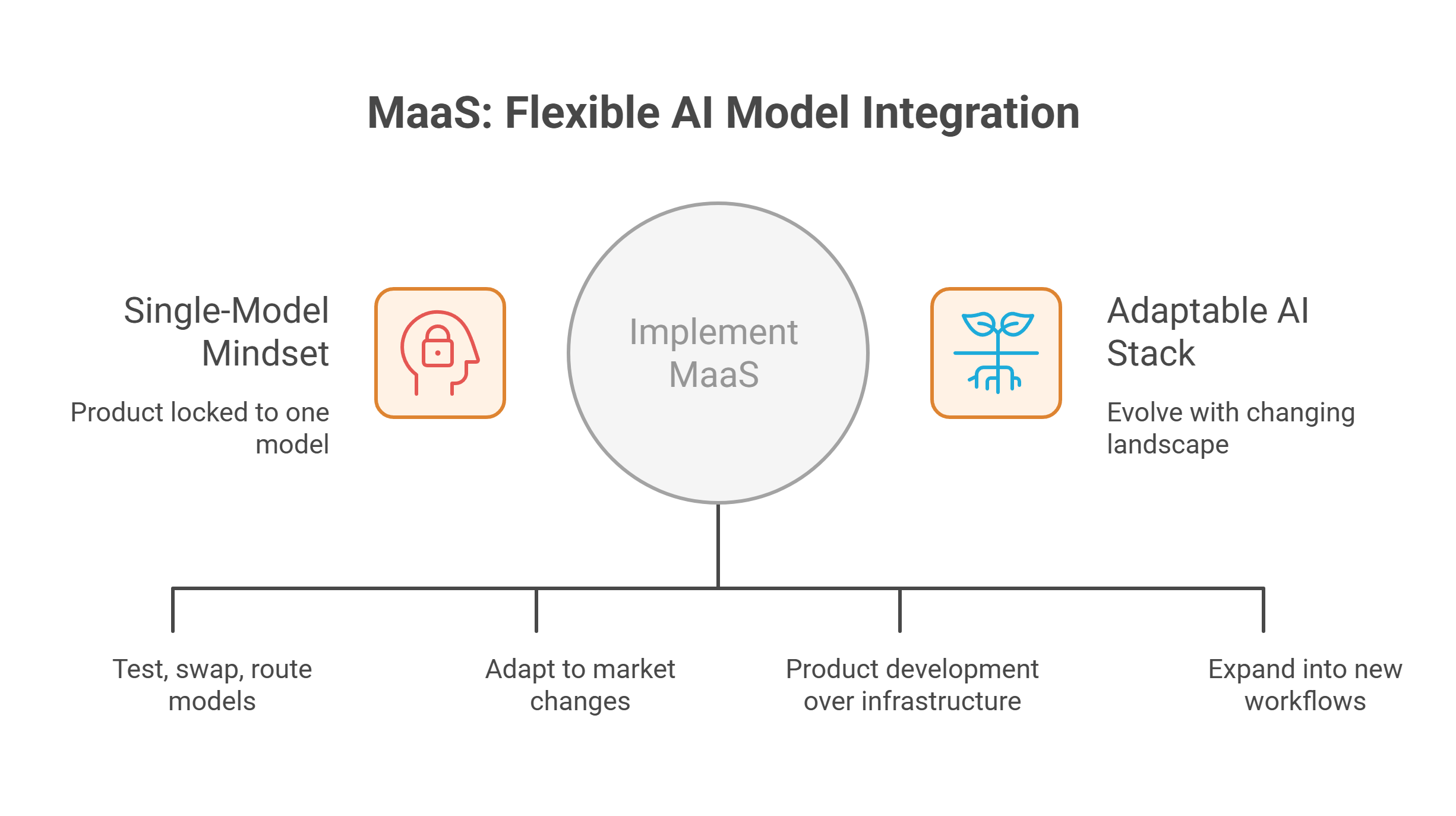

Model-as-a-Service transforms AI architecture by replacing rigid, single-model dependencies with flexible, service-based access to multiple models, enabling teams to adapt quickly as technology, costs, and use cases evolve.

Key things to know:

- Why building around a single AI model creates long-term limitations and lock-in risks

- How Model-as-a-Service introduces flexibility by decoupling products from specific models

- What model liquidity means and why it is critical for adapting to rapid AI changes

- How MaaS allows teams to switch, test, and combine models without rebuilding their stack

- Why flexibility at the model layer improves both technical and business decision-making

- How MaaS helps teams respond to changes in pricing, performance, and capabilities

- Why small and medium-sized teams benefit from MaaS without managing complex infrastructure

- How MaaS supports multimodal expansion across text, image, video, and audio workflows

- Why modular model access enables faster product iteration and lower risk

- How MaaS shifts focus from infrastructure complexity to product development and execution

- Why adaptable architectures outperform rigid systems in a fast-moving AI landscape

- How MaaS aligns with the need for scalable, future-proof AI product design

One of the most limiting things a team can do in AI is architect too much around one model too early. It often starts innocently enough. A team chooses the model that seems strongest at the time, builds prompts and product logic around it, and gradually turns that choice into a dependency. In the short term, that can feel efficient; in the longer term, it often creates friction. Models improve, pricing shifts and use cases expand too quickly for that kind of rigidity to hold up.

That is why Model-as-a-Service is such a smart way to build for AI in 2026. MaaS changes the logic of the stack. Instead of designing the product around one fixed model, it gives teams a way to access the models they need through a service layer. That means the architecture can stay more flexible even as models, costs and capabilities change. The real value is not just model access, but the ability to build without locking the whole product into one narrow path too early.

This matters because the AI layer of a product is now much more dynamic than it was even a year or two ago. Teams are constantly balancing quality, speed, cost, context and modality support. One model may be strong for one task and a weak fit for another. A model that works well today may no longer be the best option in a few months. A stack built around flexibility is simply better suited to that reality.

The single-model mindset creates avoidable limits

A lot of AI teams still treat model choice as the most important architectural decision. That mindset made more sense when the market was smaller and more stable. It makes less sense in 2026. The pace of change is too fast, and the number of viable closed and open-source models keeps growing.

The problem is not only that better models appear. It is that products built too tightly around one model become harder to adapt when they do. Prompts, workflows, performance expectations and even user-facing features begin to depend on a single provider or model family. Once that happens, switching means reworking all of those pieces at once. Teams start protecting old choices instead of improving the product.

That is why MaaS matters. It gives teams a more resilient way to build. Instead of assuming one model will remain the best foundation, it assumes that the model layer should stay flexible. That is a smarter long-term strategy because it reflects how AI actually works now: fast-moving, competitive and constantly changing.

MaaS makes model liquidity possible

The most important concept here is model liquidity. In simple terms, model liquidity means your product is not trapped by one model decision. You can test alternatives, swap models, route different tasks to different options, and respond to market changes without rebuilding the architecture every time.

That flexibility is not just technical. It has direct business value. If a better model appears, the team can evaluate it more easily. If pricing changes make another option more efficient, the stack can adapt. If a product expands into new workflows or new media types, the team has more room to choose what fits rather than forcing every use case through one model.
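To make the pricing point concrete, here is a minimal Python sketch, assuming the team keeps a small catalog of candidate models, each with a per-token price and a score from the team's own evaluation set. The model names, prices and scores are invented for illustration, and `pick_model` is a hypothetical helper, not part of GMI Cloud's or any other MaaS API. The rule it encodes is simple: among models that clear a quality bar, choose the cheapest.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    model: str                     # hypothetical model identifier
    usd_per_million_tokens: float  # illustrative price, not a real quote
    eval_score: float              # score on the team's own eval set, 0.0-1.0


def pick_model(candidates: list[Candidate], min_score: float) -> Candidate:
    """Return the cheapest candidate whose eval score meets the quality bar."""
    eligible = [c for c in candidates if c.eval_score >= min_score]
    if not eligible:
        raise ValueError("no candidate meets the quality bar")
    return min(eligible, key=lambda c: c.usd_per_million_tokens)


catalog = [
    Candidate("model-x", 10.0, 0.91),
    Candidate("model-y", 2.5, 0.88),
    Candidate("model-z", 0.8, 0.72),  # cheapest, but below the 0.85 bar
]
print(pick_model(catalog, min_score=0.85).model)  # model-y

# A price cut on model-x changes the answer without touching product code.
catalog[0] = Candidate("model-x", 2.0, 0.91)
print(pick_model(catalog, min_score=0.85).model)  # model-x
```

Keeping the quality bar explicit stops a model from being chosen only because it is cheaper. In a real setup the scores would come from the team's own evaluations and would need refreshing as models and prices change.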

This is exactly why MaaS is such a useful foundation. It makes model liquidity practical instead of theoretical. GMI Cloud MaaS is built around this idea. Rather than forcing teams to choose one path and stay there, it gives them access to top open and closed models through a single service approach. That means teams can build with more freedom and less lock-in from the beginning.

It is a better fit for small and medium-sized teams

Another reason MaaS is the smart choice is that it fits the reality of how most teams actually build. Not every company wants to manage a complex inference stack from day one. Small and medium-sized teams often need something simpler: reliable model access, strong performance and the ability to move quickly without turning infrastructure into the whole project.

That is where MaaS becomes especially practical. Instead of committing early to one model and building too much around it, teams can focus on product development, workflows and user experience. The model layer stays flexible, while the team stays focused on shipping.

The GMI Cloud MaaS platform is designed to give teams access to leading models in a way that is performant, efficient and usable for most small to medium-sized teams. That matters because for many businesses, the smarter move is not to customize everything immediately. It is to keep the AI layer flexible enough to grow with the product.

It supports how AI products are expanding

AI products are also becoming more complex in what they need from models. Many teams are no longer building around text alone. They are moving into image, video, audio and multimodal workflows. In that environment, a stack designed around one model becomes even more restrictive.

A product may start with one simple use case and then expand into several others. A team might need one model for text generation, another for reasoning, and another for visual or multimodal work. That does not mean the product lacks focus. It means the product is growing in the direction the market is already moving.

MaaS supports that kind of growth because it keeps the model layer modular. Teams do not need to rebuild the whole stack every time a new capability is added. They can continue pulling the models they need through the same service logic. That makes expansion easier, faster and less risky.
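One way to picture a modular model layer is a routing table that maps each capability to a model behind a single lookup function. The sketch below is a minimal Python illustration under that assumption; the provider and model names, `route` and `with_route` are all hypothetical, and the code does not call GMI Cloud or any real provider API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelChoice:
    provider: str  # hypothetical provider label
    model: str     # hypothetical model identifier


# Routing table: capability -> model. Product code only ever calls route();
# which model serves each capability lives in this one mapping.
ROUTES: dict[str, ModelChoice] = {
    "text_generation": ModelChoice("provider-a", "text-general"),
    "reasoning": ModelChoice("provider-b", "reasoner-large"),
}


def route(capability: str, routes: dict[str, ModelChoice]) -> ModelChoice:
    """Look up the model for a capability, failing loudly if none is configured."""
    if capability not in routes:
        raise ValueError(f"no model configured for {capability!r}")
    return routes[capability]


def with_route(
    routes: dict[str, ModelChoice], capability: str, choice: ModelChoice
) -> dict[str, ModelChoice]:
    """Return a copy of the table with one capability added or re-pointed."""
    updated = dict(routes)
    updated[capability] = choice
    return updated


# Expanding into images is one new entry, not a new integration path.
routes_v2 = with_route(ROUTES, "image_captioning", ModelChoice("provider-c", "vision-medium"))
# Swapping the reasoning model later is the same kind of one-entry change.
routes_v3 = with_route(routes_v2, "reasoning", ModelChoice("provider-a", "reasoner-fast"))

print(route("image_captioning", routes_v3).model)  # vision-medium
print(route("reasoning", routes_v3).provider)      # provider-a
```

Because call sites ask for a capability rather than a specific model, adding image work or swapping the reasoning model changes one table entry instead of every place the product calls a model. A production setup would also need per-provider request formats, credentials and fallbacks, which this sketch leaves out.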

The smartest AI stack stays adaptable

At a deeper level, MaaS reflects a more mature way of thinking about AI products. The goal is not to pick one model and hope it remains the right answer. The goal is to build a system that can evolve as the landscape changes. That means keeping the model layer flexible while still making it easy to ship.

This is why Model-as-a-Service is more than a convenience. It is a smart architectural choice. It gives teams access to strong models while reducing the risk of early lock-in. It supports experimentation without bottlenecks and production without unnecessary rigidity.

GMI Cloud MaaS is built around access to top open and closed-source models, engineered for performance and efficiency, and designed for teams that want flexibility without having to over-architect from the start. That is exactly why MaaS is such a strong fit for 2026.

Conclusion

Model-as-a-Service is a smarter way to build for AI in 2026 because it helps teams avoid getting locked into one model too early. As model quality, pricing and use cases keep changing, rigid architecture becomes a problem fast.

MaaS keeps the model layer flexible, supports model liquidity, and makes it easier to adapt over time. For GMI Cloud, that means giving teams access to leading models through a performant, efficient service layer that works especially well for small and medium-sized teams that need flexibility without overcomplicating their stack.


FAQ