San Jose GTC 2026 Wrap-Up

What this year’s biggest announcements mean at San Jose GTC 2026

March 24, 2026

GTC 2026 was a landmark week for the AI stack

NVIDIA Dynamo 1.0. OpenShell. NemoClaw. Vera Rubin NVL72. If you were paying attention at GTC this year, you noticed something: the most significant announcements weren't about new models. They were about the infrastructure layer that makes models useful in production:

- the software frameworks that coordinate distributed inference

- the agent runtimes that keep autonomous systems running

- and the next-generation hardware that changes what's economically possible at scale.

Here's what those announcements have in common: none of them work without purpose-built AI servers and infrastructure underneath them. Dynamo's rack-scale coordination requires NVLink-connected GPU clusters with the fabric bandwidth to match. Vera Rubin's throughput-per-watt gains require liquid-cooled data center infrastructure designed for that hardware generation. OpenShell requires a reliable, low-latency compute substrate that a general-purpose cloud wasn't built to provide.

This is where GMI Cloud has been operating since before GTC. For over a year, our Inference Engine has been operational, empowering AI developers through a vertically integrated stack. By owning the entire infrastructure from the physical datacenter floor to the inference API, we provide the end-to-end optimization required to support leading-edge AI applications and agents.

The announcements at GTC validate GMI Cloud's mission to support AI builders everywhere.

Dynamo Inference: already in production

GMI Cloud is part of the initial cohort of cloud providers working with NVIDIA Dynamo, their production-grade, open-source inference platform. Our AI stack also supports NVIDIA OpenShell runtime, helping extend this foundation from high-performance inference orchestration to the runtime layer needed for long-running autonomous agents.

While much of the industry is excited about what Dynamo 1.0 and the new software layer make possible, GMI Cloud's Inference Engine has been delivering those outcomes to production workloads since last year. GMI Cloud empowers AI builders globally by providing a comprehensive suite of production-ready tools on its MaaS platform, which supports over 150 models including MiniMax, Qwen, Llama, and DeepSeek. This robust infrastructure features real-time monitoring, dedicated and serverless endpoints, and advanced optimizations such as quantization, speculative decoding, and auto-scaling.

The reason this is possible isn't software sophistication alone. It's that GMI owns the full stack: the data centers, the networking, the GPU clusters, and the inference layer on top. That vertical ownership is what enables the kind of end-to-end optimization such as throughput per GPU, cost per token, routing architecture, and more, that pure-play inference providers running on third-party clouds cannot replicate.

Dynamo 1.0 formalizes the coordination layer that GMI's infrastructure has been built to support. As an initial launch partner, GMI's inference stack is ready for Dynamo 1.0 and NVIDIA OpenShell from day one.

NemoClaw and OpenShell: the agent runtime layer, live at launch

NVIDIA OpenShell is the runtime layer for long-running autonomous agents, an infrastructure primitive that keeps agentic systems running coherently across time and context. NemoClaw extends that further into the model and fine-tuning stack. Both launched at GTC with Day-0 support on GMI Cloud.

Read more about NVIDIA Dynamo and NVIDIA OpenShell here.

Vera Rubin NVL72: 10x throughput per watt, on GMI's global network

NVIDIA Vera Rubin NVL72 delivers up to 10x higher inference throughput per watt compared to Blackwell. GMI Cloud is deploying Vera Rubin across our global datacenter network -- and again, the hardware advantage only materializes on infrastructure built to support it. Vera Rubin is a liquid-cooled, rack-scale platform. It requires purpose-built facilities with the power density and thermal management to run it correctly.

The first Vera Rubin deployment is the Kagoshima AI Factory, announced below. Additional rollout details across GMI's global footprint will follow as deployments come online.

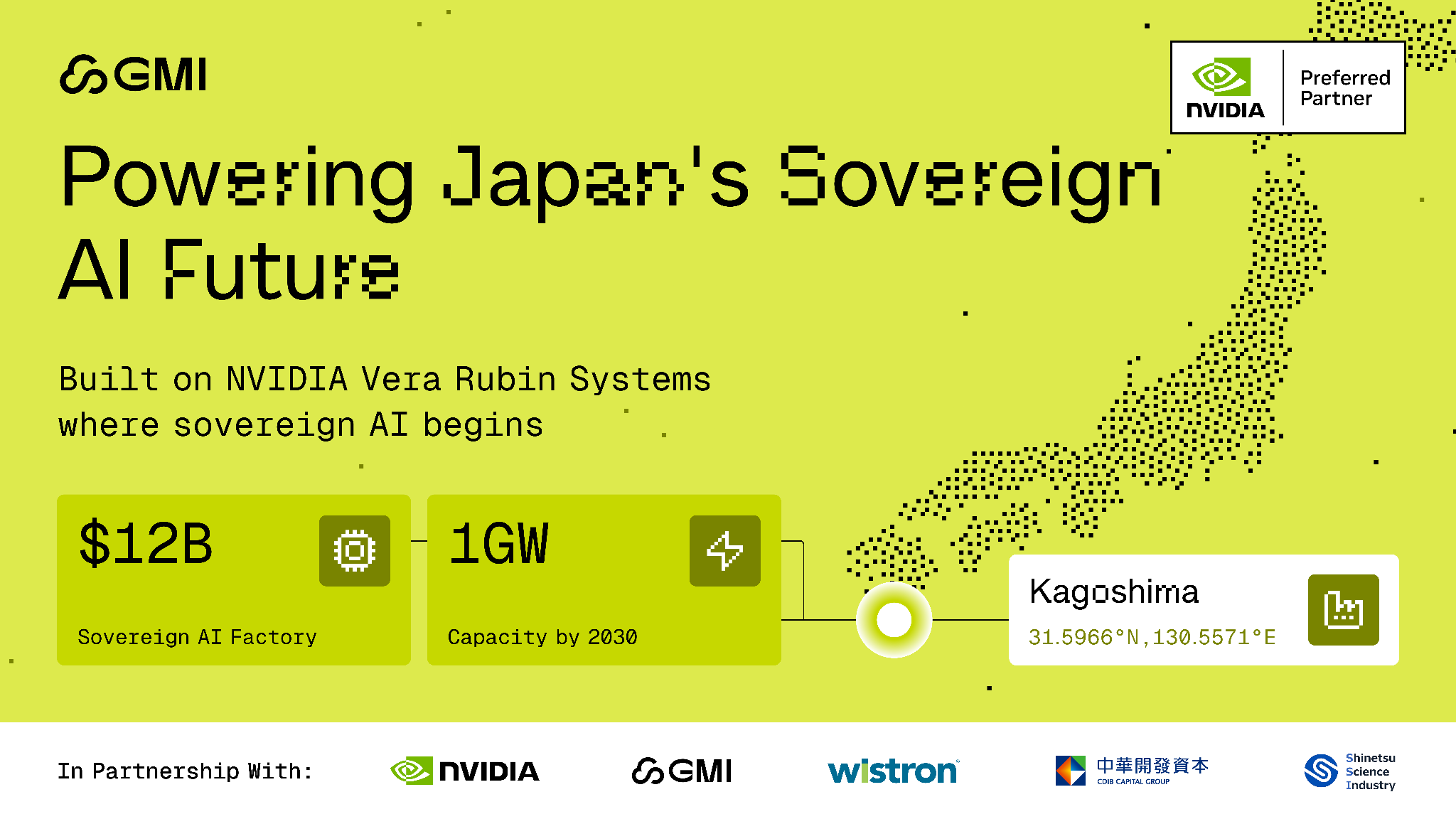

Kagoshima: Sovereign AI at 1GW Scale

The largest infrastructure announcement from GTC was the Kagoshima AI Factory, a $12B, 1GW facility at Circular Park in Satsumasendai, built on the site of a former power plant. Partners include CDIB Capital, Shinetsu Science Industry, Kai Shin Digital Infrastructure, Wistron Corporation, and the Kagoshima Prefectural Government, with official backing from Satsumasendai City.

At 36.8 hectares with dedicated transmission lines and 1GW of power capacity, this is Asia's largest sovereign AI project. The facility is purpose-built for physical AI workloads -- robotics, autonomous systems, and the advanced manufacturing applications that define Japan's industrial identity. Deployment is modular, beginning with the first 16 racks on Vera Rubin NVL72 and scaling toward full capacity by 2030.

The sustainability posture is designed in: approximately 65% of the facility's power will come from green and decarbonized sources including nuclear, solar, and hydropower, with ~80% of carbon credits available for offset use.

This isn't GMI's first sovereign AI deployment. The Taiwan AI Factory (TPE11) is already operational -- a 16 MW facility running over 7,000 GB300 liquid-cooled GPUs, with Phase 1 delivered Q2 2026 and full buildout completing Q4 2026. Kagoshima is what comes next.

The stack is the point

The pattern across everything GMI announced at GTC and everything NVIDIA announced that GMI is supporting is the same: the software layer and the hardware layer are not separable. Dynamo 1.0 and OpenShell don't perform on infrastructure that wasn't designed for them. Vera Rubin's efficiency numbers don't materialize in a general-purpose environment. Sovereign AI Factory scale doesn't happen without owned infrastructure, dedicated power, and the operational depth to run it.

GMI Cloud is building AI infrastructure for builders who understand that distinction. From the inference API to the datacenter floor, the stack is the product.

Build on GMI's MaaS platform at maas.gmicloud.ai

Colin Mo

Head of Content and Community

Build AI Without Limits

GMI Cloud helps you architect, deploy, optimize, and scale your AI strategies