AI video generation quickly becomes unstable without structured workflows, as maintaining temporal consistency, coordinating multiple steps, and managing multimodal elements requires system-level orchestration rather than isolated prompts.

Key things to know:

- Why AI video generation often breaks when treated as a single prompt-based process

- The difference between generating still images and maintaining continuity across video frames

- How structured workflows preserve character identity, lighting, camera angles and scene consistency

- The hidden complexity of AI video pipelines, including scripting, scene generation, motion interpolation and post-processing

- Why prompts alone struggle to manage high-dimensional and long-running video generation tasks

- How workflows introduce persistent state to maintain narrative and visual coherence across sequences

- The role of multimodal coordination between text, visuals, audio and metadata in video production

AI video generation looks deceptively simple from the outside. A prompt goes in, frames come out, and motion appears where there was none before. But anyone who has tried to use video generation beyond a demo quickly discovers how fragile the process is. Outputs drift in style, scenes lose continuity, characters change appearance, timing breaks, and results become impossible to reproduce.

These failures are rarely caused by the model alone. They stem from the absence of structured workflows. Video generation is inherently multi-stage, stateful and multimodal. Treating it as a single-shot inference problem almost guarantees breakdowns at scale.

For businesses, that breakdown translates directly into lost velocity: time wasted, GPU cycles burned, and inconsistent outputs that undermine both quality and cost efficiency.

Video is not just “images over time”

A common misconception is that video generation is simply image generation repeated across frames. In reality, video introduces constraints that images do not. Temporal consistency matters. Characters, lighting, camera angles and environments must persist across sequences. Small inconsistencies that are tolerable in still images become glaring when played back at speed.

Without workflows, these constraints are managed implicitly through prompts and manual fixes. That approach does not scale. Each new scene becomes an improvisation, and small changes ripple unpredictably through the output.

Structured workflows externalize these constraints. Instead of relying on the model to remember everything, workflows define what must stay constant and what can vary. This separation is critical for stability. It is also what protects the “good” in the classical quick–good–cheap equation: quality becomes structural rather than accidental.
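One way to picture this separation is as two kinds of data: a locked set of constraints shared by every shot, and a per-shot spec that is free to change. The sketch below is only an illustration. The class names, field names and the `refs/hero.png` path are assumptions made for the example, not part of any particular platform's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LockedConstraints:
    """Attributes that must stay constant across shots (hypothetical fields)."""
    character_ref: str  # e.g. path or ID of a reference image
    lighting: str
    style: str


@dataclass
class ShotSpec:
    """Attributes that are allowed to vary from shot to shot."""
    action: str
    camera: str
    duration_s: float


def build_request(locked: LockedConstraints, shot: ShotSpec) -> dict:
    """Merge the locked and variable fields into one generation request."""
    return {
        "character_ref": locked.character_ref,
        "lighting": locked.lighting,
        "style": locked.style,
        "action": shot.action,
        "camera": shot.camera,
        "duration_s": shot.duration_s,
    }


locked = LockedConstraints("refs/hero.png", "golden hour", "35mm film")
shots = [
    ShotSpec("walks into frame", "wide", 3.0),
    ShotSpec("turns to camera", "close-up", 2.0),
]
requests = [build_request(locked, shot) for shot in shots]
```

Because `LockedConstraints` is frozen, an accidental edit to the character reference or lighting raises an error instead of silently changing later shots.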

The hidden complexity of AI video pipelines

Every serious video generation system involves multiple steps, even if they are hidden behind a single interface. Scripts are generated or refined. Scenes are decomposed. Frames or segments are produced. Motion is interpolated. Audio is aligned. Outputs are post-processed.

When these steps are collapsed into a single call, control is lost. Debugging becomes guesswork. If something goes wrong, teams rerun everything from scratch.

Workflows expose these stages explicitly. Each step becomes addressable, testable and repeatable. Teams can adjust one part of the pipeline without destabilizing the rest. This modularity is what makes AI video economically viable: instead of regenerating entire sequences, teams iterate surgically, preserving both GPU cost and production quality.
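To make "iterate surgically" concrete, here is a minimal sketch of a staged pipeline with per-stage caching. It assumes each stage can be identified by its name, its parameters and the identity of its upstream result. The `Pipeline` class and the stand-in stage functions are hypothetical, and a real system would call models where these functions return strings.

```python
import hashlib
import json


class Pipeline:
    """Runs named stages in order and caches each stage's output."""

    def __init__(self, stages):
        self.stages = stages  # list of (name, function) pairs, run in order
        self.cache = {}       # cache key -> stage output
        self.executed = []    # names of stages that actually ran in the last call

    def run(self, params):
        self.executed = []
        upstream_key = ""
        output = None
        for name, fn in self.stages:
            # The key covers this stage's params and everything upstream of it.
            payload = json.dumps([name, params.get(name), upstream_key], sort_keys=True)
            key = hashlib.sha256(payload.encode()).hexdigest()
            if key not in self.cache:
                self.cache[key] = fn(output, params.get(name))
                self.executed.append(name)
            output = self.cache[key]
            upstream_key = key
        return output


# Stand-in stages; each one just records what it was given.
def script(_, p): return f"script({p})"
def scenes(prev, p): return f"scenes({prev}, {p})"
def render(prev, p): return f"render({prev}, {p})"
def post(prev, p): return f"post({prev}, {p})"


pipeline = Pipeline([("script", script), ("scenes", scenes), ("render", render), ("post", post)])
params = {"script": "v1", "scenes": 3, "render": {"fps": 24}, "post": "grade-a"}

pipeline.run(params)
first_run = list(pipeline.executed)    # every stage runs once

pipeline.run({**params, "post": "grade-b"})
second_run = list(pipeline.executed)   # only the changed stage reruns
```

Changing only the post-processing parameter leaves the script, scene and render results in the cache, so the expensive stages are not repeated. Changing an earlier stage would invalidate everything downstream of it, which is the behaviour you want.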

Why prompts collapse under video workloads

Prompts work best when the output space is small and loosely constrained. Video is neither. It is high-dimensional, long-running and sensitive to small variations.

A prompt that produces a compelling first frame may fail to maintain character identity across subsequent frames. A change intended to affect pacing may alter visual style unintentionally. As complexity increases, prompts become overloaded with instructions that conflict or degrade.

Structured workflows alleviate this by moving responsibility out of the prompt. Instead of encoding everything in language, workflows use configuration, references and state to guide generation. In workflow-first platforms like GMI Studio, this orchestration layer makes the prompt one input among many, not a fragile control system holding the entire production together.

Temporal consistency requires state

One of the most common failure modes in AI video generation is loss of continuity. Characters subtly change proportions. Backgrounds shift. Lighting flickers. These issues arise because the system lacks persistent state.

Workflows introduce state explicitly. Reference frames, embeddings or constraints can be carried forward across steps. Each generation builds on the last in a controlled way.
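A rough sketch of what "carrying state forward" can look like in code follows. It assumes the state consists of a fixed identity embedding plus a pointer to the last generated frame. `ContinuityState` and `generate_segment` are hypothetical names, and the function body is a stand-in for a real model call.

```python
from dataclasses import dataclass, replace
from typing import Optional, Tuple


@dataclass(frozen=True)
class ContinuityState:
    character_embedding: Tuple[float, ...]  # fixed identity anchor
    last_frame_id: Optional[str]            # updated after every segment
    segment_index: int = 0


def generate_segment(prompt, state):
    """Stand-in for a model call conditioned on the carried state."""
    segment = {
        "prompt": prompt,
        "conditioned_on": state.last_frame_id,
        "identity": state.character_embedding,
    }
    new_state = replace(
        state,
        last_frame_id=f"seg{state.segment_index}-last",
        segment_index=state.segment_index + 1,
    )
    return segment, new_state


state = ContinuityState(character_embedding=(0.12, 0.87, 0.45), last_frame_id=None)
segments = []
for prompt in ["hero enters", "hero sits", "hero speaks"]:
    segment, state = generate_segment(prompt, state)
    segments.append(segment)
```

Each segment is conditioned on the final frame of the one before it, while the identity embedding never changes. The prompt only has to describe what is new in the shot.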

This statefulness is essential for narrative coherence. Without it, video generation remains impressive but unreliable. With it, AI video can consistently deliver production-grade output, even as teams scale output volume.

Multimodality amplifies the problem

Video generation rarely exists alone. It is often combined with text, audio and metadata. Scripts drive scenes. Audio timing influences pacing. Captions and overlays must align with visuals.

Without workflows, coordinating these modalities becomes manual and error-prone. Outputs drift out of sync. Iteration becomes slow.

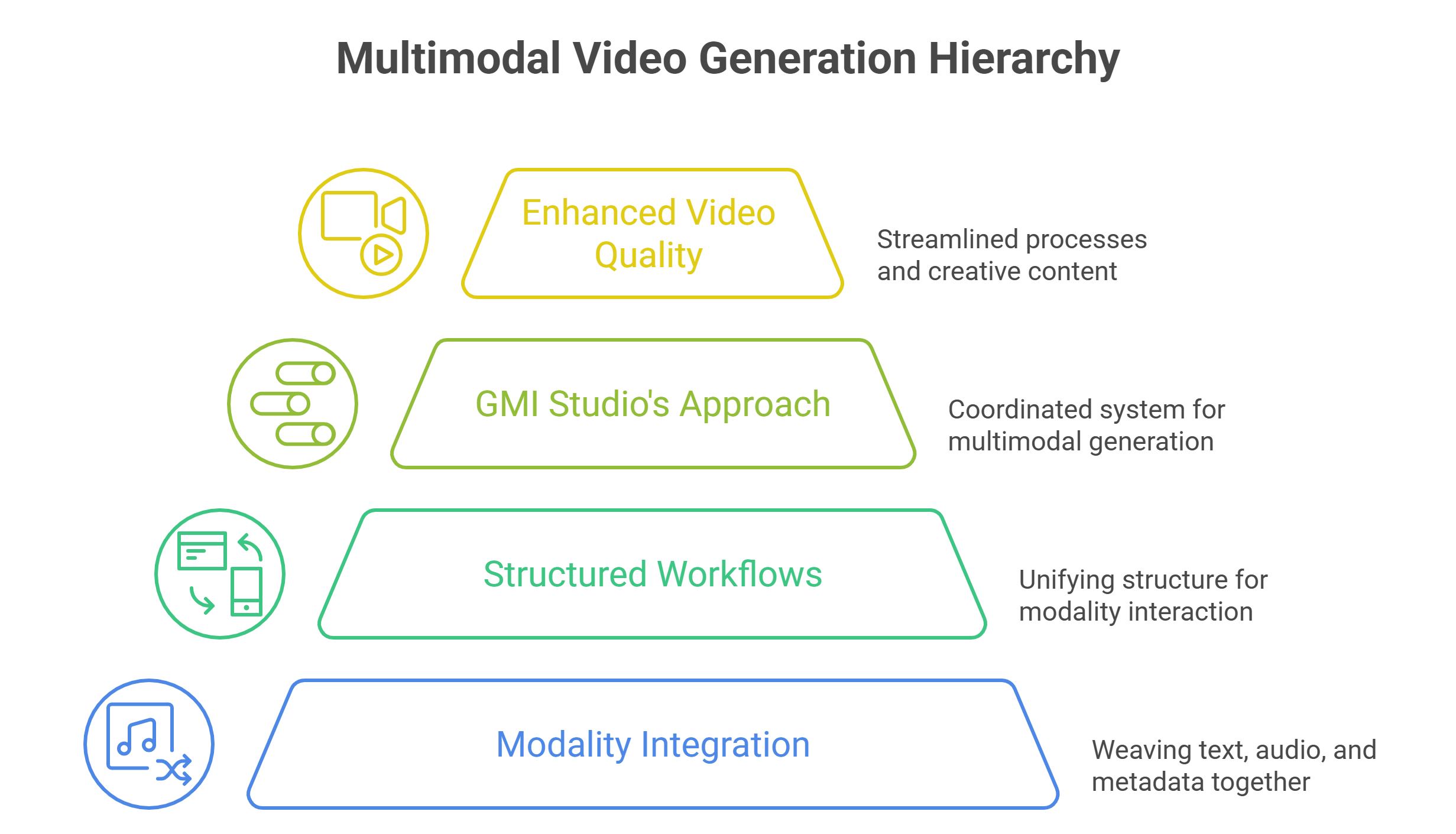

Workflows provide a unifying structure. Text, visual and audio components interact through defined interfaces. Changes propagate predictably. Teams can experiment without breaking alignment. This orchestration is central to how GMI Studio approaches video pipelines – treating multimodal generation as a coordinated system rather than isolated model calls.
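As a small illustration of "changes propagate predictably", the sketch below assumes captions and audio cues are stored relative to a scene rather than as absolute timestamps. The function names and cue format are invented for the example.

```python
def build_timeline(scenes):
    """Return {scene_id: (start_s, end_s)} from ordered (scene_id, duration_s) pairs."""
    timeline, cursor = {}, 0.0
    for scene_id, duration in scenes:
        timeline[scene_id] = (cursor, cursor + duration)
        cursor += duration
    return timeline


def place_cues(timeline, cues):
    """Resolve (scene_id, offset_s, text) cues into sorted absolute timestamps."""
    placed = []
    for scene_id, offset, text in cues:
        start, end = timeline[scene_id]
        if not 0 <= offset <= end - start:
            raise ValueError(f"cue {text!r} falls outside scene {scene_id}")
        placed.append((start + offset, text))
    return sorted(placed)


scenes = [("intro", 4.0), ("demo", 6.0), ("outro", 3.0)]
cues = [
    ("intro", 1.0, "caption: Welcome"),
    ("demo", 0.5, "audio: narration-2"),
    ("outro", 2.0, "caption: Thanks"),
]

before = place_cues(build_timeline(scenes), cues)

scenes[0] = ("intro", 5.5)  # lengthen the first scene
after = place_cues(build_timeline(scenes), cues)
```

Lengthening the intro shifts every later cue by the same amount without anyone editing timestamps by hand, and a cue that no longer fits inside its scene fails loudly instead of drifting out of sync.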

Iteration without structure slows teams down

Creative iteration is essential in video production. But without structure, iteration becomes expensive. Teams rerun entire generations because one element changed. GPU time is wasted. Human time is lost waiting for results.

Workflows enable targeted iteration. Teams can adjust a single stage – such as scene description or motion parameters – without regenerating everything. Parallel execution allows multiple variations to be explored simultaneously.
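Exploring variations in parallel can be sketched with Python's standard `concurrent.futures` module. `render_variation` here is a hypothetical stand-in that only builds a file name. In practice it would submit a job to a GPU-backed service, and the scene ID and motion strengths are made-up values.

```python
from concurrent.futures import ThreadPoolExecutor


def render_variation(scene, motion_strength):
    """Stand-in for a render call on a single scene with one motion setting."""
    return {
        "scene": scene,
        "motion_strength": motion_strength,
        "clip": f"{scene}-m{motion_strength}.mp4",
    }


scene = "scene-03"
strengths = [0.2, 0.5, 0.8]

# Only this one scene is re-rendered; the other scenes are left untouched.
with ThreadPoolExecutor(max_workers=3) as pool:
    variations = list(pool.map(lambda s: render_variation(scene, s), strengths))
```

`pool.map` returns results in the same order as the inputs, so the three clips can be compared side by side against the settings that produced them.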

This shift dramatically improves creative velocity. Instead of waiting, teams decide. AI video may not always be “instant”, but when structured correctly it is reliably good, cost-efficient and fast enough to outpace traditional production cycles.

Reproducibility is non-negotiable in production

For enterprises and studios, reproducibility is not optional. If a video asset needs to be regenerated, localized or revised, the process must be reliable.

Prompt-only approaches fail here. Small changes in wording or model behavior can lead to divergent results. Teams lose confidence in the system.

Workflows provide reproducibility by encoding the process itself. Versions can be tracked. Outputs can be audited. The same pipeline produces the same class of results.
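One simple way to track versions is to fingerprint a manifest that describes the whole run. The sketch below assumes the manifest captures everything that influences the output. The field names, the model name and the version string are placeholders.

```python
import hashlib
import json


def manifest_fingerprint(manifest):
    """Hash a canonical JSON form of the manifest so key order does not matter."""
    canonical = json.dumps(manifest, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


manifest = {
    "pipeline_version": "1.4.0",
    "model": "example-video-model",  # hypothetical model name
    "seed": 1234,
    "stages": {
        "render": {"fps": 24, "resolution": "1280x720"},
        "post": {"grade": "warm"},
    },
}

fp_a = manifest_fingerprint(manifest)
# Same content with the top-level keys in a different order.
fp_b = manifest_fingerprint(dict(reversed(list(manifest.items()))))
# One changed seed yields a different fingerprint.
fp_c = manifest_fingerprint({**manifest, "seed": 1235})
```

Storing the fingerprint next to each rendered asset makes it possible to audit which configuration produced it. A matching fingerprint identifies the same pipeline definition; it does not by itself guarantee bit-identical frames, since model backends can be nondeterministic.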

This reliability is what allows AI video generation to move from experimentation into production.

Scaling video generation exposes infrastructure limits

Video generation is computationally expensive. High resolution, long sequences and multimodal processing demand parallel execution. Local or single-GPU setups quickly become bottlenecks.

Without structured workflows, scaling is chaotic. Tasks queue up. Resources idle. Performance degrades.

Workflow-native execution environments distribute work across multiple GPUs intelligently. Parallelism is built into the design. Throughput scales with demand. In platforms like GMI Studio, orchestration and infrastructure are integrated, allowing teams to scale video pipelines while preserving both quality and cost predictability.
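To give a feel for what distributing work means, here is a deliberately simple scheduling heuristic: sort segments by estimated cost and hand each one to the least-loaded worker. This is a generic greedy approach chosen for illustration, not a description of how GMI Studio or any other platform schedules jobs, and the segment names and costs are invented.

```python
import heapq


def assign_segments(costs, num_workers):
    """Greedy longest-first assignment of segments to the least-loaded worker."""
    heap = [(0.0, w) for w in range(num_workers)]  # (current load, worker index)
    heapq.heapify(heap)
    plan = {w: [] for w in range(num_workers)}
    for name, cost in sorted(costs.items(), key=lambda kv: kv[1], reverse=True):
        load, worker = heapq.heappop(heap)
        plan[worker].append(name)
        heapq.heappush(heap, (load + cost, worker))
    loads = {w: sum(costs[n] for n in names) for w, names in plan.items()}
    return plan, loads


# Estimated render cost per segment, in arbitrary units (made-up numbers).
costs = {"seg-a": 8.0, "seg-b": 5.0, "seg-c": 4.0, "seg-d": 3.0, "seg-e": 2.0}
plan, loads = assign_segments(costs, num_workers=2)
```

With these numbers both workers end up with a load of 11 units, so the batch finishes in roughly half the time a single worker would need for all 22.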

Why creative teams need workflow-first platforms

Creative teams are not infrastructure teams. They should not have to manage GPUs, environments or orchestration to produce video.

Workflow-first platforms abstract this complexity while preserving control. Visual pipelines allow creators to design logic without code. Execution happens reliably in the background.

This separation empowers creativity. Teams focus on storytelling and design, not system maintenance. The business outcome is consistent velocity, with high-quality video assets delivered at a sustainable cost structure.

Building video systems that don’t collapse under scale

As AI video generation moves from experimentation to real production use, the tolerance for inconsistency drops to zero. Creative teams need to know that a sequence can be regenerated, revised, extended or localized without starting over or introducing visual drift. That level of confidence does not come from better prompts or larger models alone. It comes from treating video generation as a system.

Structured workflows turn video pipelines into repeatable processes rather than fragile one-off runs. They make it possible to lock visual identity, preserve temporal coherence, and evolve outputs incrementally instead of regenerating everything from scratch. Just as importantly, workflows create a shared foundation between creative teams and production environments, allowing experimentation without sacrificing reliability. This is the philosophy behind GMI Studio’s approach to AI video: orchestrated, reproducible pipelines that balance quality and cost while maintaining production-grade control.

AI video will continue to push hardware, models and creativity forward. The teams that succeed will not be the ones chasing the latest model release, but the ones building workflows that make complex generation predictable, scalable and production-ready.

Frequently Asked Questions About Why AI Video Generation Breaks Without Structured Workflows

Why does AI video generation often fail when using prompts alone?

Prompts work for simple outputs, but video generation involves many stages and dependencies. When everything is controlled only by a prompt, the system cannot maintain continuity across scenes, characters or timing, which leads to unstable and inconsistent results.

Why is AI video generation more complex than image generation?

Video requires temporal consistency across frames. Characters, lighting, environments and camera angles must remain stable while motion unfolds over time. Small inconsistencies that might go unnoticed in a single image become very obvious when viewed as a sequence.

How do structured workflows improve AI video generation?

Workflows break the generation process into clear stages such as scripting, scene generation, motion creation and post-processing. Each step becomes repeatable and adjustable, which allows teams to refine specific parts of the pipeline without rebuilding the entire video.

Why is state important in AI video generation workflows?

State allows the system to carry information forward between stages. Reference frames, visual constraints or embeddings can maintain character identity, lighting and scene details so that each segment builds on the previous one instead of drifting visually.

How do workflows support multimodal video production?

Video projects often involve text scripts, visual scenes and audio elements that must stay aligned. Workflows coordinate how these components interact so timing, narration and visuals remain synchronized during generation and iteration.
