This article breaks down the most common GPU inference bottlenecks seen in production AI systems and explains how modern inference architectures are designed to prevent performance slowdowns before they impact users or costs.

From this article, you’ll learn:

- why GPU inference bottlenecks rarely have a single root cause
- how poor batching leads to underutilized GPUs and higher cost per request
- when aggressive batching creates queueing delays and latency spikes
- why sequential execution slows down multi-step and agentic inference pipelines
- how intelligent, workload-aware GPU scheduling stabilizes performance
- where memory pressure and fragmentation limit inference scalability
- why networking becomes a critical constraint in distributed inference systems
- how cold starts and slow scaling degrade performance during traffic spikes
- why lack of observability allows bottlenecks to persist unnoticed
- how adaptive, bottleneck-resistant architectures balance performance and cost

Inference performance rarely fails for a single reason. In most production AI systems, slowdowns emerge from a combination of architectural blind spots, inefficient execution patterns and infrastructure mismatches that only become visible under real traffic.

GPUs are powerful, but they are also unforgiving: when data, scheduling or orchestration falls out of sync, performance degrades quickly.

By 2026, inference workloads will dominate compute spend for many AI teams. That makes understanding and eliminating bottlenecks not just a performance exercise, but an economic one.

The following are the most common GPU inference bottlenecks seen in production systems today and how modern inference architectures avoid them.

1. Underutilized GPUs due to poor batching

One of the most common inefficiencies in inference systems is low GPU utilization caused by inadequate batching. Requests arrive individually, are executed immediately and leave most of the GPU’s parallel capacity unused.

This often happens in systems optimized exclusively for low latency without accounting for request volume. Immediate execution minimizes response time for each individual request, but it raises cost per query substantially once traffic is high enough that several requests could have shared a single batch.

Avoiding this bottleneck requires adaptive batching. Instead of fixed batch sizes, inference systems should batch dynamically based on queue depth, latency tolerance and model characteristics. Well-designed batching strategies preserve responsiveness while dramatically improving throughput and GPU efficiency.
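As a rough illustration of batching on queue depth and latency tolerance, the sketch below dispatches a batch when either the queue is full enough or the oldest request has waited as long as its budget allows. The names `BatchPolicy`, `should_dispatch` and `next_batch_size`, and the default limits, are hypothetical and chosen only for this example.

```python
from dataclasses import dataclass


@dataclass
class BatchPolicy:
    max_batch_size: int = 8    # hypothetical cap, set by model and memory limits
    max_wait_ms: float = 10.0  # hypothetical latency budget for forming a batch


def should_dispatch(queue_depth: int, oldest_wait_ms: float, policy: BatchPolicy) -> bool:
    """Dispatch when the batch is full or the oldest request has waited long enough."""
    if queue_depth == 0:
        return False
    return queue_depth >= policy.max_batch_size or oldest_wait_ms >= policy.max_wait_ms


def next_batch_size(queue_depth: int, policy: BatchPolicy) -> int:
    """Take whatever is queued, up to the configured cap."""
    return min(queue_depth, policy.max_batch_size)
```

Under light traffic the wait budget bounds the added latency, and under heavy traffic batches fill before the budget expires, so the same policy covers both regimes.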

2. Queueing delays from naive request handling

At the opposite extreme, aggressive batching can introduce queueing delays that harm user experience. When systems wait too long to accumulate large batches, requests stall in queues, inflating tail latency.

This bottleneck often appears in throughput-first designs that lack awareness of request priority. Latency-sensitive traffic gets trapped behind background jobs, and p95 or p99 latency spikes unpredictably.

The solution is workload-aware scheduling. Requests must be classified and routed based on latency tolerance, model type and execution cost. Latency-critical traffic should bypass deep queues, while batch-friendly workloads can absorb short waits without issue.
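One simple way to let latency-critical traffic bypass background work is a priority queue. The sketch below is a minimal version built on Python's `heapq`; the two traffic classes and the `PriorityRequestQueue` name are assumptions made for illustration, not a description of any particular scheduler.

```python
import heapq
import itertools

LATENCY_CRITICAL, BATCH_FRIENDLY = 0, 1  # lower value is served first


class PriorityRequestQueue:
    def __init__(self) -> None:
        self._heap = []
        self._counter = itertools.count()  # tie-breaker keeps FIFO order within a class

    def submit(self, request_id: str, priority: int) -> None:
        heapq.heappush(self._heap, (priority, next(self._counter), request_id))

    def pop(self) -> str:
        """Return the next request: highest priority first, oldest first within a class."""
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)
```

Strict priority like this can starve background jobs under sustained interactive load, so a production scheduler would likely add aging or per-class capacity limits.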

3. Sequential execution of multi-step pipelines

Modern inference rarely consists of a single forward pass. Real systems chain together retrieval, reranking, generation, filtering and post-processing steps. When these stages execute sequentially on a single GPU, end-to-end latency is the sum of every stage, so each added step lengthens the response time.

This bottleneck is especially damaging in agentic systems, where multiple generations and evaluations occur per user interaction. Sequential execution compounds latency and wastes available compute capacity.

Parallel execution is essential. Multi-stage pipelines should distribute work across multiple GPUs, allowing stages to overlap rather than block each other. Parallelism shortens critical paths and increases overall system throughput without sacrificing responsiveness.
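To make the critical-path argument concrete, the sketch below compares the latency of running every stage back to back with the latency when independent stages overlap. The stage names, latencies and dependency graph are invented for illustration only.

```python
from functools import lru_cache

# Hypothetical stage latencies in milliseconds.
STAGE_MS = {"retrieve": 40, "rerank": 20, "generate_a": 300, "generate_b": 280, "filter": 15}

# Hypothetical dependencies: the two generations are independent of each other.
DEPENDS_ON = {
    "retrieve": [],
    "rerank": ["retrieve"],
    "generate_a": ["rerank"],
    "generate_b": ["rerank"],
    "filter": ["generate_a", "generate_b"],
}


def sequential_ms() -> int:
    """Latency when every stage runs one after another."""
    return sum(STAGE_MS.values())


@lru_cache(maxsize=None)
def finish_ms(stage: str) -> int:
    """Earliest finish time of a stage when independent stages run in parallel."""
    start = max((finish_ms(dep) for dep in DEPENDS_ON[stage]), default=0)
    return start + STAGE_MS[stage]


def critical_path_ms() -> int:
    """Latency of the longest dependency chain through the pipeline."""
    return max(finish_ms(stage) for stage in STAGE_MS)
```

With these made-up numbers, sequential execution takes 655 ms while the overlapped pipeline takes 375 ms, because the two generations run side by side. The estimate ignores transfer costs between GPUs.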

4. Inadequate GPU scheduling

Treating GPUs as interchangeable resources is another common mistake. Not all inference requests place the same demands on memory, compute or execution time. Routing everything to the same GPU pool leads to contention, fragmentation and unpredictable performance.

Scheduling bottlenecks arise when large-context requests monopolize memory, small jobs block behind expensive ones or GPUs sit idle while others are overloaded.

Effective inference systems schedule workloads based on model size, batch characteristics, memory footprint and real-time availability. Intelligent scheduling increases utilization while stabilizing latency across mixed workloads.
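A minimal placement rule along these lines might filter GPUs by free memory and then prefer the shortest queue. The `GpuState` fields and the tie-breaking choice below are assumptions for the sake of the example; real schedulers weigh more signals.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class GpuState:
    name: str
    free_memory_gb: float
    queued_requests: int


def pick_gpu(gpus: List[GpuState], required_memory_gb: float) -> Optional[GpuState]:
    """Choose a GPU that fits the request, preferring the least busy one."""
    candidates = [g for g in gpus if g.free_memory_gb >= required_memory_gb]
    if not candidates:
        return None  # caller can queue the request or trigger scaling
    # Shortest queue first; on ties, take the tightest memory fit so that
    # GPUs with more free memory stay available for large-context requests.
    return min(candidates, key=lambda g: (g.queued_requests, g.free_memory_gb))
```

For example, given GPUs with 10, 40 and 80 GB free, a 30 GB request goes to the 40 GB device when queues are equal, leaving the 80 GB device open for larger work.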

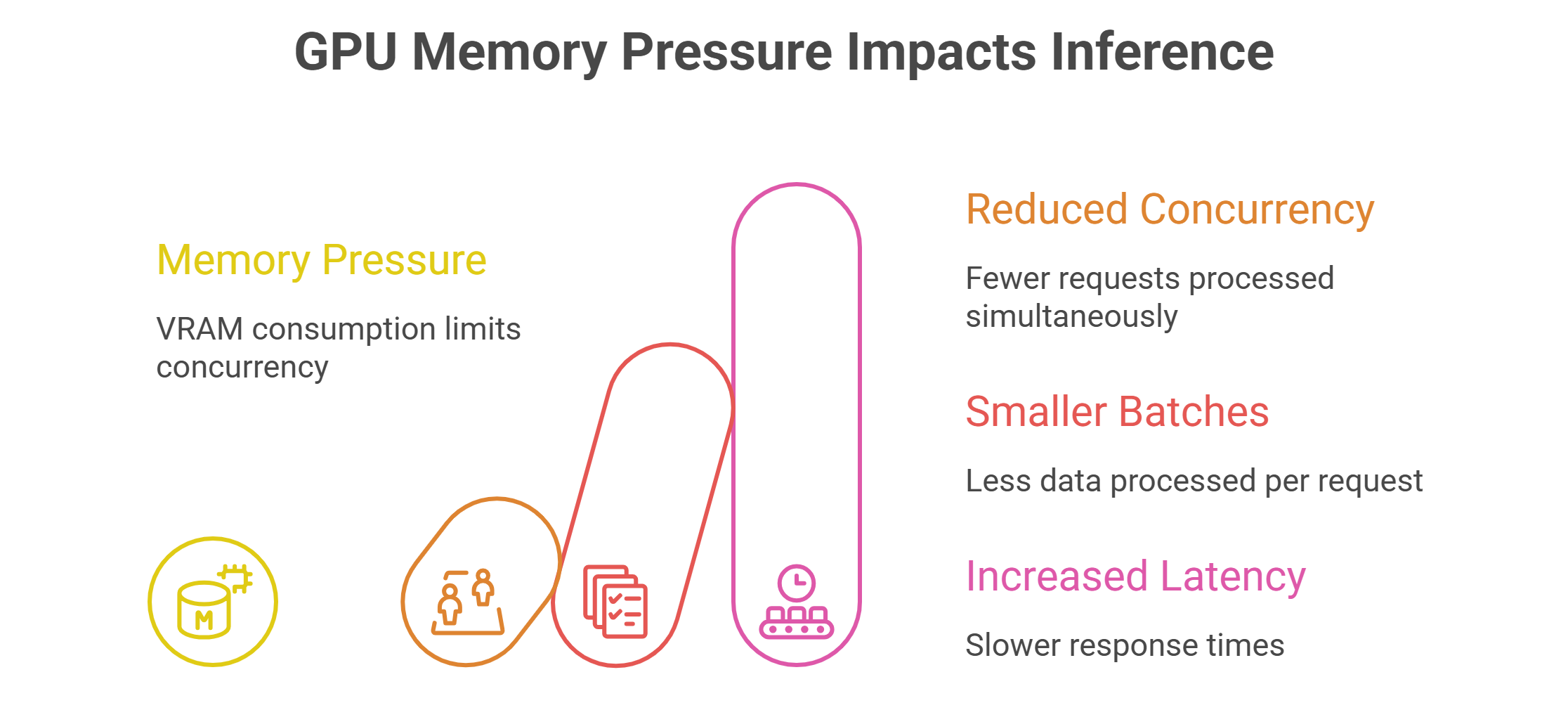

5. Memory pressure and fragmentation

GPU memory is often the hidden constraint in inference performance. Large context windows, multimodal inputs and KV caches quickly consume VRAM, limiting concurrency and forcing smaller batch sizes.

Fragmentation compounds the problem. Even when total free memory appears sufficient, fragmented allocation can prevent efficient execution.

Avoiding memory bottlenecks requires careful management of model placement, batch sizing and cache lifecycles. Some systems benefit from separating models across GPU pools or using fractional GPUs for smaller workloads to preserve headroom for larger requests.
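A back-of-the-envelope estimate shows how quickly KV caches limit concurrency. The sketch below uses the standard sizing for a transformer KV cache (keys and values stored per layer, per KV head, per token); the model dimensions in the usage note are hypothetical, and the estimate ignores weights, activations and allocator overhead.

```python
def kv_cache_bytes(
    num_layers: int,
    num_kv_heads: int,
    head_dim: int,
    seq_len: int,
    bytes_per_element: int = 2,  # 2 bytes for fp16/bf16
) -> int:
    """KV cache size for one sequence: keys plus values for every layer and token."""
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_element


def max_concurrent_sequences(free_vram_bytes: int, per_sequence_bytes: int) -> int:
    """Upper bound on sequences whose KV caches fit in the remaining VRAM."""
    return free_vram_bytes // per_sequence_bytes
```

For an assumed model with 32 layers, 8 KV heads and a head dimension of 128, a single 8,192-token sequence in fp16 needs `kv_cache_bytes(32, 8, 128, 8192)`, which is exactly 1 GiB. With 40 GiB of VRAM left after loading weights, that caps concurrency at 40 such sequences before fragmentation is even considered.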

6. Networking limitations in distributed inference

As inference pipelines scale across multiple GPUs and nodes, networking becomes a critical factor. Slow interconnects introduce latency when transferring embeddings, activations or intermediate results between stages.

This bottleneck is often overlooked during early deployment but becomes unavoidable as systems grow. Distributed inference pipelines demand high-bandwidth, low-latency networking to maintain performance.

Inference architectures must be designed with networking in mind, minimizing unnecessary data movement and colocating tightly coupled stages where possible. High-speed interconnects are not optional at scale.
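The cost of moving data between stages can be estimated as a fixed latency plus payload size divided by bandwidth. The payload size and link speeds below are illustrative assumptions, not measurements from any specific interconnect.

```python
def transfer_ms(
    payload_bytes: float,
    bandwidth_bytes_per_s: float,
    base_latency_ms: float = 0.0,
) -> float:
    """Estimated one-way transfer time: fixed latency plus time on the wire."""
    return base_latency_ms + payload_bytes / bandwidth_bytes_per_s * 1000.0


PAYLOAD = 100e6      # hypothetical 100 MB of intermediate results per request
SLOW_LINK = 1.25e9   # 10 Gb/s expressed in bytes per second
FAST_LINK = 12.5e9   # 100 Gb/s expressed in bytes per second

slow_ms = transfer_ms(PAYLOAD, SLOW_LINK)  # roughly 80 ms
fast_ms = transfer_ms(PAYLOAD, FAST_LINK)  # roughly 8 ms
```

Under these assumptions a single hop over the slower link adds about 80 ms per request, which is why colocating tightly coupled stages or shrinking what crosses the network often matters more than tuning the stages themselves.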

7. Cold starts and slow scaling

Inference workloads are often bursty. Traffic spikes unexpectedly, then recedes. Systems that cannot scale GPU capacity quickly suffer from cold starts, dropped requests or degraded latency during peaks.

This bottleneck is common in static deployments or platforms with slow provisioning. Even brief scaling delays can translate into poor user experience.

Avoiding this requires fast, elastic scaling mechanisms that can spin up GPU capacity quickly and route traffic intelligently. Predictive scaling based on historical patterns can further reduce cold-start impact.
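Predictive scaling can start from something as simple as averaging traffic seen at the same hour on previous days and provisioning ahead of it. The sketch below assumes a known per-replica throughput and a fixed headroom factor; both parameters and the function names are hypothetical.

```python
import math
from statistics import mean
from typing import List


def forecast_rps(same_hour_history: List[float]) -> float:
    """Naive forecast: average request rate seen at this hour on previous days."""
    return mean(same_hour_history)


def replicas_needed(
    expected_rps: float,
    per_replica_rps: float,
    headroom: float = 1.2,   # hypothetical 20% safety margin for bursts
    min_replicas: int = 1,   # keep at least one warm replica to avoid cold starts
) -> int:
    """GPU replicas to have warm before the forecast traffic arrives."""
    return max(min_replicas, math.ceil(expected_rps * headroom / per_replica_rps))
```

If the last three days saw 90, 110 and 100 requests per second at this hour and one replica sustains 25 requests per second, the forecast is 100 and the target is 5 replicas. A real system would combine this with reactive scaling, since a forecast based on averages misses unprecedented spikes.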

8. Lack of observability into inference behavior

Many inference bottlenecks persist simply because teams cannot see them clearly. Without detailed visibility into GPU utilization, queue depth, batching efficiency and latency distribution, optimization becomes guesswork.

High-level metrics hide localized issues such as uneven GPU load, pathological batching or memory fragmentation. These problems often surface only in tail latency or cost anomalies.

Production-grade inference systems require fine-grained observability that correlates performance metrics with infrastructure behavior. Without it, bottlenecks are discovered too late – usually through user complaints or cost overruns.
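A small example shows why averages alone are not enough. The sketch below computes nearest-rank percentiles over a set of latency samples; the sample values are invented so that a small fraction of requests is very slow.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("percentile requires at least one sample")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical latencies in ms: 95 fast requests and 5 stuck behind a large batch.
latencies_ms = [50.0] * 95 + [900.0] * 5

mean_ms = sum(latencies_ms) / len(latencies_ms)
p50_ms = percentile(latencies_ms, 50)
p95_ms = percentile(latencies_ms, 95)
p99_ms = percentile(latencies_ms, 99)
```

Here the mean is 92.5 ms and both p50 and p95 are 50 ms, while p99 is 900 ms. Only the tail percentile exposes the stalled requests, which is why latency distributions need to be tracked alongside GPU utilization and queue depth.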

9. Cost inefficiencies caused by static allocation

Inference bottlenecks are not always about speed. Static GPU allocation often leads to paying for capacity that sits idle or fails to align with actual workload patterns.

Reserved resources can reduce per-hour costs, but when workloads fluctuate, unused GPUs become stranded capacity. On-demand resources offer flexibility but require intelligent orchestration to avoid overspending.

Modern inference architectures balance these models dynamically, aligning cost structure with workload behavior rather than forcing teams into one-size-fits-all provisioning.
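The trade-off between reserved and on-demand capacity reduces to a break-even utilization: reserved capacity is billed for every hour, on-demand only for hours actually used. The hourly rates in the usage note are made-up figures for illustration, not real prices.

```python
def monthly_cost_reserved(rate_per_hour: float, hours_in_month: float = 730.0) -> float:
    """Reserved GPUs are paid for every hour, whether busy or idle."""
    return rate_per_hour * hours_in_month


def monthly_cost_on_demand(rate_per_hour: float, busy_hours: float) -> float:
    """On-demand GPUs are paid for only while they are running."""
    return rate_per_hour * busy_hours


def break_even_utilization(reserved_rate: float, on_demand_rate: float) -> float:
    """Fraction of hours a GPU must be busy for reserved pricing to be cheaper."""
    return reserved_rate / on_demand_rate
```

With an assumed reserved rate of 2.00 per hour and an on-demand rate of 4.00 per hour, the break-even point is 50% utilization. A workload that keeps a GPU busy 200 hours a month costs 800 on demand versus 1,460 reserved, so only the steady baseline is worth reserving.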

Designing bottleneck-resistant inference systems

Avoiding inference bottlenecks is less about tuning a single parameter and more about designing systems that adapt continuously. Effective architectures combine adaptive batching, intelligent scheduling, parallel execution and elastic scaling into a cohesive control plane.

The most resilient systems treat inference as a living workload, not a static deployment. They observe behavior in real time, adjust execution strategies dynamically and evolve alongside application demands.

As inference continues to dominate AI economics, teams that proactively eliminate bottlenecks gain a decisive advantage – not just in performance, but in cost control and iteration speed.

GMI Cloud enables this approach by providing inference-optimized GPU infrastructure with intelligent scheduling, high-bandwidth networking and elastic scaling designed to surface and eliminate bottlenecks before they limit performance.

Frequently Asked Questions About Common GPU Inference Bottlenecks (and How to Avoid Them)

1. Why do GPU inference slowdowns usually come from multiple issues at once?

In production, performance rarely breaks for a single reason because data movement, batching, scheduling and orchestration all interact under real traffic. When any of these layers falls out of sync, GPUs can’t stay efficiently busy and latency or cost problems show up fast.

2. How does poor batching lead to underutilized GPUs during inference?

If requests are handled one by one and executed immediately, most of the GPU’s parallel capacity sits unused, so utilization stays low and cost per query rises. Adaptive batching avoids this by forming batches dynamically based on queue depth, latency tolerance and model characteristics.

3. Why can aggressive batching create queueing delays and worse tail latency?

When a system waits too long to build large batches, requests pile up in queues and p95/p99 latency can spike unpredictably. Workload-aware scheduling reduces this by classifying requests and letting latency-sensitive traffic bypass deep queues while batch-friendly jobs tolerate short waits.

4. What makes multi-step inference pipelines slow when they run sequentially?

Many inference workloads chain retrieval, reranking, generation, filtering and post-processing, and running these stages in strict sequence stretches the critical path. Parallel execution helps by distributing stages across multiple GPUs so work can overlap instead of blocking.

5. Why is GPU scheduling a bigger lever than people expect?

Inference requests don’t all consume the same memory, compute or time, so treating GPUs as interchangeable can cause contention, fragmentation and unstable latency. Smarter scheduling routes work based on model size, batch traits, memory footprint and real-time availability to keep utilization high without unpredictable slowdowns.
